NVIDIA GTC Keynote 2026 ~ How It Supports Next-Generation Intelligent Systems ~


Hello.

I am Takaaki Tanaka from the Smart Factory Team in the Manufacturing Business Technology Department.

Introduction

Watch NVIDIA Founder and CEO Jensen Huang's GTC keynote as he unveils the latest breakthroughs in AI and accelerated computing. See how agentic AI, AI factories, and physical AI are powering the next generation of intelligent systems.

This is a summary of NVIDIA CEO Jensen Huang's NVIDIA GTC 2026 keynote.

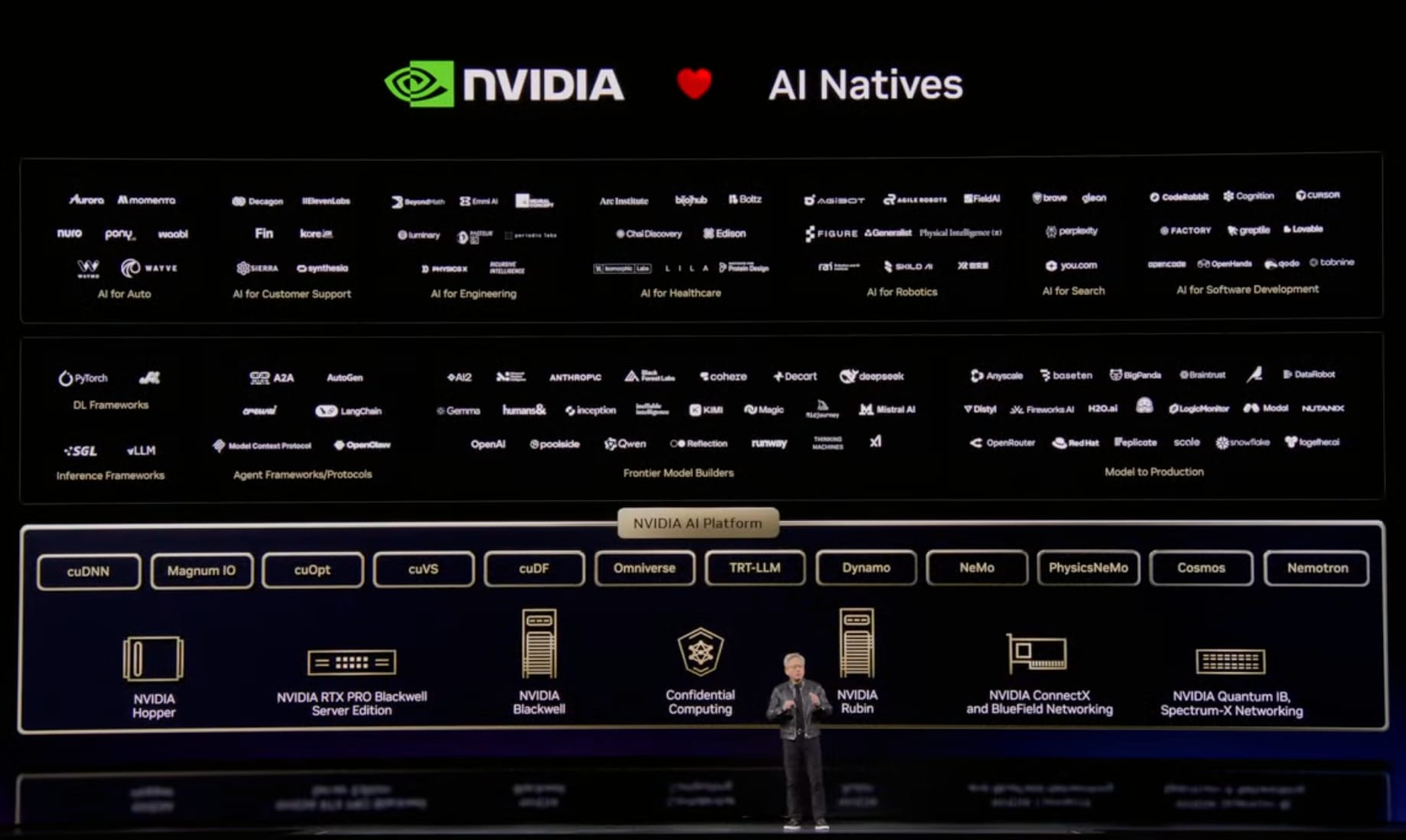

NVIDIA's 3 Platforms

NVIDIA has developed 3 platforms:

- CUDA X: Algorithm libraries

- Systems: Computing systems

- AI Factory: Newly announced AI infrastructure

CUDA's 20th Anniversary and Flywheel Effect

CUDA has reached its 20th anniversary. Its SIMT (Single Instruction, Multiple Threads) architecture has made it easy to turn scalar code into multi-threaded applications.

The core of NVIDIA's strategy is the "flywheel effect":

- Large install base attracts developers

- Developers create new algorithms and achieve breakthroughs

- Breakthroughs create new markets

- New markets expand the ecosystem, further increasing the install base

This cycle has even pushed up cloud prices for Ampere GPUs, which first shipped 6 years ago.

Continuous software optimization has significantly extended the useful life of GPUs.

GeForce 25th Anniversary and AI's Big Bang

GeForce invented programmable shaders (pixel shaders) 25 years ago.

It was the world's first programmable accelerator.

Because GeForce carried CUDA to users around the world, Alex Krizhevsky, Ilya Sutskever, Geoff Hinton, and Andrew Ng were able to discover that GPUs could accelerate deep learning. That discovery marked the beginning of the AI big bang 10 years ago.

About 8 years ago, NVIDIA introduced RTX, combining hardware ray tracing with AI.

DLSS 5 and Neural Rendering

NVIDIA announced "neural rendering," a next-generation graphics technology that fuses 3D graphics with AI.

- Structured data: Controllable and predictable 3D graphics

- Generative AI: Probabilistic but highly realistic

Combining the two makes it possible to generate content that is both beautiful and controllable. The same pattern is expected to repeat across all industries, with structured data forming the foundation of "trustworthy AI."

Data Processing Innovation: cuDF and cuVS

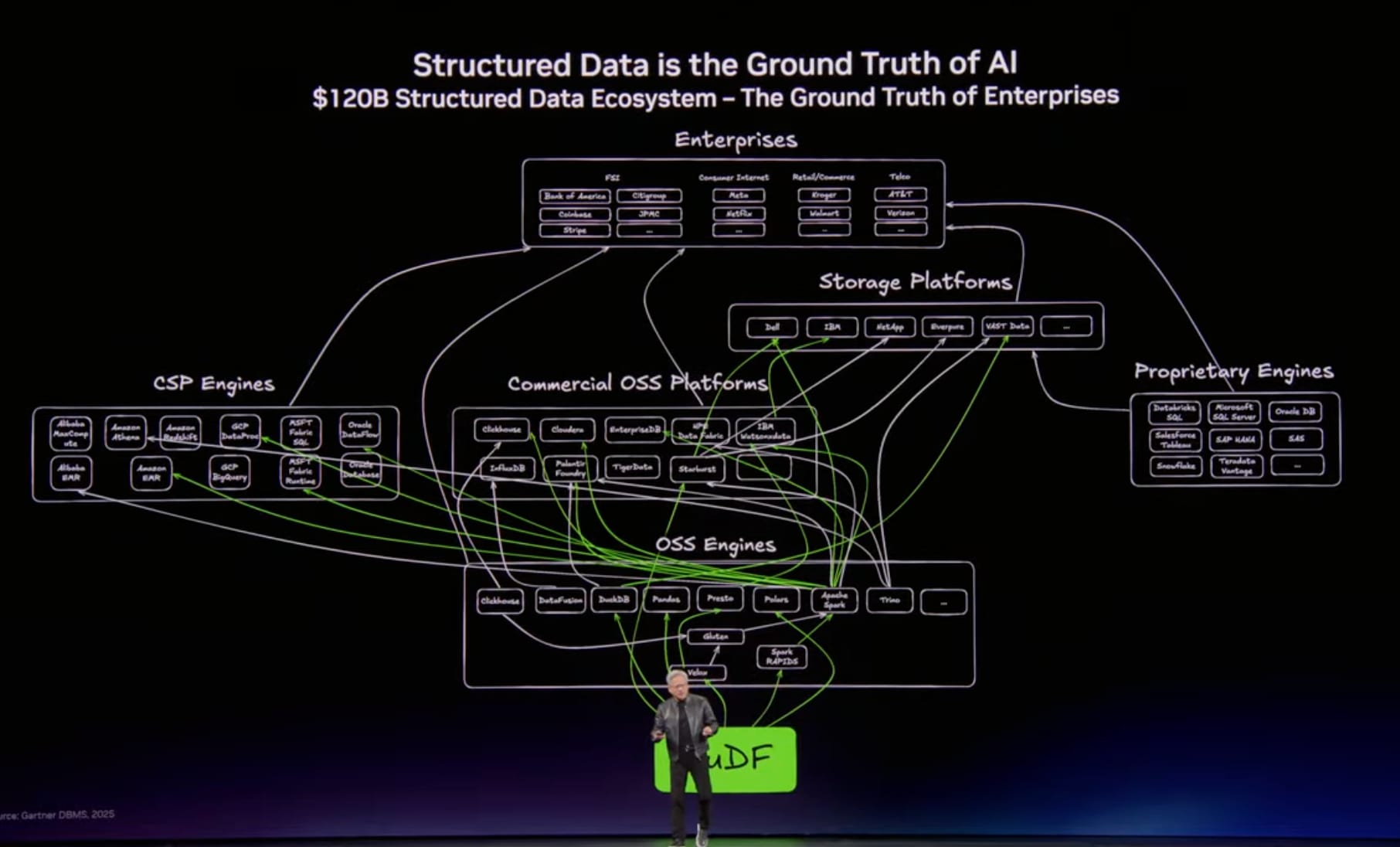

Structured Data

Structured data means the data frames processed by engines such as SQL, Spark, Pandas, and Velox. It is used in Snowflake, Databricks, Amazon EMR, Azure Fabric, Google Cloud BigQuery, and similar services. This is the "ground truth" of business.

AI agents will use this data far faster than humans do, so it needs to be accelerated throughout.

Unstructured Data

About 90% of the data generated each year is unstructured: PDFs, video, audio, and so on. Such content used to be difficult to query or search, but AI's multimodal perception can now understand it, vectorize it, and make it searchable.

Two Foundation Libraries

- cuDF: For data frames (structured data)

- cuVS: For vector stores (unstructured, semantic data)
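
To make "vectorize and make searchable" concrete, here is a minimal sketch of the nearest-neighbor lookup that a vector store performs. It is plain Python rather than the cuVS API, and the file names and 3-dimensional embeddings are hypothetical. Real embeddings come from an embedding model and have hundreds or thousands of dimensions, which is where GPU acceleration pays off.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query, store, k=2):
    """Return the k document ids whose embeddings are most similar to the query."""
    scored = [(cosine_similarity(query, vec), doc_id) for doc_id, vec in store.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]


# Hypothetical embeddings for three unstructured documents
store = {
    "invoice.pdf": [0.9, 0.1, 0.0],
    "factory_tour.mp4": [0.1, 0.8, 0.3],
    "earnings_call.wav": [0.7, 0.2, 0.1],
}

query = [1.0, 0.0, 0.0]  # hypothetical embedding of the user's question
results = top_k(query, store, k=2)
print(results)
```

This brute-force scan compares the query against every stored vector. Production vector stores typically use approximate indexes so they do not have to.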

Cloud Partnerships

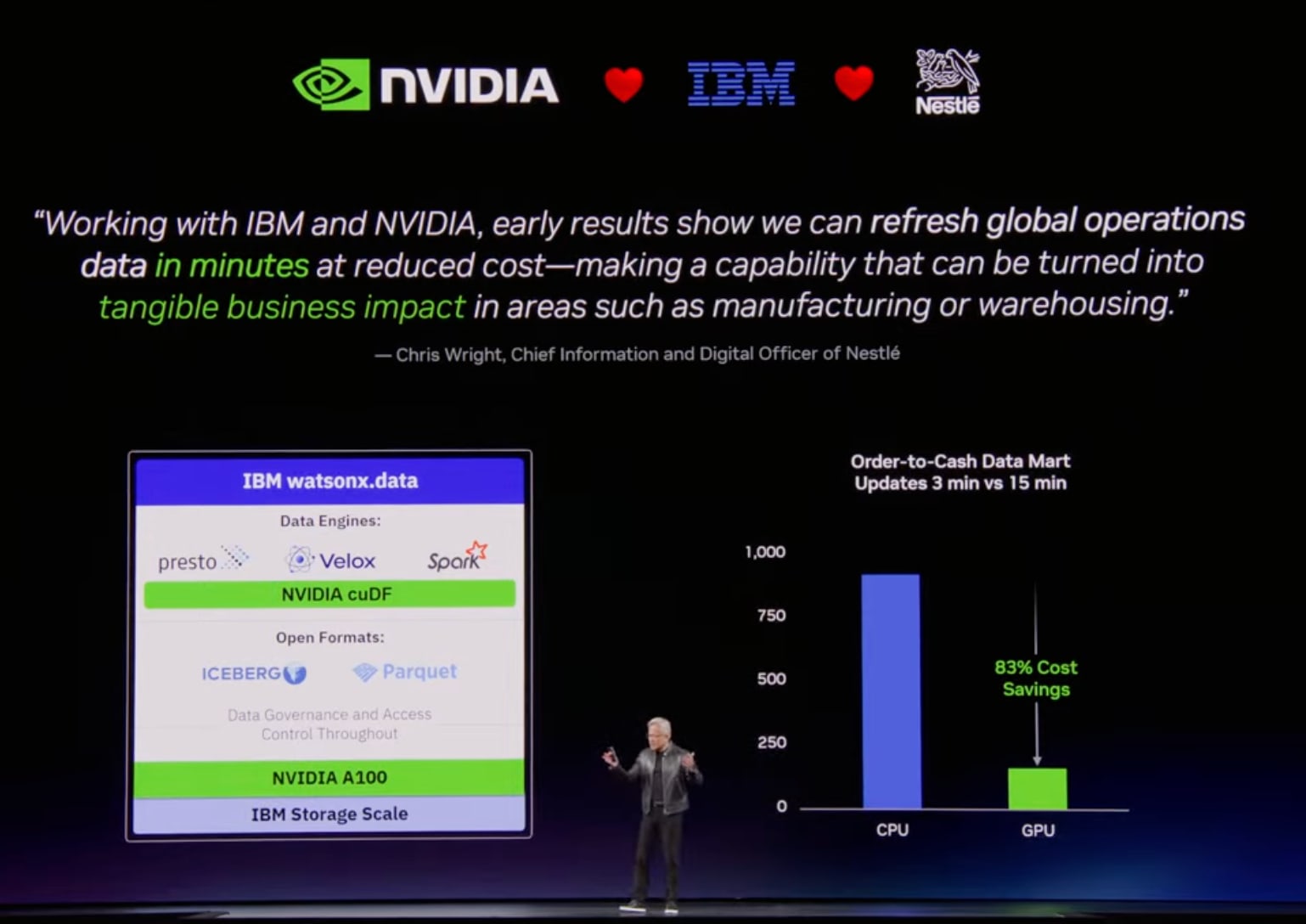

IBM

NVIDIA is collaborating with IBM, the inventor of SQL, to accelerate watsonx.data with cuDF. As a result, Nestlé can now process supply chain data across 185 countries 5 times faster and at 83% lower cost.

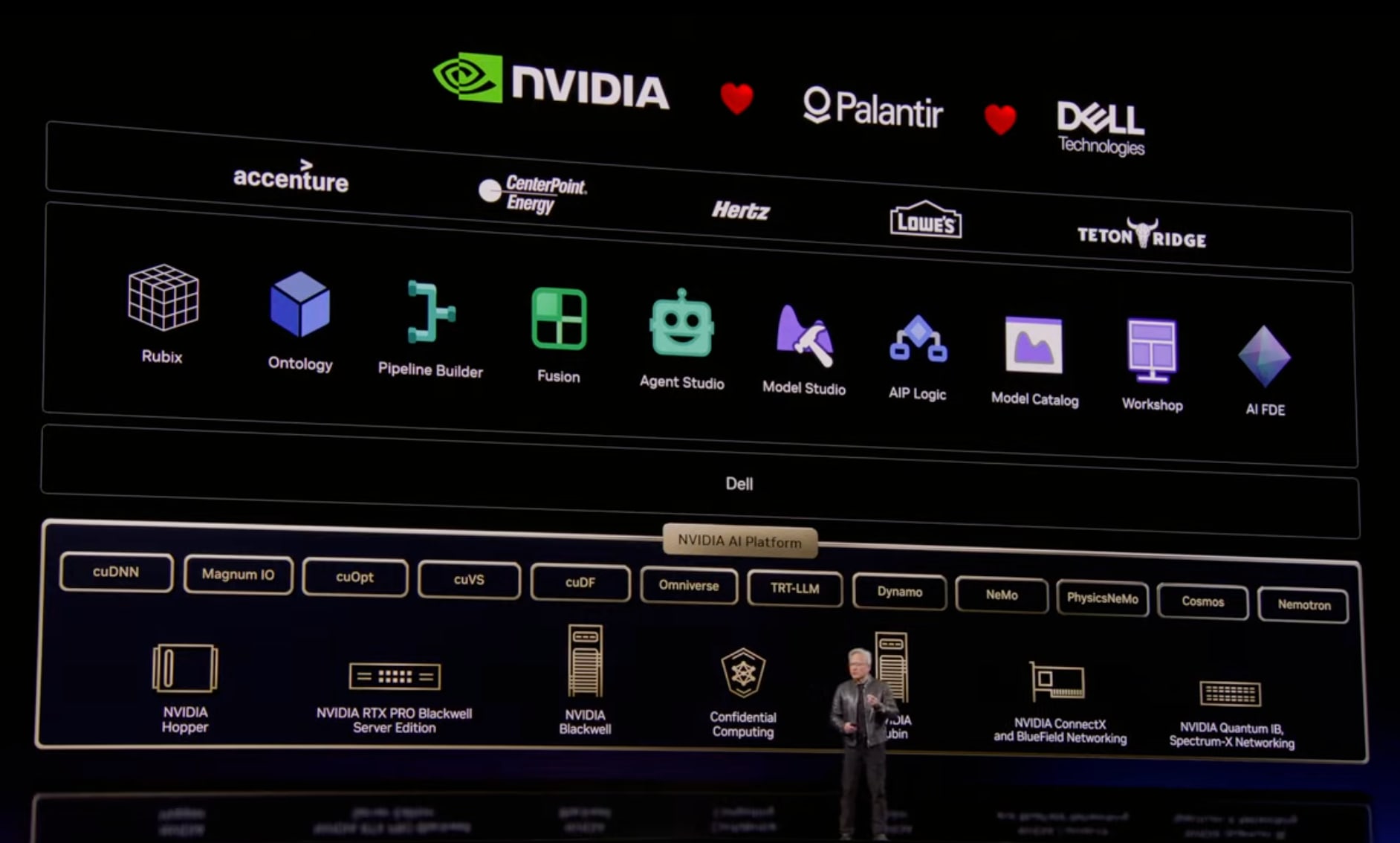

Dell

Dell announced the Dell AI Data Platform, which integrates cuDF and cuVS. A collaboration with NTT Data has achieved significant speed improvements.

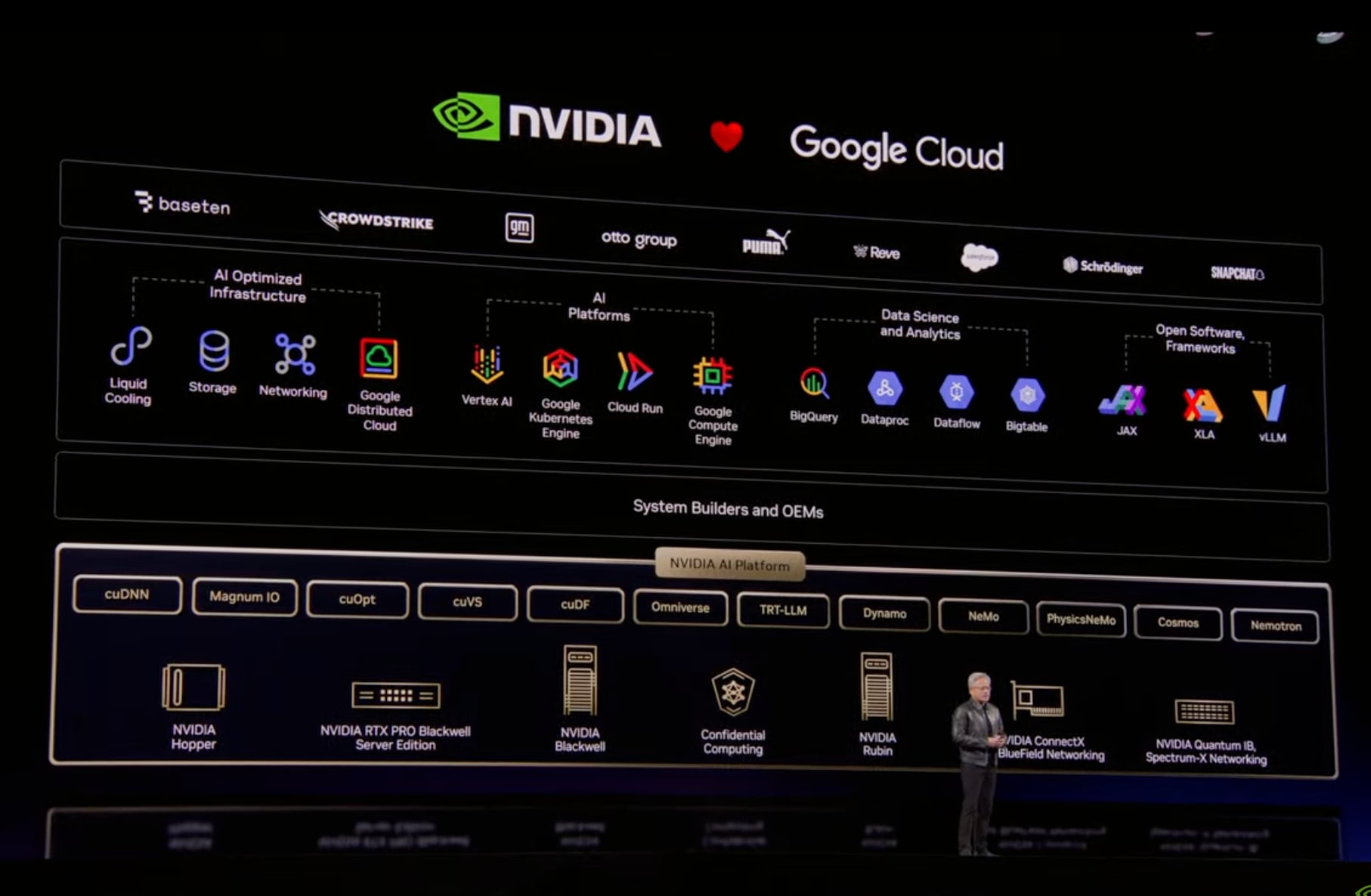

Google Cloud

NVIDIA is accelerating Vertex AI and BigQuery. Snapchat's computing costs were reduced by approximately 80%.

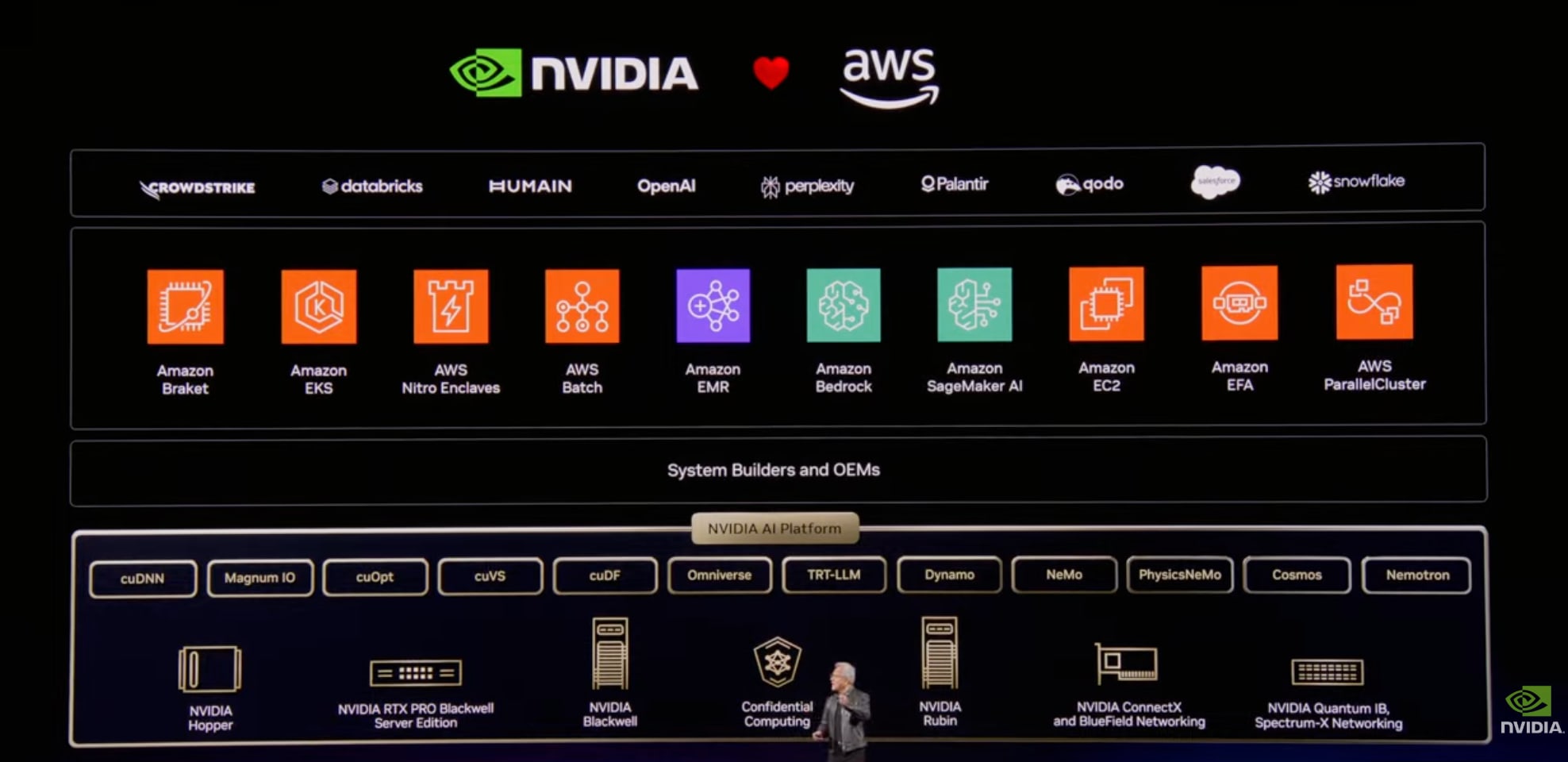

AWS

Accelerating EMR, SageMaker, and Bedrock.

This year, bringing OpenAI to AWS is expected to greatly expand consumption. AWS was NVIDIA's first cloud partner.

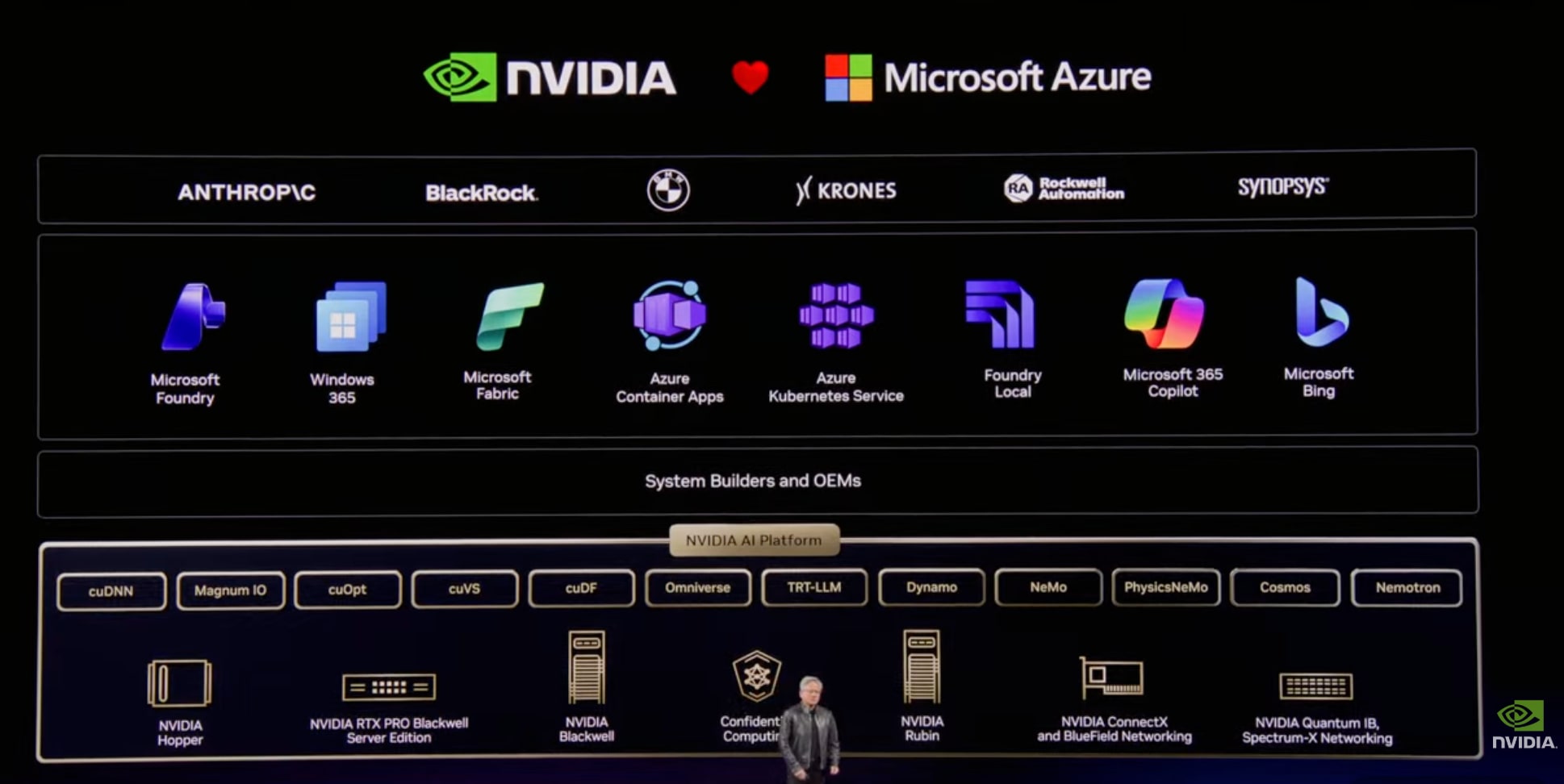

Microsoft Azure

Azure was the first to deploy NVIDIA's A100 supercomputer, which led to their success with OpenAI. Accelerating AI Foundry and Bing search.

Confidential computing allows deploying OpenAI and Anthropic models in environments where even operators cannot see the data or models.

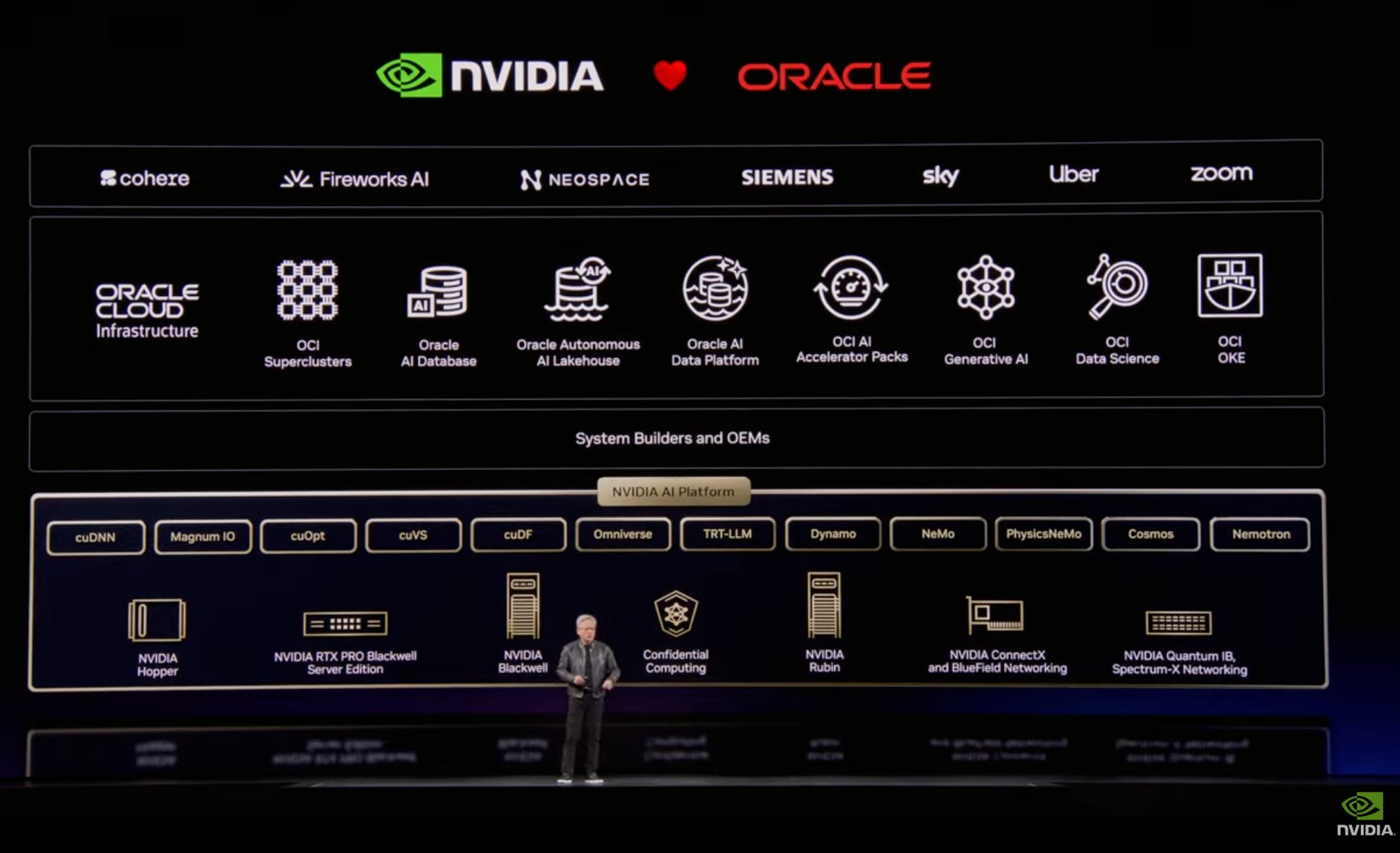

Oracle

NVIDIA was Oracle's first AI customer and also their first supplier. Deploying Cohere, Fireworks, OpenAI, and others.

Palantir + Dell

NVIDIA, Palantir, and Dell are building an AI platform that can be fully deployed in air-gapped environments or on-premises. Integration with Palantir Ontologies enables AI deployment in any country or field.

NVIDIA's Strategy: Vertical Integration × Horizontal Openness

NVIDIA is the world's first vertically integrated yet horizontally open company.

Why Vertical Integration Is Necessary

The essence of accelerated computing is accelerating applications. With Moore's Law reaching its limits, only domain-specific acceleration can achieve significant speed improvements and cost reductions.

Therefore, NVIDIA needs to understand:

- Applications

- Domains

- Algorithms

- Deployment scenarios (data centers, cloud, on-premises, edge, robotics)

Horizontal Openness

NVIDIA's technology can be integrated into any platform. By providing software and libraries and integrating them with partners' technologies, NVIDIA delivers accelerated computing worldwide.

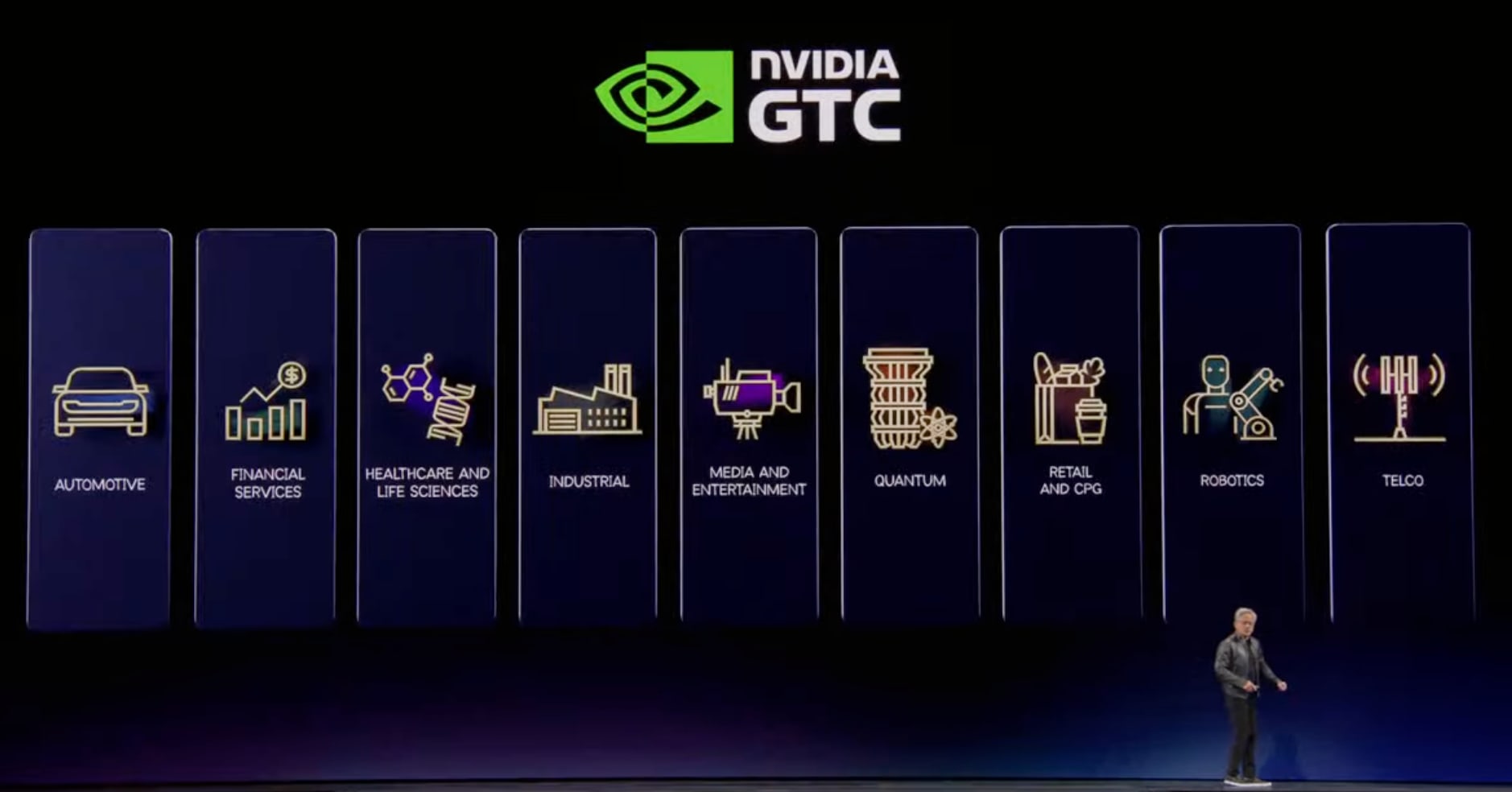

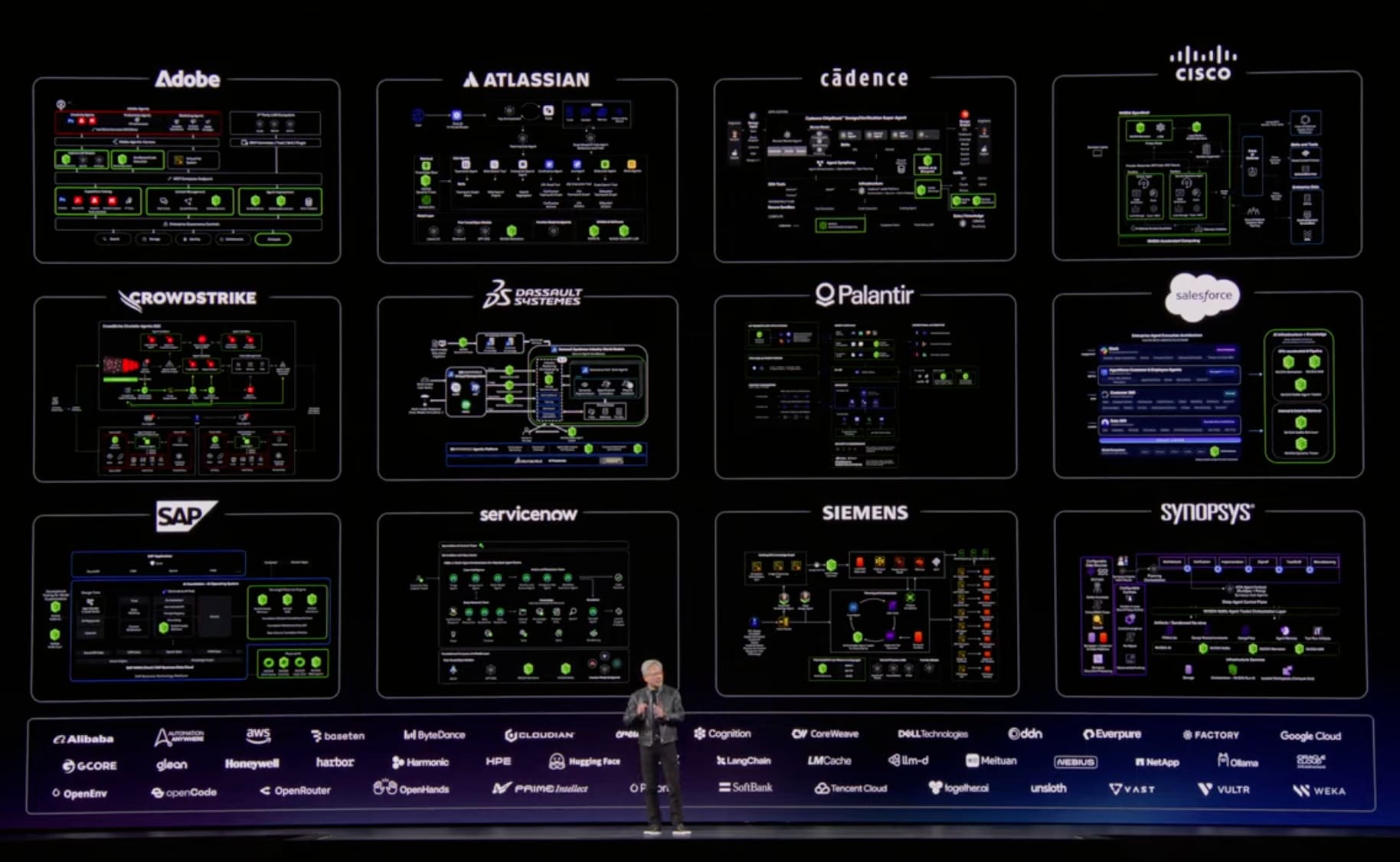

Industry-Specific Deployments

| Industry | Market Size | NVIDIA's Initiatives |
|---|---|---|
| Self-driving Cars | - | Wide reach and impact |
| Financial Services | - | Algorithmic trading shifting to deep learning/transformers. Largest share of GTC attendees |
| Healthcare | - | AI physics/biology for drug discovery, diagnostic support AI agents |
| Industrial | - | Construction of AI factories, chip plants, and computer plants |
| Media/Gaming | - | Real-time AI platforms, translation, broadcast support. Holoscan |
| Quantum Computing | - | Building cuQuantum GPU hybrid systems with 35 companies |
| Retail/CPG | $35 trillion | Supply chain, shopping systems, customer support AI agents |
| Robotics/Manufacturing | $50 trillion | 10 years of work, 110 robots on display |
| Telecommunications | $2 trillion | Base stations evolving into AI infrastructure platforms. Collaborating with Nokia and T-Mobile on Aerial (AI RAN) |

NVIDIA's Crown Jewel: CUDA X Libraries

NVIDIA is an algorithm company.

CUDA X libraries are the company's crown jewel, enabling computing platforms to solve industry-specific problems.

At this GTC, NVIDIA announced approximately 100 libraries and about 40 models.

Key Libraries

| Library | Purpose |
|---|---|
| cuDNN | Deep neural networks (driver of the AI big bang) |
| cuOpt | Decision optimization |
| cuLitho | Computational lithography |
| cuDSS | Direct sparse solver |
| cuEquivariance | Geometry-aware neural networks |
| Aerial | AI RAN |
| Warp | Differentiable physics |
| Parabricks | Genomics |

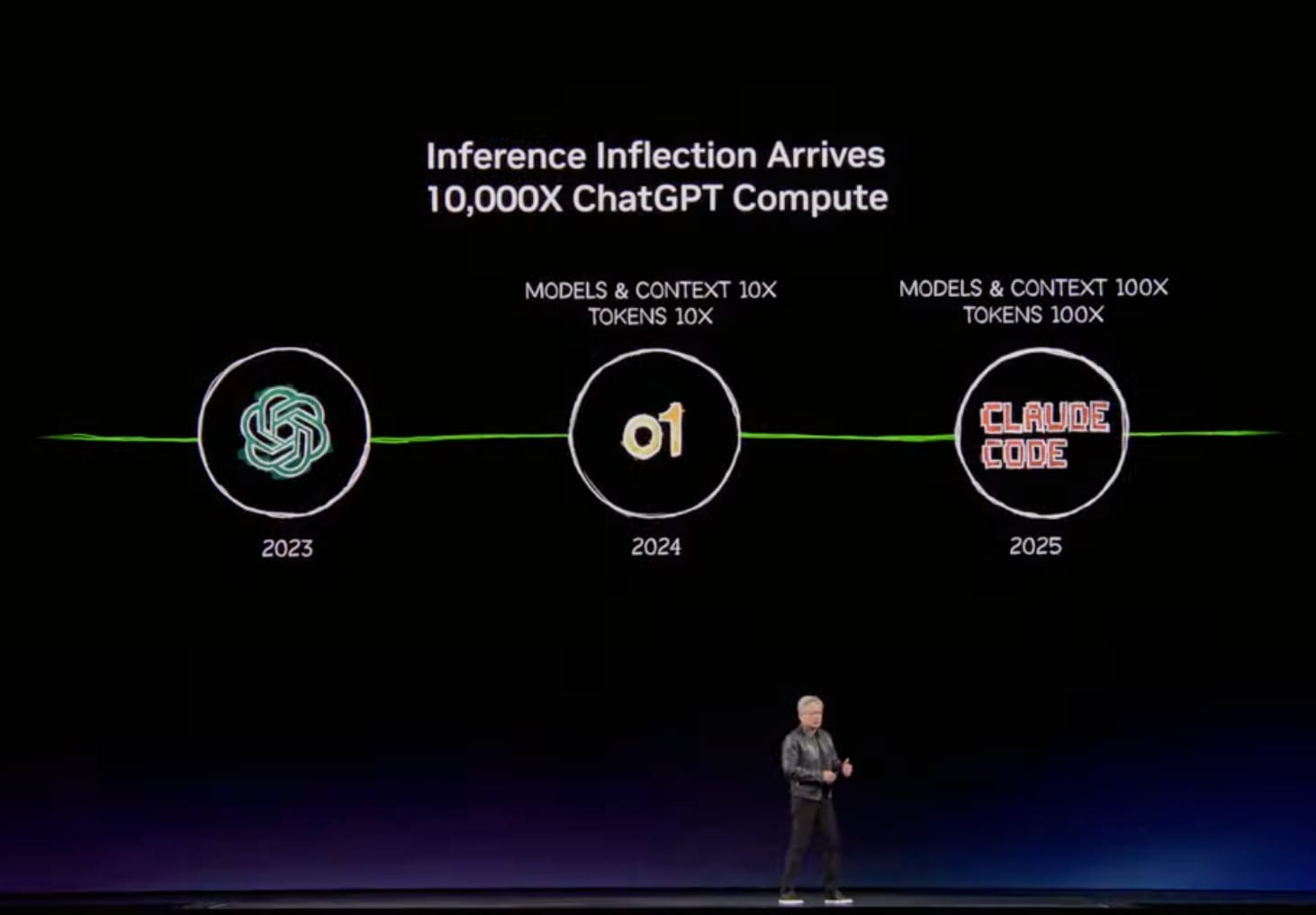

Three Inflection Points in AI

The past two years have seen three critical inflection points in AI:

- ChatGPT (late 2022-2023): Beginning of the generative AI era. Computing fundamentally shifted from "retrieval-based" to "generation-based"

- o1 (reasoning AI): Enabled reflection, planning, and problem decomposition. Made generative AI reliable and grounded in truth

- Claude Code (agentic AI): Can read files, write code, compile, test, and iterate. 100% of NVIDIA employees use Claude Code, Codex, or Cursor

As a result, AI has evolved from "perceptual AI" → "generative AI" → "reasoning AI" → "AI that actually does work."

Inference Inflection Point and Demand Explosion

As AI became productive, an inference inflection point arrived:

- Computational demand increased 1 million times in the past two years (10,000x increase in computation × 100x increase in usage)

- Venture investment in AI startups reached $150 billion (largest in human history)

- Investment scale shifted from millions to billions of dollars
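
The "1 million times" figure is simply the product of the two factors in the first bullet. The short check below reproduces it and, assuming the growth compounded evenly over the two years (something the keynote did not claim), derives the implied yearly factor.

```python
# Factors as quoted in the keynote summary
compute_growth = 10_000  # increase in computation
usage_growth = 100       # increase in usage

total_growth = compute_growth * usage_growth

# Assumption: growth compounds evenly across both years
years = 2
annualized = total_growth ** (1 / years)

print(f"total: {total_growth:,}x, implied per-year factor: {annualized:,.0f}x")
```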

At least $1 trillion in demand is expected by 2027, double last year's figure of $500 billion.

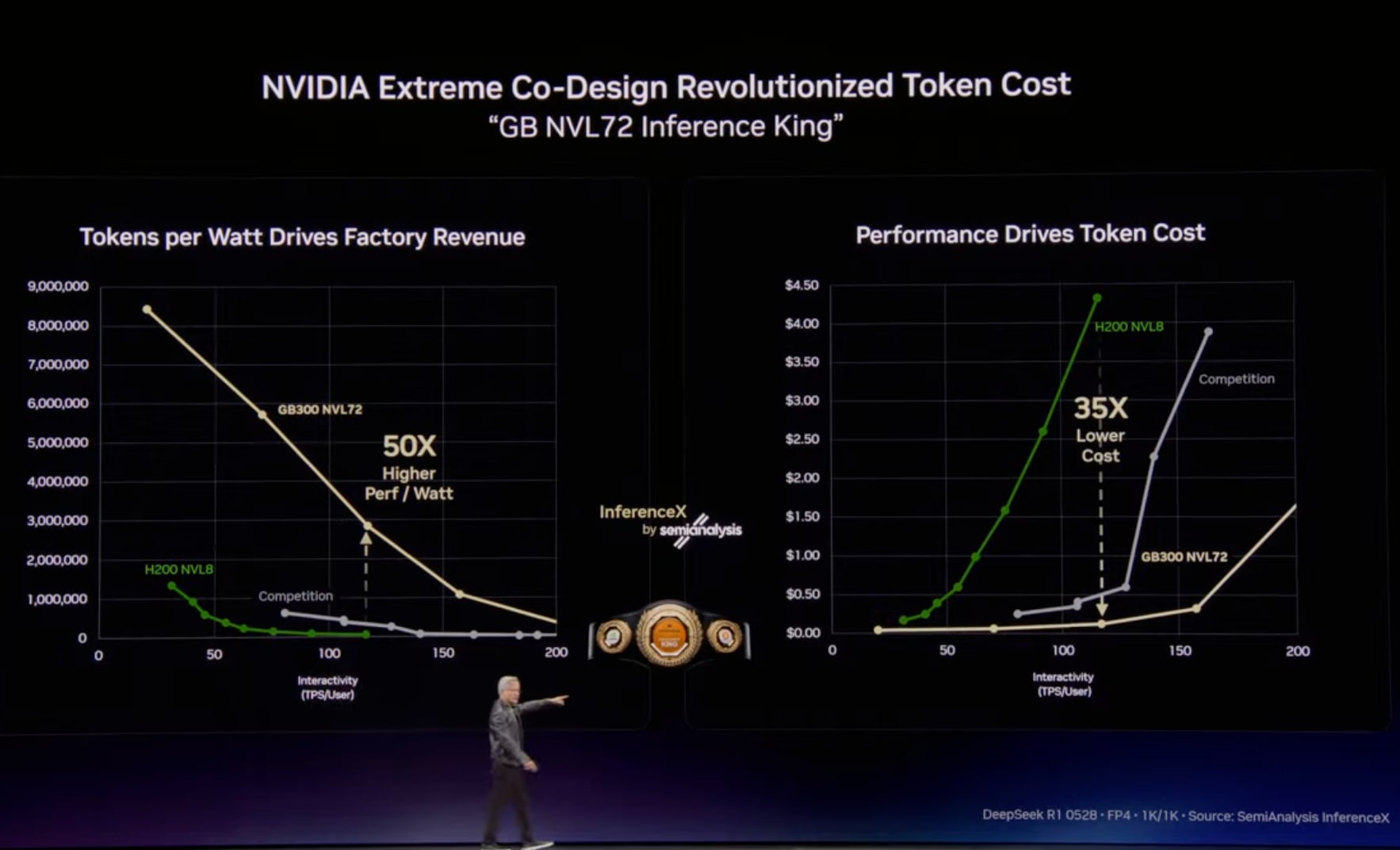

Token Factory Economics

Data centers have transformed from "data centers for files" to "factories generating tokens."

Two Axes of Token Economy

| Axis | Meaning | Business Impact |
|---|---|---|
| Throughput (vertical) | Tokens generated per watt | Production capacity under power constraints |
| Token speed (horizontal) | Inference speed | AI intelligence, amount of context that can be processed |

Price Tier Differentiation

| Tier | Characteristics | Price Example |
|---|---|---|
| Free | High throughput, low speed | $0 |
| Standard | Medium throughput, medium speed | $3 / million tokens |
| High | Low throughput, high speed | $6 / million tokens |
| Premium | Lowest throughput, highest speed | $45-$150 / million tokens |
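
The trade-off between throughput and token speed becomes clearer with a small revenue calculation. The sketch below uses the tier prices from the table, taking the low end of the Premium range. The tokens-per-second allocations are hypothetical numbers chosen only to illustrate how the tier mix changes revenue. They are not figures from the keynote.

```python
# USD per million tokens, from the price tier table (Premium: low end of $45-$150)
PRICE_PER_MILLION = {"free": 0.0, "standard": 3.0, "high": 6.0, "premium": 45.0}


def hourly_revenue(tokens_per_second):
    """Revenue per hour for a mapping of tier -> sustained tokens per second."""
    total = 0.0
    for tier, tps in tokens_per_second.items():
        millions_per_hour = tps * 3600 / 1_000_000
        total += millions_per_hour * PRICE_PER_MILLION[tier]
    return total


# Two hypothetical ways to spend the same power budget:
# many slow, cheap tokens versus fewer but faster, pricier tokens
throughput_heavy = {"free": 4_000_000, "standard": 2_000_000, "high": 0, "premium": 0}
mixed = {"free": 1_000_000, "standard": 1_000_000, "high": 500_000, "premium": 100_000}

print(f"throughput-heavy: ${hourly_revenue(throughput_heavy):,.0f} per hour")
print(f"mixed tiers:      ${hourly_revenue(mixed):,.0f} per hour")
```

Under these assumed numbers the mixed allocation earns more per hour while generating fewer total tokens, which is why both axes matter.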

Going forward, CEOs worldwide will study token factory efficiency as it directly impacts revenue.

Grace Blackwell and Vera Rubin Performance

Grace Blackwell NVLink 72

- Moore's Law would predict about a 1.5x performance improvement, but NVIDIA achieved 35-50x

- According to Dylan Patel of SemiAnalysis, "Jensen is sandbagging. It's actually 50x"

- Lowest cost per token in the world, "basically unbeatable"

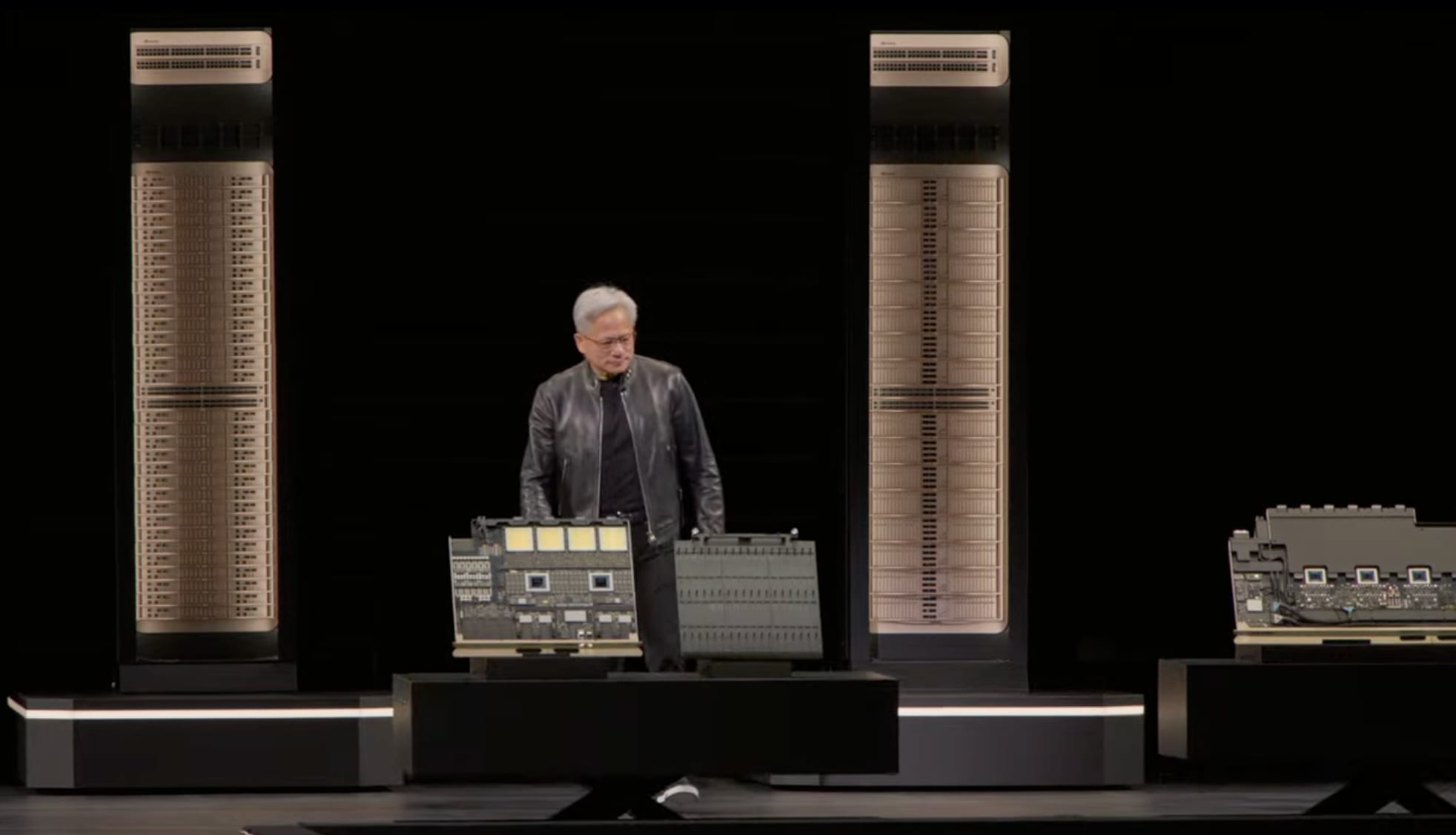

Vera Rubin Platform

- 7 chips, 5 rack-scale computers

- 3.6 exaflops, 260 TB/s NVLink bandwidth

- 40 million times compute improvement over 10 years

- 100% liquid-cooled, using 45°C warm water

- Installation time: reduced from 2 days to 2 hours

Revenue Impact (for a 1GW data center)

| Platform | Revenue relative to Hopper |
|---|---|
| Hopper | 1x |
| Grace Blackwell | 5x |
| Vera Rubin | 25x (35x with Groq integration) |

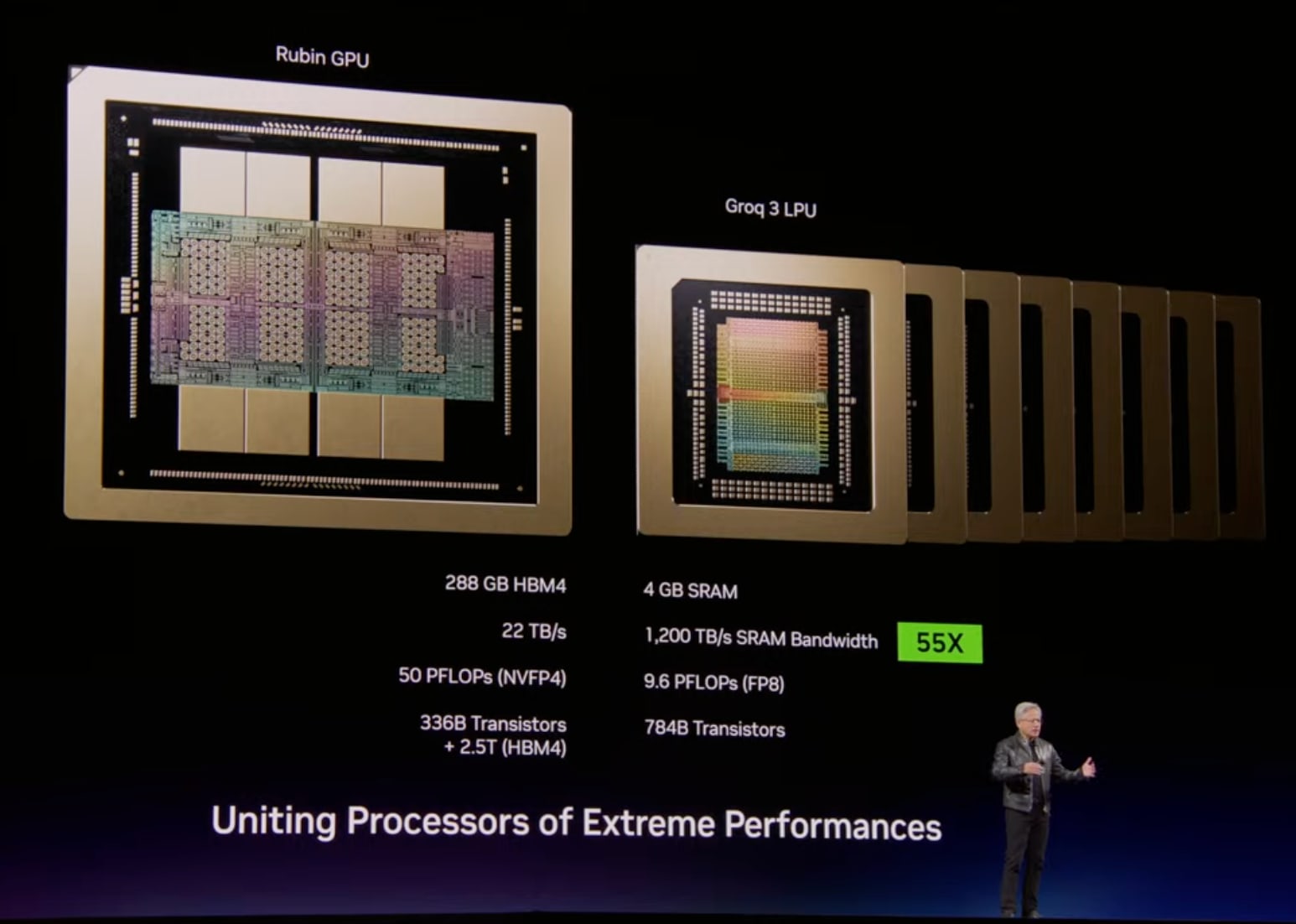

Groq Integration

NVIDIA acquired the Groq team and licensed their technology.

They integrated two extreme processors:

| | Vera Rubin | Groq |
|---|---|---|
| Design philosophy | High throughput | Low latency |
| Memory | 288 GB/chip | 500 MB/chip (large amount of SRAM) |
| Use | Prefill, attention | Decode, token generation |

Software called Dynamo ties the two processors together and optimizes the inference pipeline, achieving both high throughput and low latency.
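
The idea of splitting inference into phases can be sketched as follows. This is a toy illustration, not Dynamo's API: the `Worker` class and `serve` function are hypothetical, the workers only record which phase they were asked to run, and real systems must also move the KV cache between processors.

```python
from dataclasses import dataclass, field


@dataclass
class Worker:
    """Hypothetical stand-in for a pool of processors."""
    name: str
    handled: list = field(default_factory=list)

    def run(self, phase, request_id):
        # A real worker would execute model kernels; this only records the call
        self.handled.append((phase, request_id))


def serve(request_id, prompt_tokens, max_new_tokens, prefill_worker, decode_worker):
    """Run prefill once on one worker, then every decode step on the other."""
    prefill_worker.run("prefill", request_id)  # whole prompt in one pass
    for _ in range(max_new_tokens):            # one new token per step
        decode_worker.run("decode", request_id)
    return prompt_tokens + max_new_tokens      # final sequence length


throughput_pool = Worker("throughput-optimized")  # role played by Vera Rubin
latency_pool = Worker("latency-optimized")        # role played by Groq

total = serve("req-1", prompt_tokens=1000, max_new_tokens=4,
              prefill_worker=throughput_pool, decode_worker=latency_pool)
print(total, len(throughput_pool.handled), len(latency_pool.handled))
```

Prefill is a single large, parallel pass over the prompt, so it suits a high-throughput processor. Decode is a long sequence of small dependent steps, so it benefits from low latency.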

Roadmap

| Generation | GPU | CPU | LPU | Scale-up |
|---|---|---|---|---|
| Blackwell | Blackwell | Grace | - | NVLink 72 |
| Rubin | Rubin | Vera | LP 30 | NVLink 72 → NVLink 576 |
| Rubin Ultra | Rubin Ultra | Vera | LP 35 | Kyber (NVLink 144) |
| Feynman | Next-gen | Rosa | LP 40 | Kyber + CPO |

NVIDIA Platform

A digital twin platform that optimizes AI factory design and operation:

- DS World: Virtual design of AI factories on Omniverse

- DS Flex: Dynamic power management with the grid

- DS MaxQ: Dynamic maximization of token throughput

Partners: PTC, Dassault Systemes, Jacobs, Siemens, Cadence, Procore, etc.

AI factories have room for roughly a 2x optimization, and at this scale a factor of two is enormous.

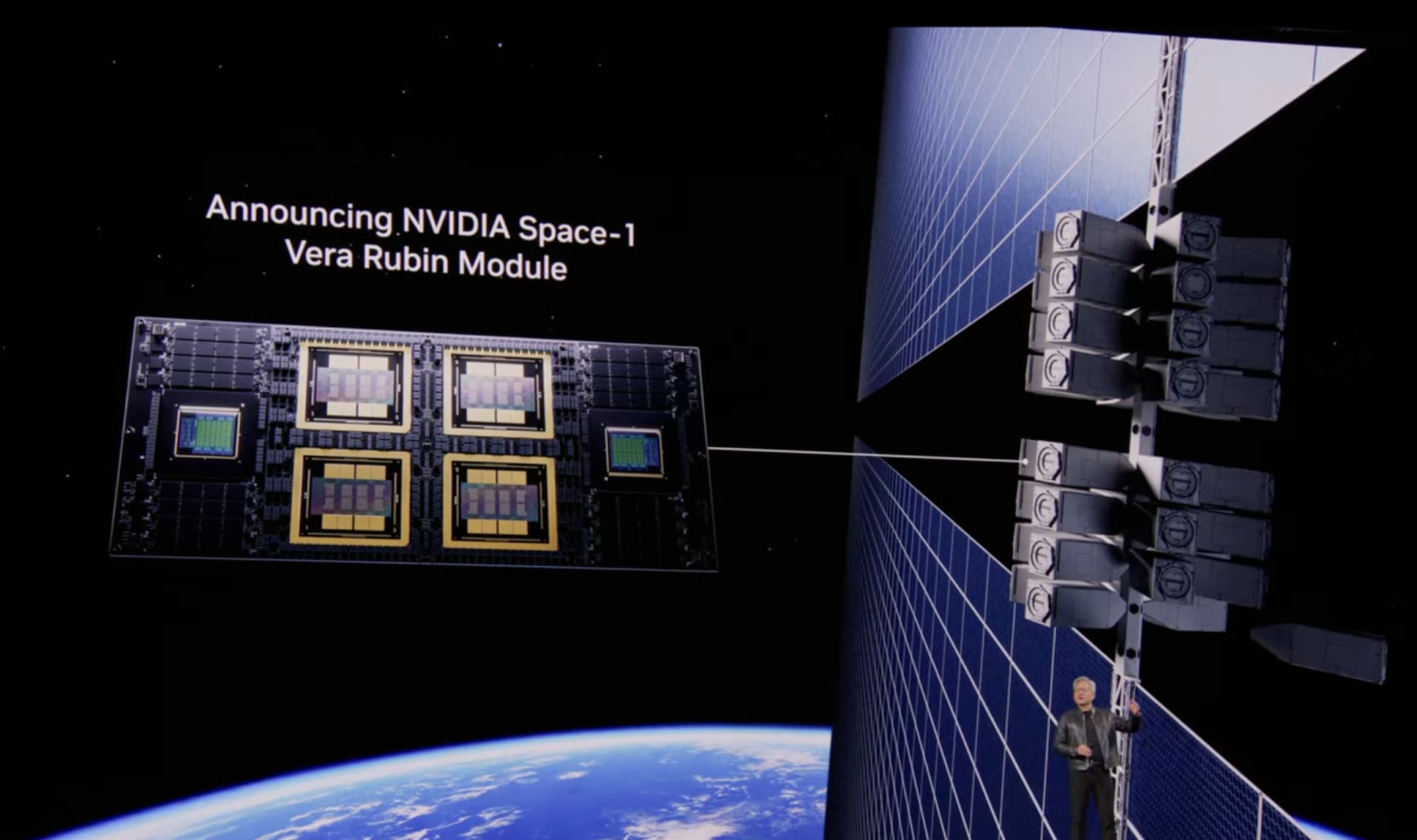

Space Deployment

- Thor: Radiation-qualified, already mounted on satellites

- Vera Rubin Space-1: Computer for space data centers (under development)

- Challenge: In space, cooling relies solely on radiation as there's no conduction or convection
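
The cooling challenge can be estimated with the Stefan-Boltzmann law, under which a surface radiates power in proportion to the fourth power of its temperature. The sketch below estimates the radiator area needed per kilowatt. The emissivity of 0.9 and the radiator temperature of 318.15 K (45°C) are assumptions for illustration, and absorbed sunlight is ignored, so the result is a lower bound.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area(heat_watts, temp_kelvin, emissivity=0.9):
    """Area (m^2) needed to radiate heat_watts to deep space from one surface.

    Ignores absorbed sunlight and the cosmic background, so real radiators
    need more area than this estimate.
    """
    flux = emissivity * STEFAN_BOLTZMANN * temp_kelvin ** 4  # W per m^2
    return heat_watts / flux


# Assumed values: 1 kW of heat, radiator surface at 45 degrees Celsius
area = radiator_area(heat_watts=1000.0, temp_kelvin=318.15)
print(f"{area:.2f} m^2 of radiator per kW")
```

Under these assumptions each kilowatt needs close to 2 square meters of radiator, which suggests why heat rejection is a central constraint for space data centers.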

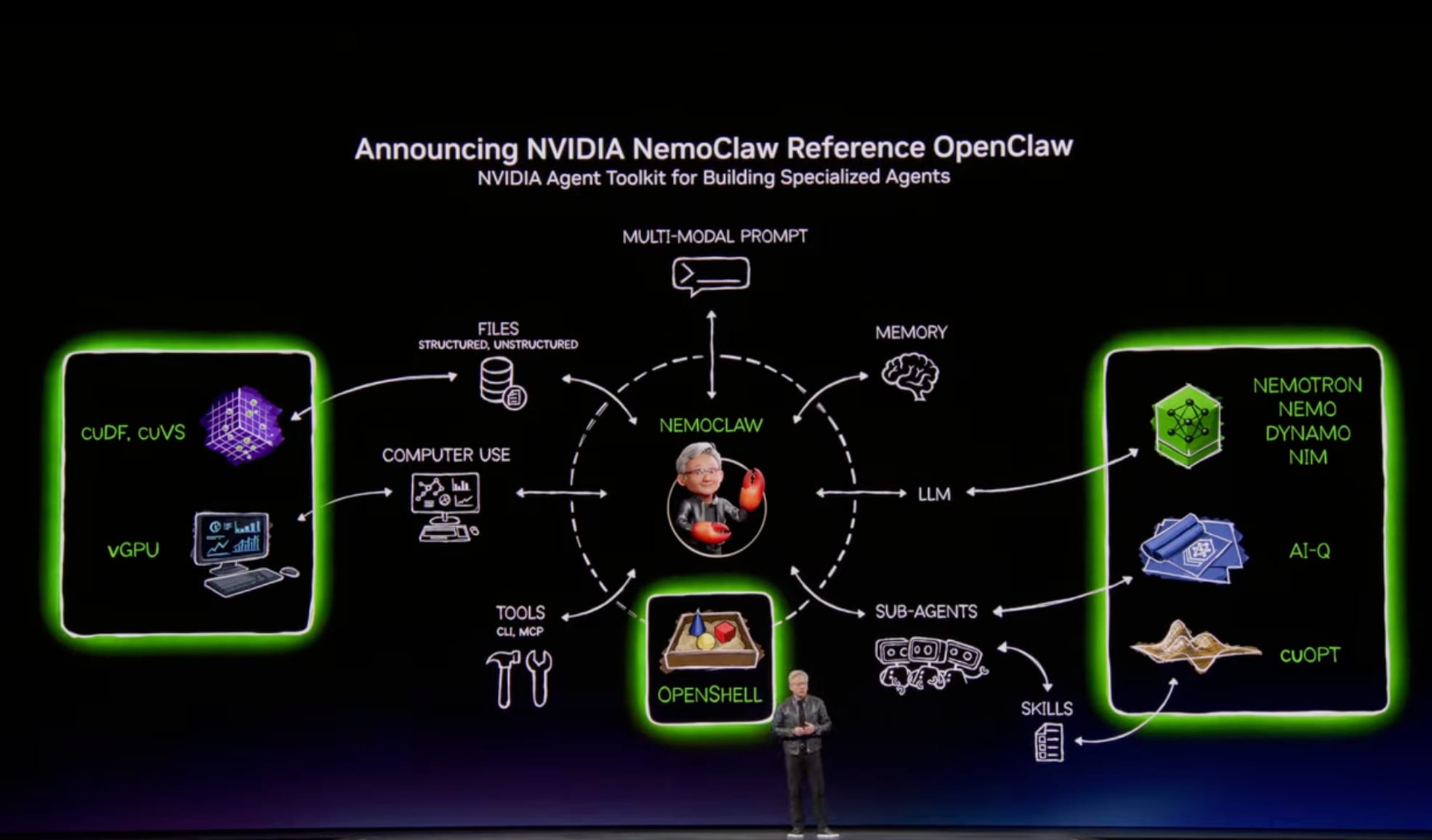

OpenClaw

An open-source project developed by Peter Steinberger:

- Most popular open-source project in human history (in just weeks it surpassed what Linux achieved in 30 years)

- Download and build AI agents with a single command

- Andrej Karpathy's "Research" function: assign a task, go to sleep, wake up to 100 automatically run experiments

NVIDIA announced support for OpenClaw.

OpenClaw is an operating system for AI agents, featuring resource management, scheduling, sub-agent invocation, and multimodal I/O.

Just as Windows enabled personal computers, OpenClaw has enabled personal agents.

Like Linux, HTTP/HTML, and Kubernetes, it provided what the industry needed when it needed it. Every company needs an "OpenClaw strategy."

Enterprise IT Transformation

Every SaaS company is becoming a GaaS (Agentic as a Service) company.

Addressing Security Challenges

Security is essential as agents can access confidential information, execute code, and communicate externally.

NVIDIA developed NeMo Claw:

- OpenShell (security integration)

- Policy engine integration

- Network guardrails

- Privacy router
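
As a rough illustration of what a policy engine with network guardrails might check before an agent acts, consider the sketch below. It is not NeMo Claw's or OpenShell's API. The policy format, tool names, and host names are all hypothetical.

```python
from urllib.parse import urlparse

# Hypothetical policy: which tools an agent may call and which hosts it may reach
POLICY = {
    "allowed_tools": {"read_file", "run_tests", "http_get"},
    "allowed_hosts": {"api.internal.example.com"},
}


def is_allowed(action, policy=POLICY):
    """Return True only if the tool is allowlisted and any URL targets an allowed host."""
    if action["tool"] not in policy["allowed_tools"]:
        return False
    url = action.get("url")
    if url is not None:
        host = urlparse(url).hostname
        return host in policy["allowed_hosts"]
    return True


print(is_allowed({"tool": "read_file"}))
print(is_allowed({"tool": "http_get", "url": "https://evil.example.org/upload"}))
```

The sketch denies by default: anything not explicitly allowlisted is rejected, a common starting point when agents can execute code and communicate externally.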

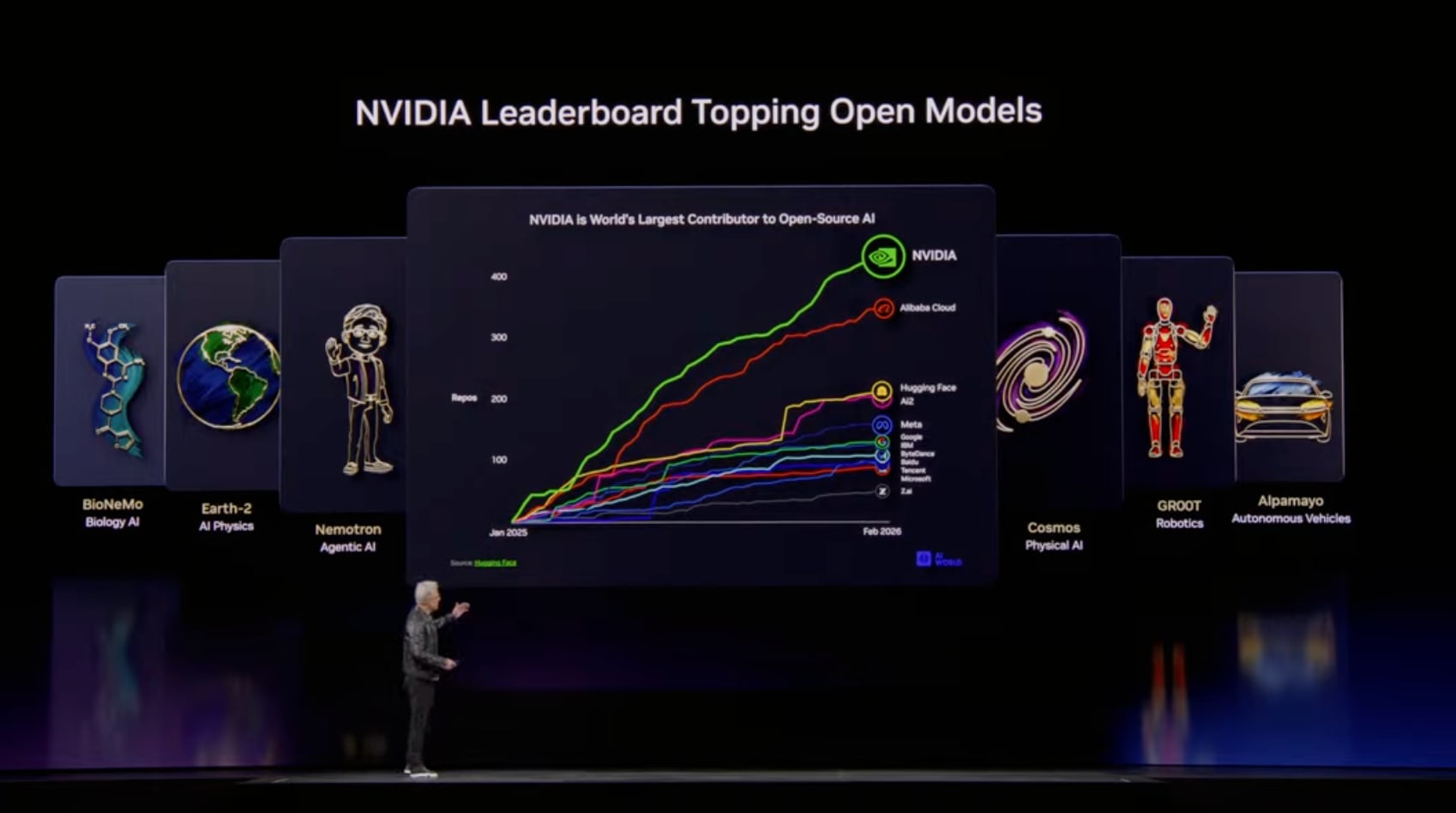

NVIDIA Open Models

Six model families at the frontier of all domains:

| Model | Purpose |
|---|---|
| Nemotron | Language, vision, RAG, voice |
| Cosmos | Physical AI, world generation |
| Alpamayo | Self-driving (world's first thinking and reasoning model) |
| GR00T | General-purpose robotics |
| BioNeMo | Biology, molecular design |
| Earth-2 | Weather and climate prediction |

Nemotron-3 Ultra was presented as the world's best base model, and it supports Sovereign AI development in various countries.

Nemotron Coalition

Cursor, LangChain, Mistral, Perplexity, Sarvam, and many others are participating to jointly develop Nemotron-4.

The Era of Token Budgets

In the future, all engineers will have an annual token budget. In addition to their base salary, they'll receive about half that amount in tokens. In Silicon Valley, "how many tokens come with the job" has become a hiring condition.
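
To get a feel for the scale, the sketch below converts such a budget into a token count. The $200,000 salary is a hypothetical example, and $3 per million tokens is the Standard tier price example from the token factory section.

```python
def annual_token_budget(base_salary_usd, price_per_million_usd, budget_ratio=0.5):
    """Tokens purchasable per year when the budget is budget_ratio of base salary."""
    budget_usd = base_salary_usd * budget_ratio
    return budget_usd / price_per_million_usd * 1_000_000


# Hypothetical salary; the ratio of one half follows the keynote summary
tokens = annual_token_budget(base_salary_usd=200_000, price_per_million_usd=3.0)
print(f"about {tokens / 1e9:.1f} billion tokens per year")
```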

Physical AI

Self-driving Cars' "ChatGPT Moment"

It has been proven that self-driving works reliably.

New partners: BYD, Hyundai, Nissan, Geely (18 million vehicles annually in total)

Partnering with Uber to deploy robotaxis in multiple cities.

Alpamayo: World's first thinking and reasoning self-driving AI. The car can explain its actions.

Robot Development Ecosystem

| Tool | Purpose |
|---|---|
| Isaac Lab | Training and evaluation |
| Newton | Differentiable physics simulation |
| Cosmos | Neural simulation |
| GR00T | Robot reasoning and behavior generation |

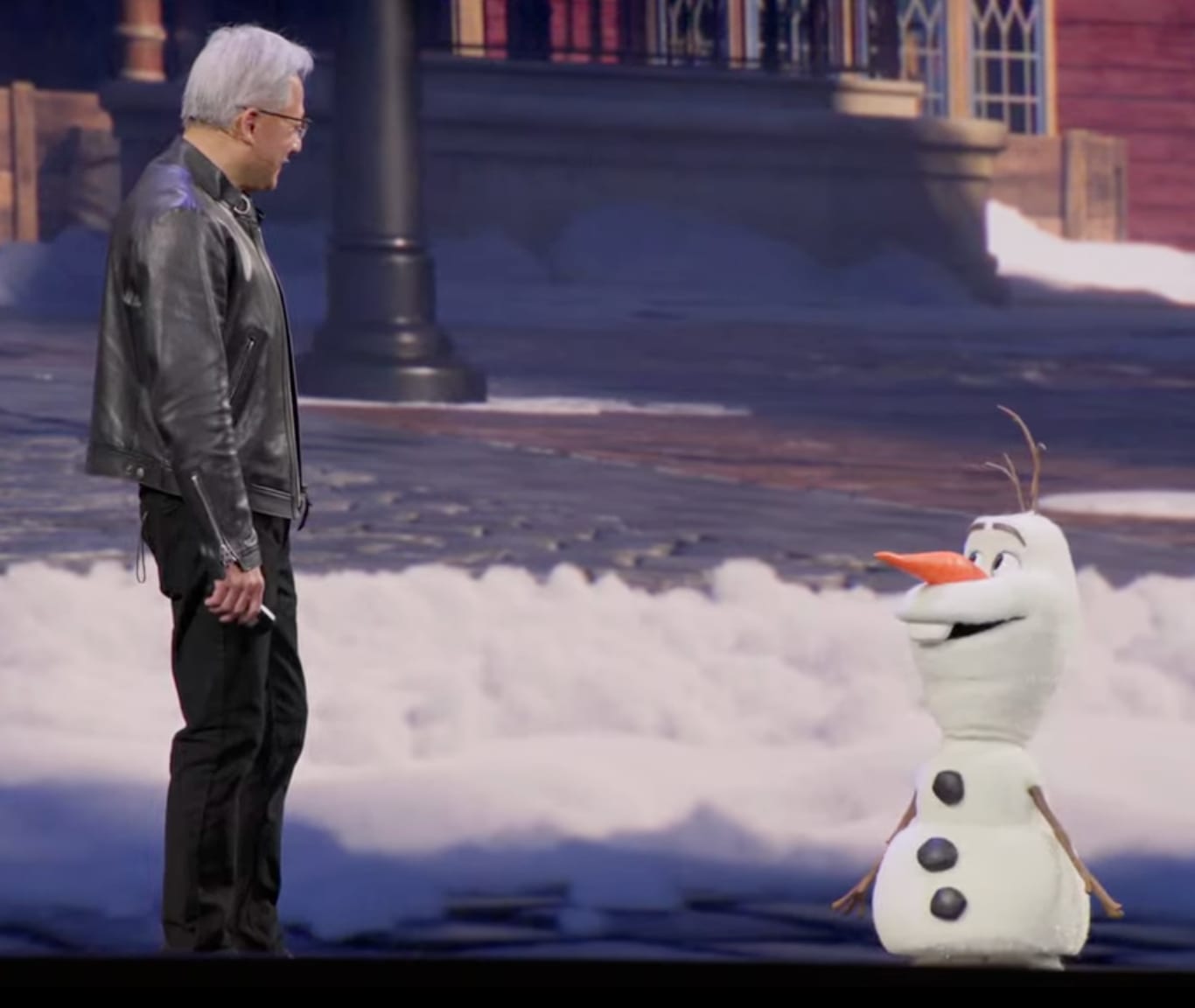

Disney Collaboration

Co-developed with Disney Research. An Olaf robot appeared, learning to walk in Omniverse and adapting to the physical world with the Newton solver.

In the future, character robots will walk around Disney parks.

Summary

This keynote was packed with content, and I've summarized some of the key points that caught my attention. AI has entered the era of reasoning and agents, with tokens becoming a new currency and value. I strongly felt that NVIDIA is positioning itself to provide the foundation for this across hardware, software, and the entire ecosystem.

AI Transformation Points

- Evolution from ChatGPT → o1 → Claude Code has shifted AI's purpose from "generation" → "reasoning" → "execution"

- Computational demand increased 1 million times in the past 2 years, with $1 trillion in demand expected by 2027

- 100% of NVIDIA employees use Claude Code / Codex / Cursor

Token Factory

- Data centers have shifted from "file storage" to "token production factories"

- We've entered an era where CEOs worldwide will pursue token efficiency

- In the future, engineers will have annual token budgets, which will become a hiring condition

Vera Rubin Platform

- Grace Blackwell NVLink 72 achieved a 35-50x performance improvement (vs. 1.5x expected from Moore's Law)

- 40 million times compute improvement over 10 years

- Groq integration combines high throughput with low latency

OpenClaw Revolution

- OS for Agent AI (with impact equivalent to Linux, HTML, Kubernetes)

- Most popular open-source project in human history

- All SaaS companies will become GaaS (Agentic as a Service) companies

Physical AI

- The "ChatGPT moment" has arrived for self-driving

- New partners: BYD, Hyundai, Nissan, Geely (18 million vehicles annually)

- Co-developed Olaf robot with Disney for future Disney parks