A blind majority vote with three LLMs! I tested the Claude Sonnet 4.6 translation pipeline under construction

This page has been translated by machine translation. View original

DevelopersIO has been offering an automatic article translation feature powered by Amazon Bedrock and Claude since the end of 2025.

While the previous pipeline was able to maintain Markdown structure during translation to some extent, this article introduces how we adopted Claude's latest model Sonnet 4.6 to further improve translation quality and use the API more efficiently.

Architecture

Here is the architecture of the translation pipeline being constructed.

| Component | Technology |

|---|---|

| Translation Engine | Amazon Bedrock (Claude Sonnet 4.6, Structured Outputs) |

| Evaluation Engine | Amazon Bedrock (Nova Pro, gpt-oss-120b) + Gemini 3.1 Pro |

| Execution Environment | AWS Lambda (Python 3.12 / arm64) |

| Data Store | Amazon DynamoDB |

| Deployment | AWS SAM |

Using Structured Outputs for translation+summary+recommendation in one call

We wanted to get additional information beyond translation, such as summaries that help with search, in a single LLM execution. Bedrock's Structured Outputs proved to be the optimal solution for this.

Schema Design

Using Bedrock's output_config.format.json_schema, we return 9 fields in a single API call.

{

"type": "object",

"properties": {

"title": { "type": "string" },

"excerpt": { "type": "string" },

"content": { "type": "string" },

"summary_en": { "type": "string" },

"rag_en_s": { "type": "string" },

"rag_en_m": { "type": "string" },

"rag_en_l": { "type": "string" },

"recommendation": { "type": "string" },

"confidence": { "type": "integer" }

},

"required": ["title", "excerpt", "content", "summary_en",

"rag_en_s", "rag_en_m", "rag_en_l", "recommendation",

"confidence"],

"additionalProperties": false

}

| Field | Purpose |

|---|---|

| title, excerpt, content | Translation results (title, excerpt, Markdown body) |

| summary_en | English summary (3-line bullet points) |

| rag_en_s / rag_en_m / rag_en_l | For search preview / vector search / comprehensive technical summary |

| recommendation | Recommendation text (2-3 sentences) |

| confidence | AI self-evaluation (0-100). For anomaly detection |

Using additionalProperties: false prevents the inclusion of fields outside the schema.

Bedrock Invocation

body = {

"anthropic_version": "bedrock-2023-05-31",

"max_tokens": 60000,

"system": [{"type": "text", "text": system_prompt,

"cache_control": {"type": "ephemeral"}}],

"messages": [{"role": "user", "content": user_prompt}],

"output_config": {"format": {"type": "json_schema", "schema": SCHEMA}}

}

response = bedrock.invoke_model(modelId="us.anthropic.claude-sonnet-4-6", body=json.dumps(body))

Key Points of the Translation Prompt

The translation prompt is placed in the system prompt. Since the full text is lengthy, here are the key rule instructions:

- Preserve all Markdown formatting symbols (#, *, _, [], (), etc.) in their exact positions

- Never edit, modify, or translate URLs. Keep all URLs exactly as they appear in the original

- Preserve the line break structure of the original document

- Do not start the content translation with a heading

We explicitly instruct to maintain the Markdown structure. The prohibition against URL modification prevents the translation model from translating Japanese paths within URLs.

For summary fields, we specify word count and content granularity for each purpose:

- summary_en: 3 lines in English, max 50 words per line, Markdown bullet format (- )

- rag_en_s: ~200 words, 1 paragraph. First 80 words MUST contain what the article covers and key outcome

- rag_en_m: ~150 words, dense factual summary. Include technologies used, problem solved, approach taken

Execution Results

We verified with a 13,479-character technical article (8 code blocks, Mermaid diagrams, multiple tables).

| Item | Value |

|---|---|

| Input tokens | 7,704 |

| Output tokens | 7,435 |

| Processing time | 109 seconds |

| Memory usage | 90 / 256 MB |

| JSON parsing errors | 0 instances |

Thanks to Structured Outputs, there were zero parsing errors even with JSON containing long Markdown text. Without worrying about backticks or escape characters breaking JSON, subsequent processing becomes simpler.

Mechanical QA: 4-item quality check

We automatically check the translation results against these 4 items:

| Check | Logic | Pass condition |

|---|---|---|

| Heading count | Compare number of # lines between original and translation |

Match |

| Code block retention | Compare count of ``` |

Match |

| Line ratio | Translation/original line count ratio | 0.8~1.5 |

| URL consistency | Compare URL sets between original and translation | Exact match |

We normalize line breaks (\r\n → \n) as preprocessing.

In addition, we use the previously mentioned confidence score (0-100) for anomaly detection. Articles with low confidence are subject to human review.

The pilot article passed all items with a confidence of 93.

LLM-as-a-Judge: 3-model blind majority vote for quality verification

Why LLM-as-a-Judge?

While mechanical QA can detect structural issues like "missing headings" or "changed URLs," it cannot measure "naturalness of translation" or "appropriateness of technical term choices." However, human evaluation is costly and time-consuming even at a scale of 50 articles.

Therefore, we adopted LLM-as-a-Judge, having multiple LLMs score translation quality and determine by majority vote.

Selection of Evaluation Models

We used three models as evaluators. Nova Pro and gpt-oss-120b are available on Amazon Bedrock, while Gemini 3.1 Pro was manually executed outside of Bedrock. Each model's characteristics will be introduced in detail in the evaluation results section.

Design for Bias Elimination

LLM-as-a-Judge has two strong biases:

Version Number Bias (Anchoring): When including "Sonnet 3.7" and "Sonnet 4.6" in the prompt, LLMs may be influenced by their prior knowledge that "newer versions must be better."

Position Bias: The presentation order of translations (A/B) tends to affect judgment.

To eliminate these, we implemented the following measures:

- Model Name Masking: The prompt only mentions "Translation A" and "Translation B" without any model names or version information

- A/B Order Randomization: Randomly swap A/B assignments for each article, with script-side automatic reversal of results after evaluation

Evaluation Prompt

The evaluation prompt was written in English. We prioritized evaluation accuracy and stability, as evaluation models tend to be more proficient in English.

Here is the full text of the prompt we actually used:

You are an expert bilingual (Japanese-English) technical translation evaluator.

Compare two machine translations of the same Japanese technical blog article.

You MUST choose a winner — ties are NOT allowed.

## Evaluation Criteria (1-10 scale)

1. Accuracy — faithful meaning, correct technical terms, preserved numbers/data

2. Naturalness — reads like native English engineer writing, not "translated Japanese"

3. Technical Precision — AWS/API/code terms handled correctly

4. Structural Fidelity — Markdown structure (headings, tables, code blocks) preserved

5. Readability — easy to follow, logical flow

## Response Format

Return ONLY valid JSON (no markdown fences):

{

"accuracy_a": <int>, "accuracy_b": <int>,

"naturalness_a": <int>, "naturalness_b": <int>,

"technical_a": <int>, "technical_b": <int>,

"structural_a": <int>, "structural_b": <int>,

"readability_a": <int>, "readability_b": <int>,

"winner": "A_much_better" | "A_slightly_better" | "B_slightly_better" | "B_much_better",

"summary": "<one paragraph explaining the key differences that determined the winner>"

}

---

## Original Japanese

### Title

{ja_title}

### Excerpt

{ja_excerpt}

### Body

{ja_content}

---

## Translation A

### Title

{a_title}

### Excerpt

{a_excerpt}

### Body

{a_content}

---

## Translation B

### Title

{b_title}

### Excerpt

{b_excerpt}

### Body

{b_content}

We have the models score each translation on 5 axes (Accuracy, Naturalness, Technical Precision, Structural Fidelity, Readability) on a 1-10 scale, and return the overall winner and a summary of differences in JSON.

Evaluation Results

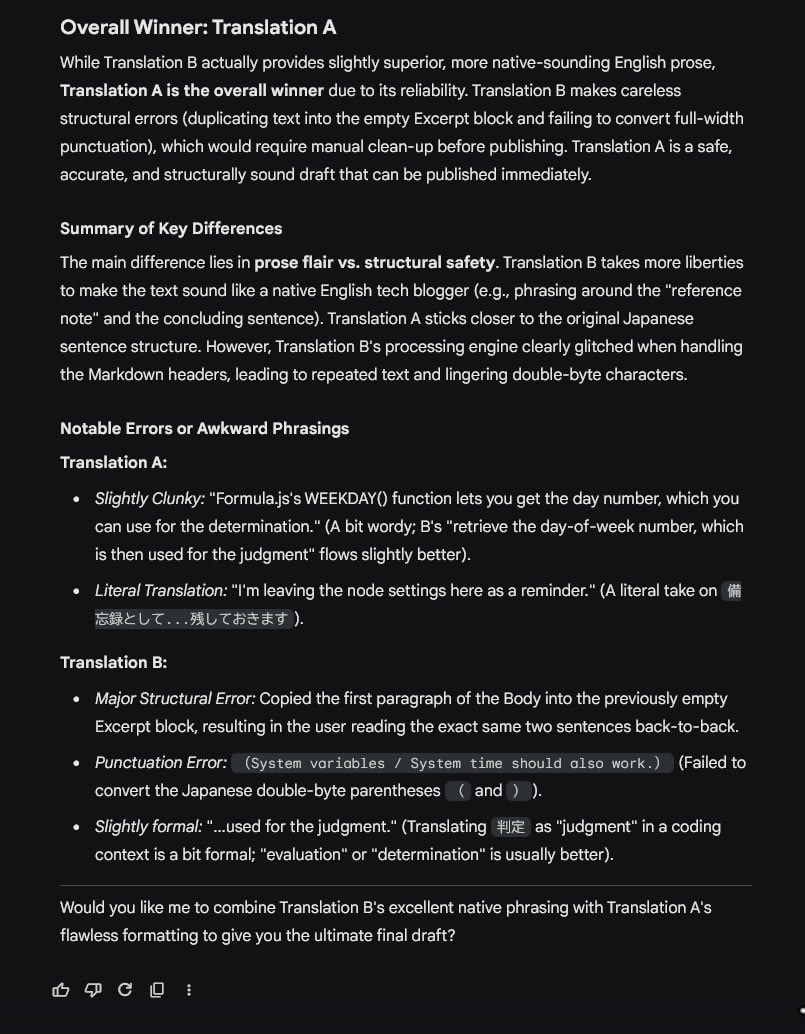

For quality verification, we compared A/B between Sonnet 4.6 translations and existing translation pipeline results.

Nova Pro + gpt-oss-120b Evaluation

In the initial evaluation, we used a schema that allowed for Ties, but Nova Pro judged 72% of articles as Ties, making the evaluation ineffective. LLMs seem to have a bias towards choosing Tie as the safest option when differences are slight.

We then switched to Forced Choice (binary choice) with a 4-level evaluation (A_much_better / A_slightly_better / B_slightly_better / B_much_better).

| Old Translation Wins | Sonnet 4.6 Wins | Split (Divergent Opinions) | |

|---|---|---|---|

| Overall Judgment | 2 | 30 | 19 |

Breakdown by model:

| Old Translation Wins | Sonnet 4.6 Wins | |

|---|---|---|

| Nova Pro (51 articles) | 2 | 49 |

| gpt-oss-120b (50 articles) | 21 | 29 |

With Forced Choice, Nova Pro now provided clear judgments for all articles and overwhelmingly favored Sonnet 4.6. gpt-oss-120b also favored Sonnet 4.6 but selected the old translation for 21 articles.

Analysis of Why gpt-oss-120b Chose Old Translations

Analyzing the summaries of the 21 cases where gpt-oss-120b chose the old translation revealed clear patterns:

| Reason | Count | Countermeasure |

|---|---|---|

| Empty Excerpt hallucination (copying body text when original was empty) | 7 | Add "return empty string if empty" to prompt / exclude in preprocessing |

| Structural fidelity (slight differences in heading hierarchy) | 9 | Strengthen heading structure preservation in prompt |

| Faithfulness to original (3.7 was closer to literal translation) | 4 | Adjust balance between free translation and faithfulness |

The hallucination of empty Excerpts in particular was a useful discovery as a concrete improvement point, as adding one line to the prompt could potentially improve 7 cases.

Gemini 3.1 Pro Detailed Evaluation

We had AI assistant Kiro (Opus 4.6) select 8 samples with the following criteria, then entered the generated evaluation prompts into web browser version of Gemini:

- Include longest (33,330 characters) and shortest (415 characters) articles

- Include articles that failed mechanical QA

- Include non-technical articles (company joining blogs) as well as technical ones

- Include contentious articles where Nova and gpt-oss had split decisions

| Result | Count |

|---|---|

| Sonnet 4.6 Wins | 6 |

| Tie | 1 |

| Old Translation Wins | 1 |

Strengths of Sonnet 4.6 Identified by Gemini

Free Translation Ability: It converted Japanese abbreviations and colloquial expressions into phrases that make sense to English speakers.

| Original | Sonnet 4.6 | Old Translation |

|---|---|---|

| LTの資料 | lightning talk slides ✅ | LT materials ❌ |

| ポン出しのたたき台 | rough first draft ✅ | a starting point △ |

| 差分として検出され | detected as a diff ✅ | unintended changes being detected △ |

| 突然ですが、クイズです | Let me start with a quick quiz ✅ | Suddenly, here's a quiz ❌ |

"LT" is a common abbreviation in Japanese tech communities but not understood in English-speaking regions. Sonnet 4.6 expanded this to "lightning talk slides," which Gemini highly rated. For "detected as a diff," Gemini commented that it "reads like it was translated by someone who actually writes Terraform."

Structure Preservation: Sonnet 4.6 accurately preserved custom Markdown blocks like :::message and :::details. In the old translation, there were cases where :::message blocks were completely removed.

Text Restructuring: It went beyond mere translation by restructuring long Japanese sentences into English conventions like "First... Second... Third..." to match English writing norms.

Cases Needing Improvement

The NocoDB article (1,589 characters) where the old translation won in Gemini 3.1 Pro's detailed evaluation revealed specific improvement points:

- Empty Field Hallucination: Even though the original Excerpt was empty, Sonnet 4.6 copied the beginning of the body text to fill the Excerpt

- Full-width Bracket Retention: It didn't convert

()to half-width()

Empty field hallucination was also confirmed in 7 cases in the batch evaluation and can be addressed by either stating "return an empty string if empty" in the prompt or handling it in code preprocessing. Converting full-width brackets is a mechanical issue that should be resolved with str.replace rather than being left to the LLM.

Prompt Tuning Implementation and Re-evaluation

Based on these evaluation results, we added the following 4 items to the translation prompt:

- If a field (title, excerpt) is empty in the source, return an empty string. Never fabricate content

- Translate image alt-text (e.g.  → )

- Adapt section headings to natural English conventions rather than translating word-for-word

- Proofread your output for typos and grammatical errors before responding

After deployment, we re-translated the NocoDB article that lost to the old translation and the FSx article that was a Tie, then re-evaluated them using the same evaluation script.

| Article | Before Tuning | After Tuning |

|---|---|---|

| NocoDB (1,589 characters) | Old Translation Wins | Sonnet 4.6 Wins |

| FSx (3,933 characters) | Tie | Sonnet 4.6 Wins (both Nova Pro and gpt-oss-120b) |

Specific improvements for the NocoDB article:

| Item | Before Tuning | After Tuning |

|---|---|---|

| Excerpt | Hallucination by copying body beginning ❌ | Returns empty string ✅ |

| Full-width brackets | (System variables...) ❌ |

Converted to half-width (...) ✅ |

| Heading | ## Verification |

## Testing ✅ (more natural in English) |

| Code notation | WEEKDAY() as normal text |

`WEEKDAY()` with backticks ✅ |

Upon examining the hallucinated Excerpt content, we found it was an appropriate summary of the article. While we prioritized translation fidelity by implementing the "return empty if empty" prompt tuning, we also plan to adjust the pre-translation process to ensure Japanese Excerpts exist.

Comparison of Evaluation Model Characteristics

Nova Pro: Fast (2-5 seconds) and low cost. When allowed to choose Tie, 72% of judgments were Ties, but with Forced Choice it provided clear judgments for all cases. However, most were slightly_better with modest distinctions. Seems suitable for primary screening of large volumes of articles.

gpt-oss-120b: Provides reasoning with careful comparisons section by section. Its strength is that concrete improvement points like empty Excerpt hallucinations and structural differences can be identified from the summary. However, due to the output format typical of reasoning models, JSON parsing failed in some cases (initially 15/51 cases, improved to 1/51 with retry), requiring attention to output stability.

Gemini 3.1 Pro: Provided the most detailed evaluations. It quotes specific phrases and explains "why that translation is good." It detects typos, points out hallucinations, and even provides context-aware comments like "reads like it was translated by someone who actually writes Terraform." Gemini's evaluations were most helpful in difficult cases.

Conclusion

We've confirmed that our Sonnet 4.6 translation pipeline under construction is on track to exceed the quality of the previous version. It shows excellent results particularly in free translation ability, custom Markdown block preservation, and adaptation to English writing conventions.

Meanwhile, evaluations from gpt-oss-120b and Gemini revealed specific issues to address through preprocessing or prompt tuning, such as hallucinations caused by empty fields. When we actually modified the prompt and re-evaluated, articles that had lost to the old translation were reversed to Sonnet 4.6 wins. The LLM-as-a-Judge we introduced can quickly judge post-modification quality, promising more efficient improvement cycles.

Along with the update to Sonnet 4.6, we've established a foundation for LLM-based evaluation and improvement mechanisms. We aim to continue improving translation quality to deliver technical information to more people.