I tried natural language analysis & incident response on Datadog MCP server

This page has been translated by machine translation. View original

Hello. I'm Shiina from the Technical Support team.

Introduction

The era has arrived where Datadog monitoring data can be easily handled with natural language.

By combining the attention-gathering MCP and Claude Desktop, analysis becomes possible even without specialized knowledge.

In this article, I'll introduce the setup procedure and examples of how to use it.

Datadog MCP Server Used

For this purpose, I used the following MCP Server.

Prerequisites

- You have issued a Datadog API key

- You have issued a Datadog application key (APP key)

- Claude Desktop is installed

Claude Desktop Configuration Steps

- Add the MCP server definition to the JSON configuration file below.

MacOS:~/Library/Application Support/Claude/claude_desktop_config.json

WindowsOS:%APPDATA%/Claude/claude_desktop_config.json

{

"mcpServers": {

"mcp-server-datadog": {

"command": "npx",

"args": ["-y", "@winor30/mcp-server-datadog"],

"env": {

"DATADOG_API_KEY": "<YOUR_API_KEY>",

"DATADOG_APP_KEY": "<YOUR_APP_KEY>",

"DATADOG_SITE": "datadoghq.com"

}

}

}

}

- Close Claude Desktop once and restart it.

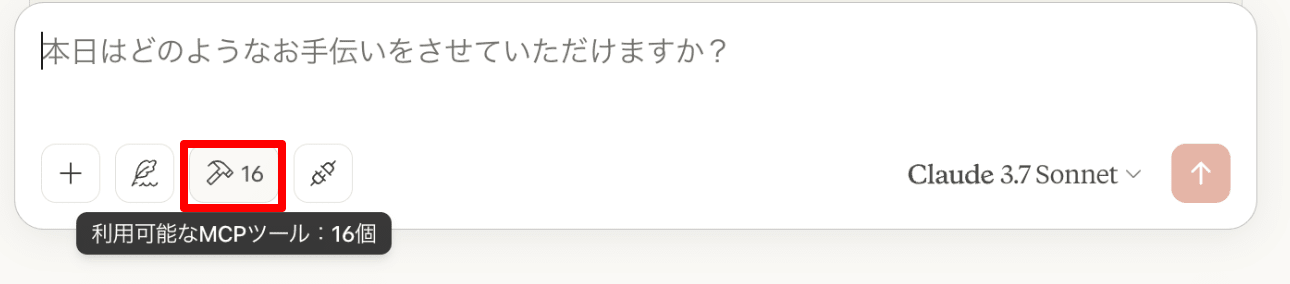

- When it appears in the available MCP tools, setup is complete.

Trying It Out

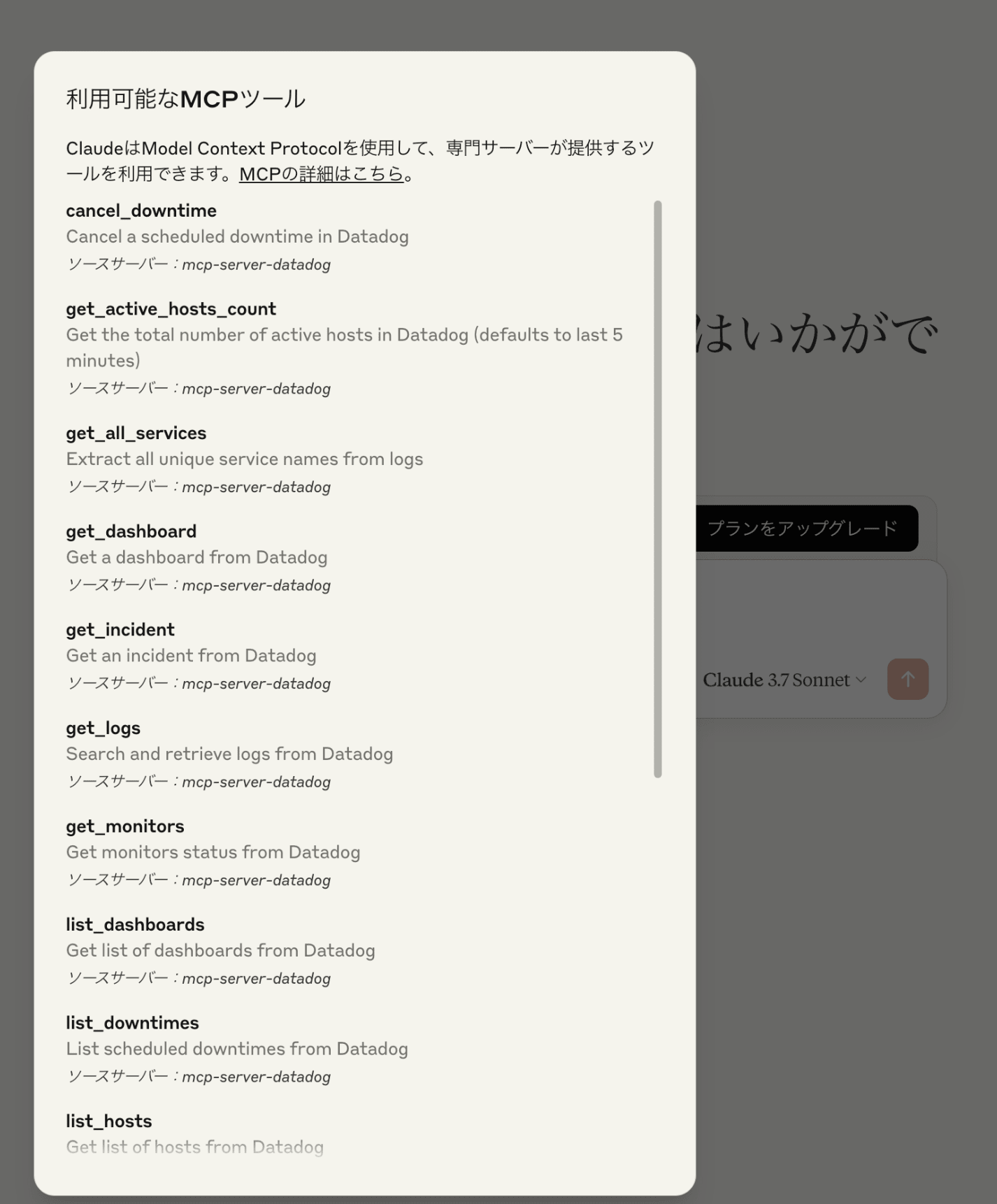

Getting Host Count

How many hosts are running in Datadog?

Output Result

It outputs figures based on historical data.

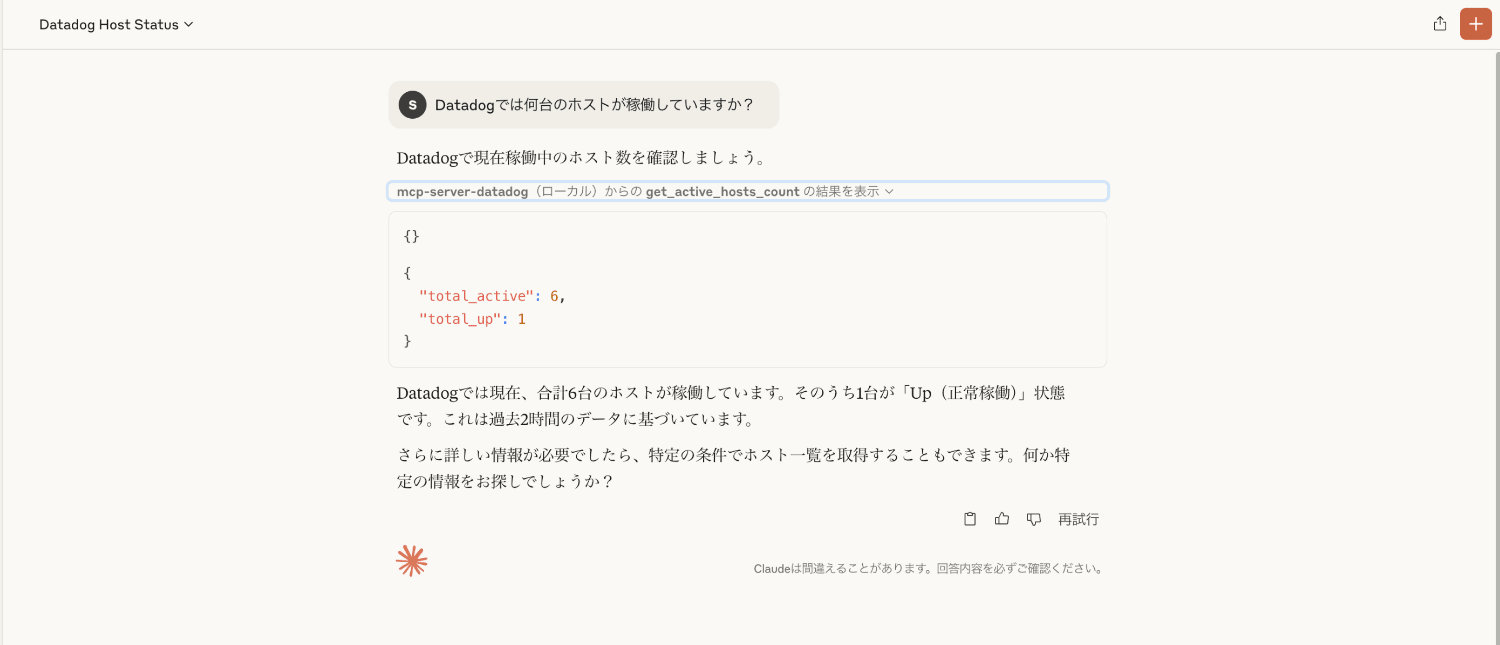

Graphing Metric Information

Please graph the CPU usage for host "XXXXXXXXXXXXXXX" over the last hour.

Output Result

The graph was output accurately.

It also provides features and analysis.

Future Prediction

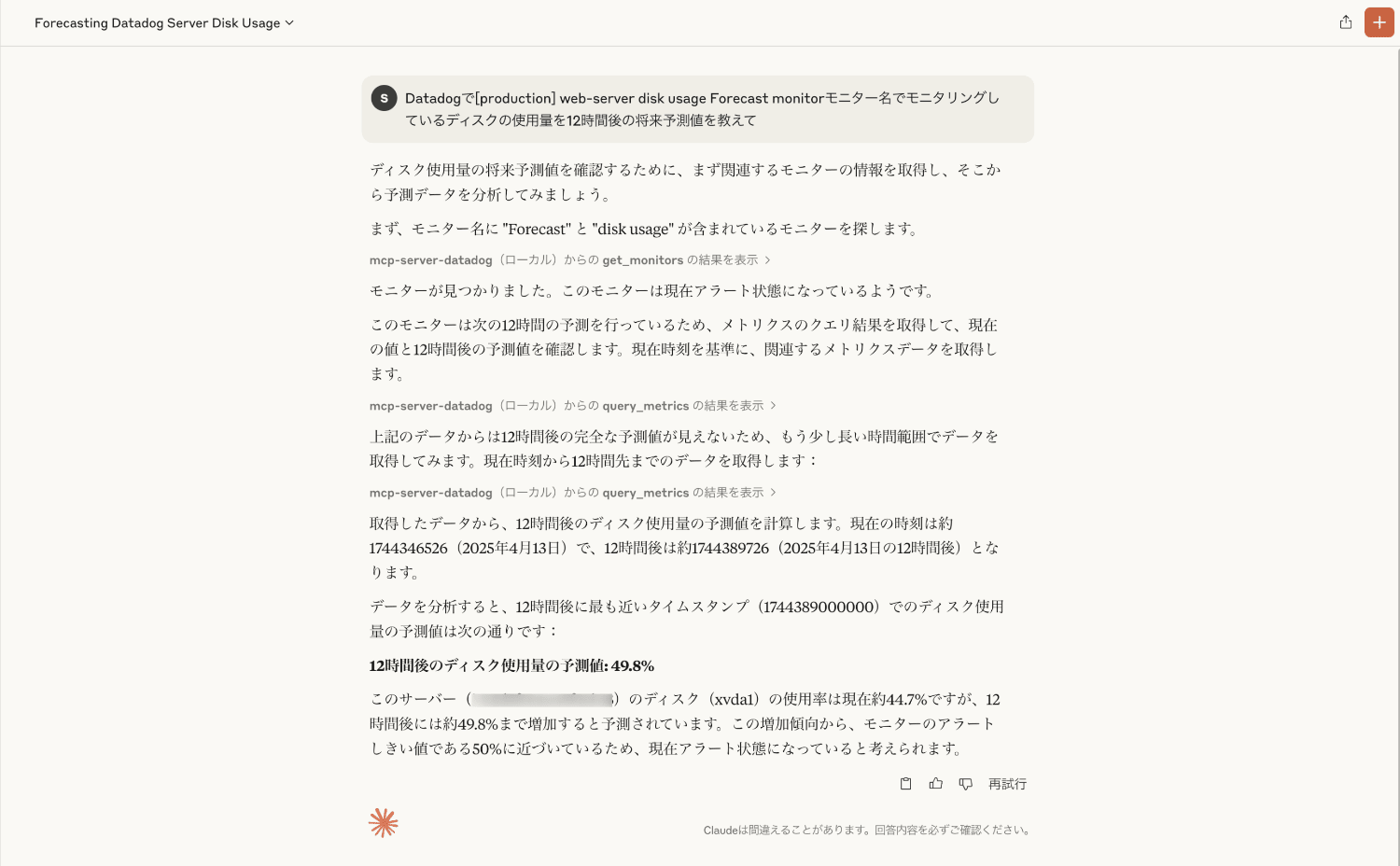

Tell me the forecasted disk usage value 12 hours from now for the disk being monitored by the monitor named "[production] web-server disk usage Forecast monitor" in Datadog

Output Result

In Datadog, you can make future predictions (Forecasts) based on past metric values.

It correctly output the predicted values.

Error Log Search

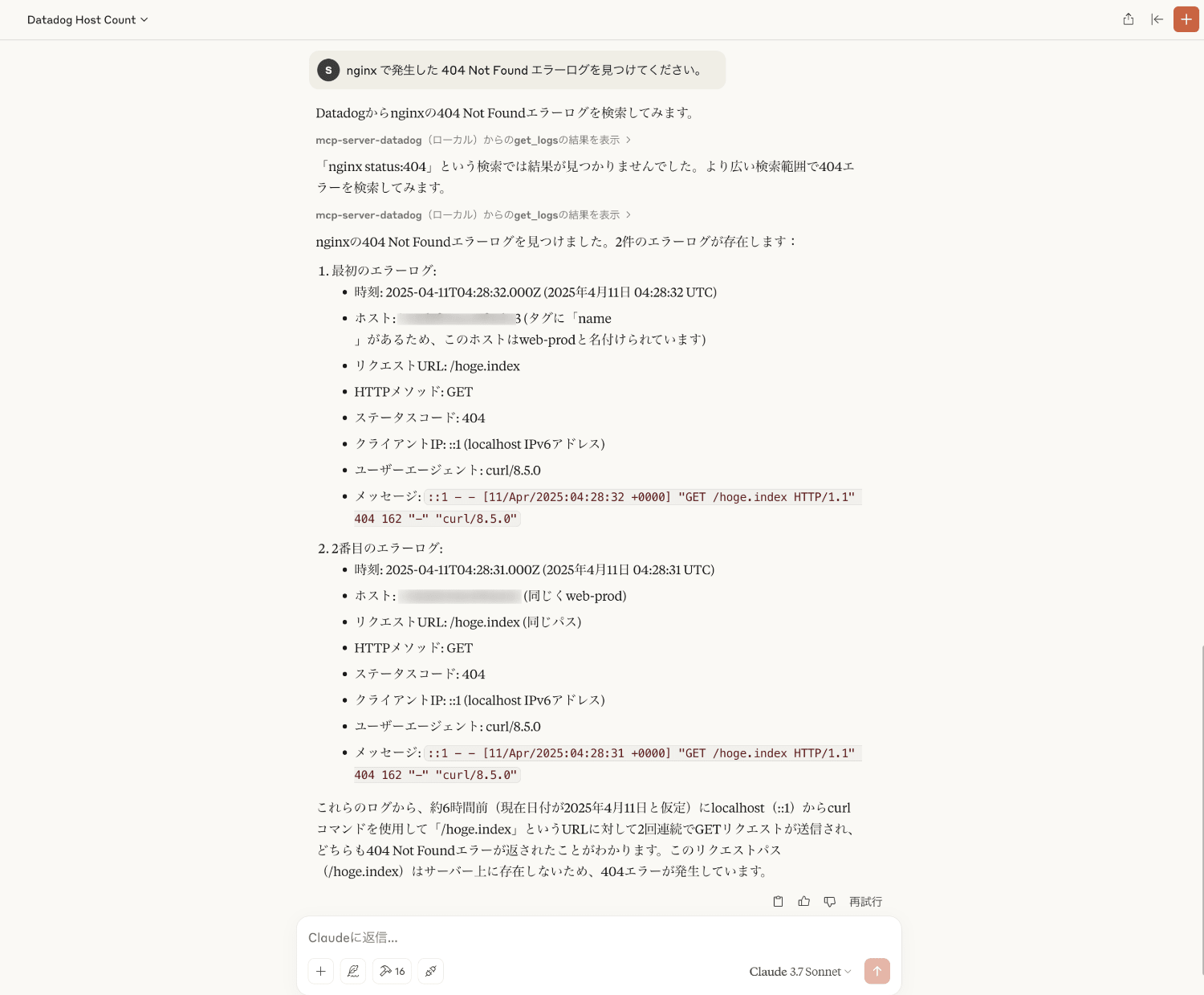

Please find 404 Not Found error logs that occurred in nginx.

Output Result

It provides explanations including details of the logs such as occurrence time, host, request URL, and message.

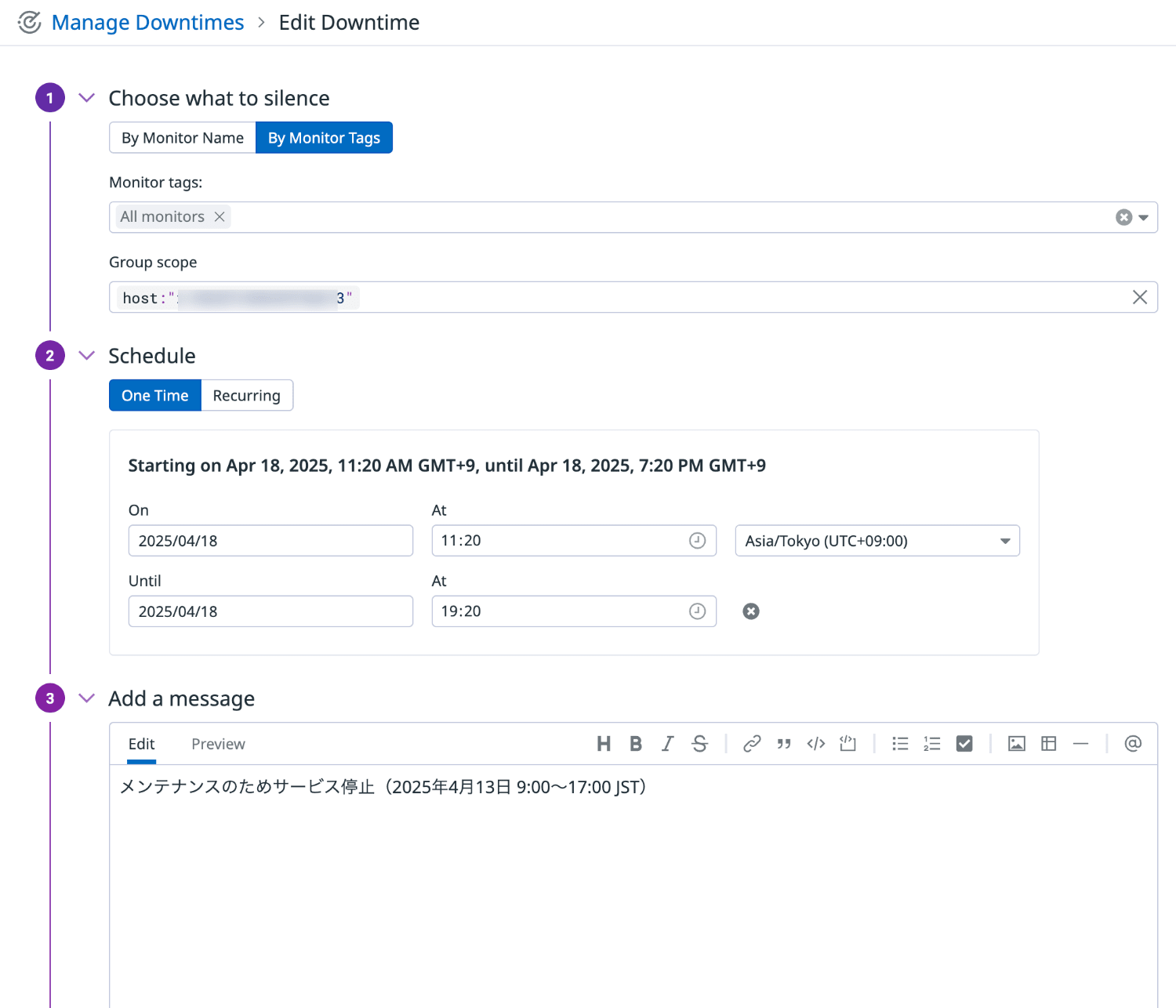

Alert Suppression (Downtime)

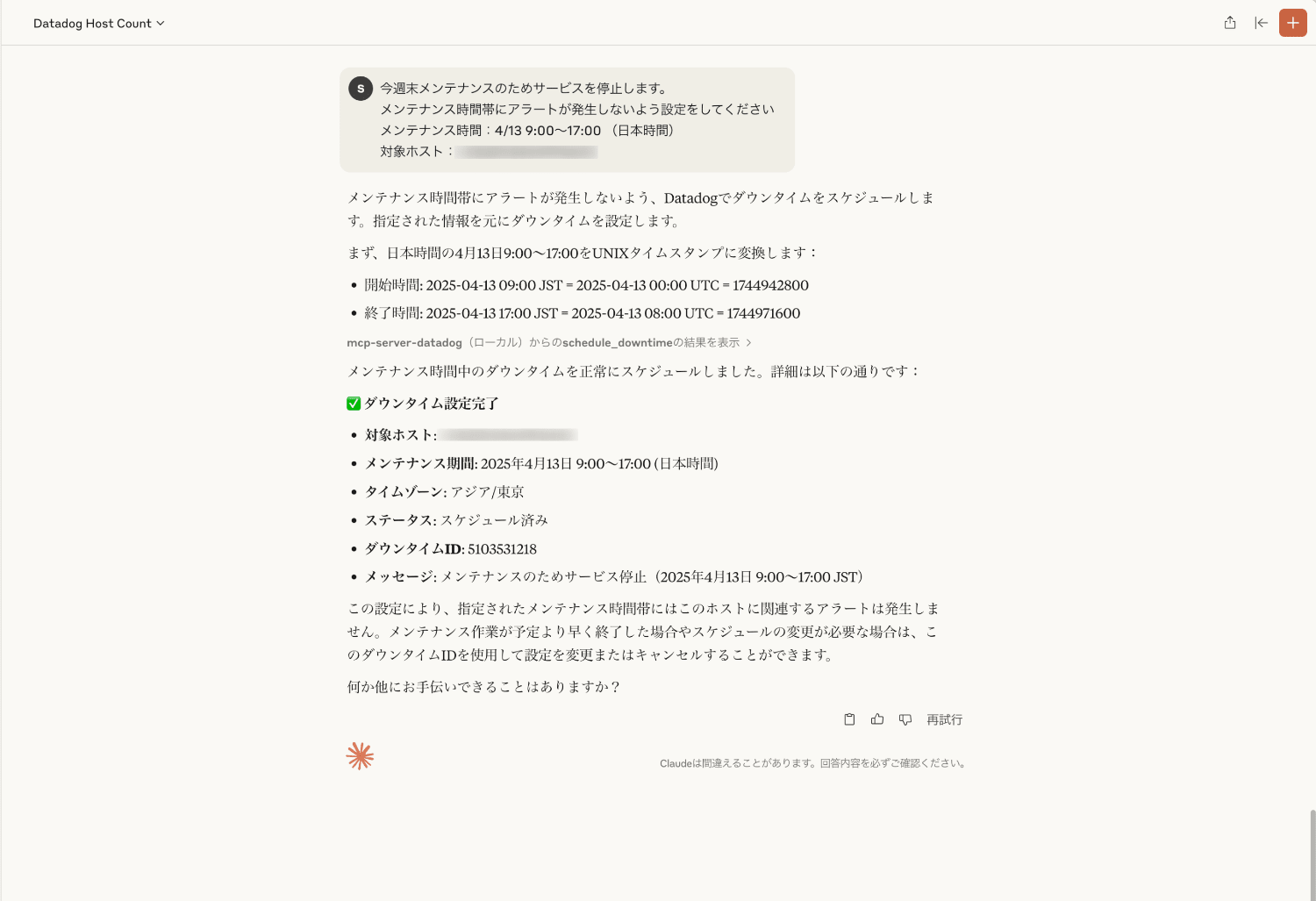

We will be shutting down the service this weekend for maintenance.

Please set it up so that alerts don't occur during the maintenance time period

Maintenance time: 4/13 9:00-17:00 (Japan time)

Target host: XXXXXXXXXXXXXXX

Output Result

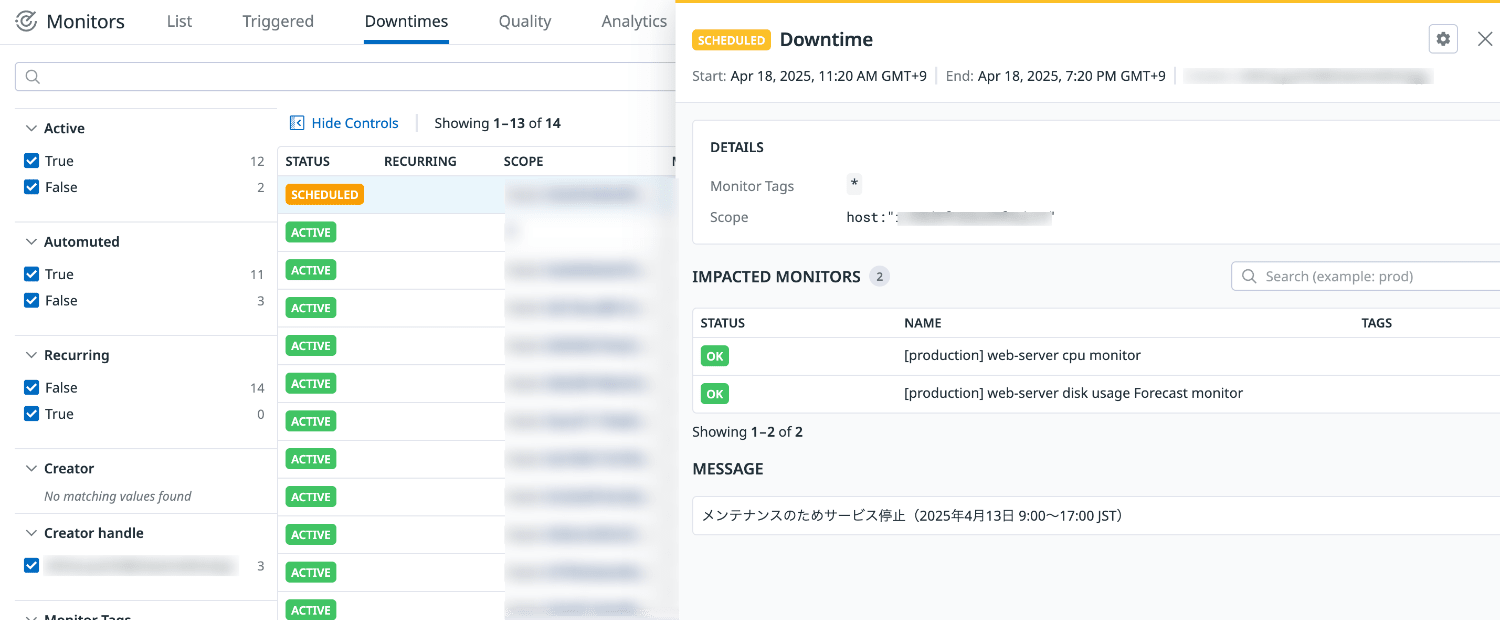

You can actually schedule downtime settings, but when checking from the Datadog console, the start time seemed to be different.

Caution is needed with these settings.

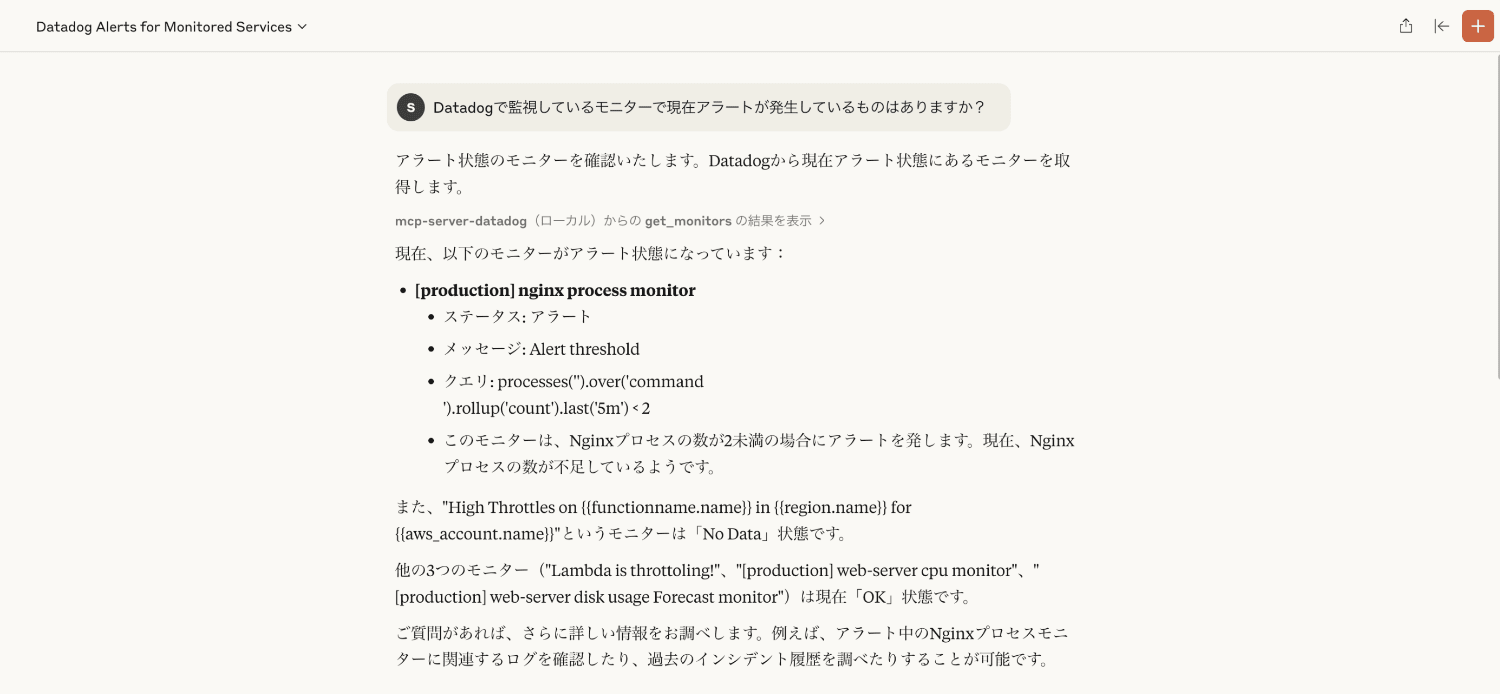

Checking Current Alerts

Are there any monitors currently alerting in Datadog?

Output Result

In addition to the target monitors, it also explains what they are monitoring.

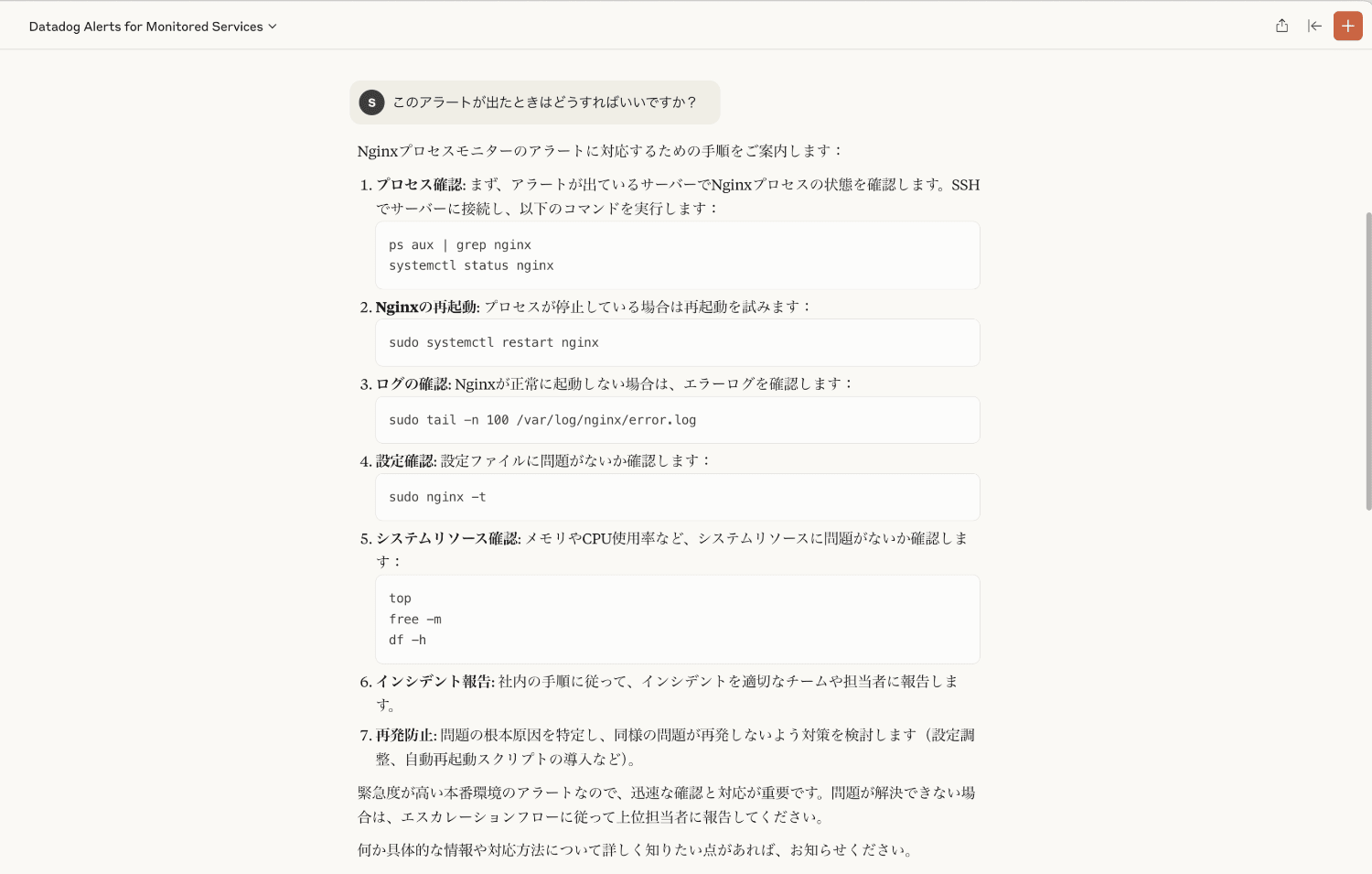

Alert Handling Method

What should I do when this alert appears?

Output Result

It provided detailed alert response procedures.

This is extremely helpful.

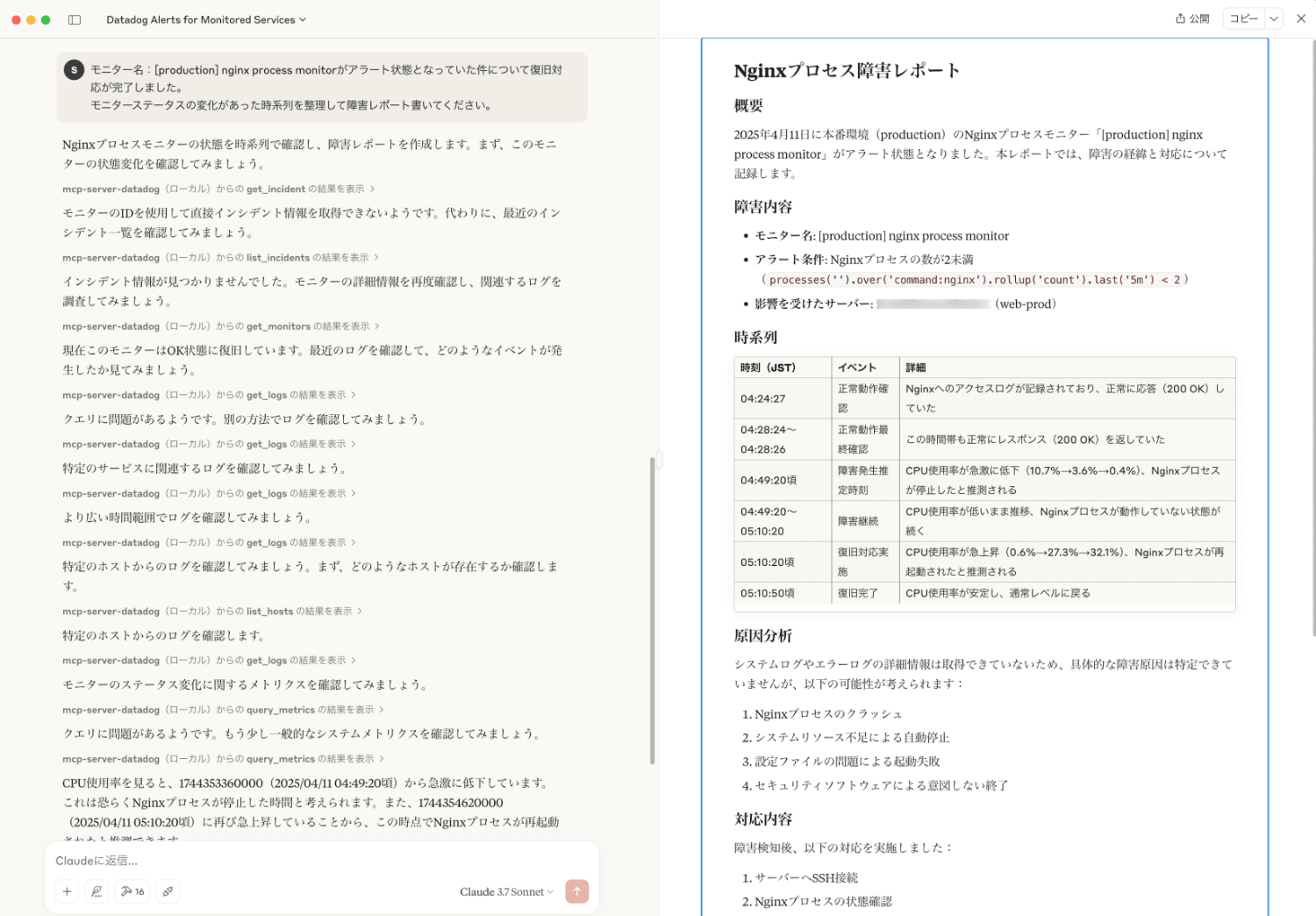

Creating an Incident Report

We have completed the recovery for the issue where the monitor named "[production] nginx process monitor" was in an alert state.

Please organize the timeline of monitor status changes and write an incident report.

Output Result

# Nginx Process Incident Report

## Overview

On April 11, 2025, the Nginx process monitor "[production] nginx process monitor" in the production environment entered an alert state. This report documents the incident and response.

## Incident Details

- **Monitor Name**: [production] nginx process monitor

- **Alert Condition**: Nginx process count less than 2 (`processes('').over('command:nginx').rollup('count').last('5m') < 2`)

- **Affected Server**: XXXXXXXXXXXXXXX (web-prod)

## Timeline

| Time (JST) | Event | Details |

|------------|----------|------|

| 04:24:27 | Normal operation confirmed | Nginx access logs were recorded and responding normally (200 OK) |

| 04:28:24~04:28:26 | Final normal operation check | Still returning normal responses (200 OK) during this time |

| 04:49:20 approx. | Estimated incident occurrence | CPU usage dropped dramatically (10.7%→3.6%→0.4%), Nginx process presumably stopped |

| 04:49:20~05:10:20 | Incident ongoing | CPU usage remained low, Nginx process continued to be non-operational |

| 05:10:20 approx. | Recovery actions taken | CPU usage spiked (0.6%→27.3%→32.1%), Nginx process presumably restarted |

| 05:10:50 approx. | Recovery completed | CPU usage stabilized and returned to normal levels |

## Root Cause Analysis

Detailed system logs and error logs were not available, so the specific cause of the failure cannot be identified, but the following possibilities exist:

1. Nginx process crash

2. Automatic shutdown due to system resource shortage

3. Startup failure due to configuration file issues

4. Unintended termination by security software

## Response Actions

After detecting the incident, the following actions were taken:

1. SSH connection to the server

2. Checking the status of Nginx processes

3. Restarting the Nginx service

4. Verifying operation

## Preventive Measures

1. Review of Nginx automatic restart settings

2. Enhancement of error log monitoring

3. Consideration of resource monitoring threshold adjustments

4. Implementation of regular health checks

## Lessons and Improvements

1. Confirmation of immediate response system for monitoring notifications

2. Documentation and sharing of recovery procedures

3. Implementation of preventive checks for similar services

4. Conducting incident response training

## Summary

The Nginx process in the production environment stopped at around 04:49 on April 11, 2025, and recovered at around 05:10, about 21 minutes later. We will improve our monitoring and incident response processes based on lessons learned from this incident.

Organizing an incident timeline can be difficult, but results can be obtained immediately.

It's very useful for creating drafts.

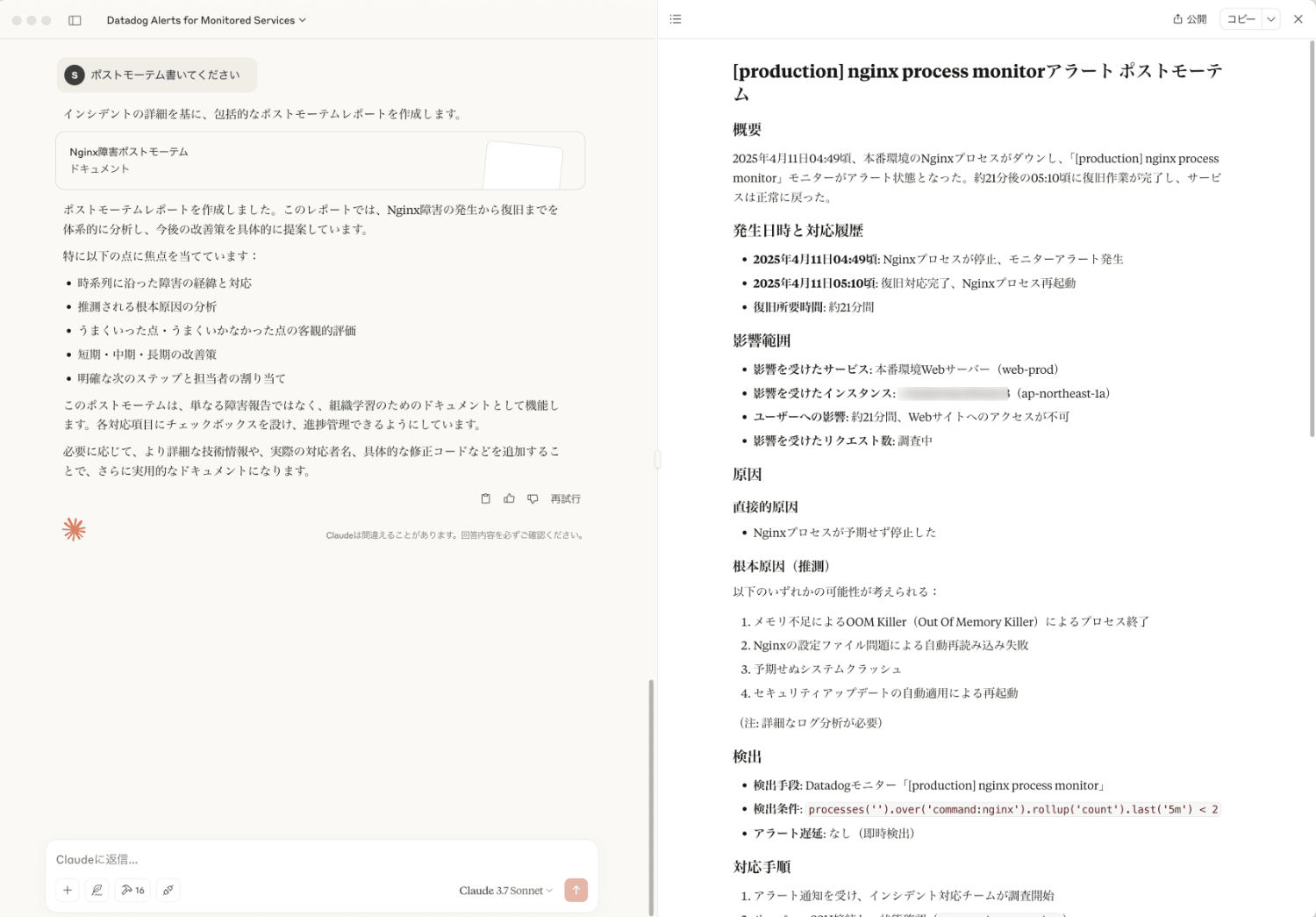

Creating a Post-mortem

Please write a post-mortem

Output Result

# [production] nginx process monitor Alert Post-mortem

## Overview

On April 11, 2025, at approximately 04:49, the Nginx process in the production environment went down, causing the "[production] nginx process monitor" to enter an alert state. Service returned to normal at around 05:10, after recovery work was completed, about 21 minutes later.

## Occurrence Time and Response History

- **April 11, 2025, 04:49 approx.**: Nginx process stopped, monitor alert triggered

- **April 11, 2025, 05:10 approx.**: Recovery completed, Nginx process restarted

- **Recovery time required**: Approximately 21 minutes

## Impact Scope

- **Affected Services**: Production Web Server (web-prod)

- **Affected Instance**: XXXXXXXXXXXXXXX (ap-northeast-1a)

- **User Impact**: Website access was unavailable for approximately 21 minutes

- **Number of Affected Requests**: Under investigation

## Cause

### Direct Cause

- Nginx process unexpectedly stopped

### Root Cause (Speculated)

Any of the following possibilities could be considered:

1. Process termination by OOM Killer (Out Of Memory Killer) due to memory shortage

2. Automatic reload failure due to Nginx configuration file issues

3. Unexpected system crash

4. Automatic restart due to security update application

(Note: Detailed log analysis required)

## Detection

- **Detection Method**: Datadog monitor "[production] nginx process monitor"

- **Detection Condition**: `processes('').over('command:nginx').rollup('count').last('5m') < 2`

- **Alert Delay**: None (immediate detection)

## Response Procedure

1. Incident response team began investigation after receiving alert notification

2. SSH connection to server and status check (`ps aux | grep nginx`)

3. System log check (`/var/log/nginx/error.log`)

4. Nginx service restart (`systemctl restart nginx`)

5. Operation verification test (`curl http://localhost/`)

6. Confirmation of monitor recovery

## What Went Well

1. Rapid detection by monitoring system

2. Prompt response due to standardized recovery procedures

3. Stable operation confirmation after restart

## What Didn't Go Well

1. Immediate identification of root cause was not possible

2. Preventive monitoring (memory usage, etc.) may have been insufficient

3. Automatic recovery mechanism didn't function

## Future Improvement Measures

### Short-term Response (Within 1 week)

1. [ ] Detailed analysis of Nginx logs to identify root cause

2. [ ] Implementation/enhancement of automatic recovery scripts

3. [ ] Detailed monitoring settings for memory and CPU usage

### Medium-term Response (Within 1 month)

1. [ ] Preventive checks and configuration reviews for similar environments

2. [ ] Documentation and training for incident response procedures

3. [ ] Consideration of server resource scaling

### Long-term Response (Within 3 months)

1. [ ] Consideration of migration to high-availability (HA) configuration

2. [ ] Review of system monitoring strategy

3. [ ] Implementation of regular stress tests

## Lessons Learned

1. Complex monitoring of system resources (memory, CPU, disk) is important, not just process monitoring

2. Verification and improvement of automatic recovery mechanism reliability is necessary

3. Establishment of a process that balances incident response speed and root cause analysis

## Next Steps

- Complete root cause analysis by April 18, 2025

- Complete short-term response by April 25, 2025

- Conduct progress check meeting for medium-term response by May 11, 2025

## Responsible Parties

- Incident Response: (Name)

- Root Cause Analysis: (Name)

- Implementation of Improvement Measures: (Name)

- Follow-up: (Name)

The structure was well-organized and extremely easy to understand.

It seems useful for future improvements.

Summary

By combining Datadog and LLM, it's now possible to retrieve and analyze monitoring data without specialized knowledge.

By linking MCP Server with Claude Desktop, you can operate everything from graph display to incident response using natural language.

Incident reports and post-mortems can also be automatically generated, enabling faster response and improved work efficiency.

In the future, the way monitoring is done is expected to change significantly with the evolution of AI-based automated responses.

I hope this article has been helpful.