A non-engineer salesperson built a "team of 6 AIs" with Claude Code to write a proposal

This article is part of the "Non-Engineer Trying Claude/Hands-on with Claude" series.

⚠️ Warning: "Ishikawa Company, Inc." appearing in this article is a fictional company, and the contents of the proposal (service configuration, costs, case studies, etc.) are all created for demonstration purposes in this article. They have no relation to actual services, prices, or achievements provided by Classmethod.

Isn't it hard to write proposals alone?

I'm Ishikawa from Classmethod, working in sales. I'm not an engineer.

Creating proposals is an unavoidable part of sales work. And it's quite challenging.

- Writing alone leads to a biased perspective. Have you ever thought something was good, only to find it didn't resonate with the customer?

- I want to ask for a review, but everyone is busy. It's hard to ask, "Could you take a look at this?"

- Delegating to AI produces superficial results. When I asked it to "write a proposal," I got generic content that could apply to any customer.

At one of those moments, a thought struck me:

"Actual proposals are team efforts with researchers, designers, and reviewers. Could I recreate this team structure with AI?"

Claude Code has a mechanism called "agents" where you can create AI personnel with defined roles and instructions.

The definition files are written in Markdown (plain text files). No code is needed.

Could I, a non-engineer, create this? I decided to try it out.

First, creating a 3-person team

Concerned that starting with too many people might take things in an unexpected direction, I consulted with Claude and first created a minimal configuration of 3 agents.

① Researcher → Organizes customer information

↓

② Writer → Writes the proposal

↓

③ Reviewer → Quality check

While conversing with Claude, I created definition files (Markdown) for each role. Again, I didn't write a single line of code.
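For readers who do think in code, the three-step handoff above can be pictured as a simple function chain. This is only a conceptual sketch in Python: `researcher`, `writer`, `reviewer`, and `run_pipeline` are hypothetical stand-ins invented for illustration, not part of Claude Code, and the actual team was defined purely in Markdown.

```python
from typing import Callable, List

# Each "agent" is modelled as a plain function from text to text.
# These stubs are hypothetical; the real agents are Markdown definitions
# interpreted by Claude Code, not Python functions.
Agent = Callable[[str], str]


def researcher(brief: str) -> str:
    """Stand-in for the role that organizes customer information."""
    return f"[customer notes]\n{brief}"


def writer(notes: str) -> str:
    """Stand-in for the role that writes the proposal."""
    return f"[proposal draft]\n{notes}"


def reviewer(draft: str) -> str:
    """Stand-in for the role that performs the quality check."""
    return f"[quality check done]\n{draft}"


def run_pipeline(stages: List[Agent], brief: str) -> str:
    """Pass the output of each stage to the next one, in order."""
    result = brief
    for stage in stages:
        result = stage(result)
    return result


proposal = run_pipeline([researcher, writer, reviewer], "Ishikawa Company, Inc.")
print(proposal)
```

The point of the sketch is the shape: each role only sees what the previous role produced, and nothing is ever sent back for rework.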

To test the AI team's capabilities, I prepared a realistic scenario that sales professionals would find familiar.

Fictional customer "Ishikawa Company, Inc."

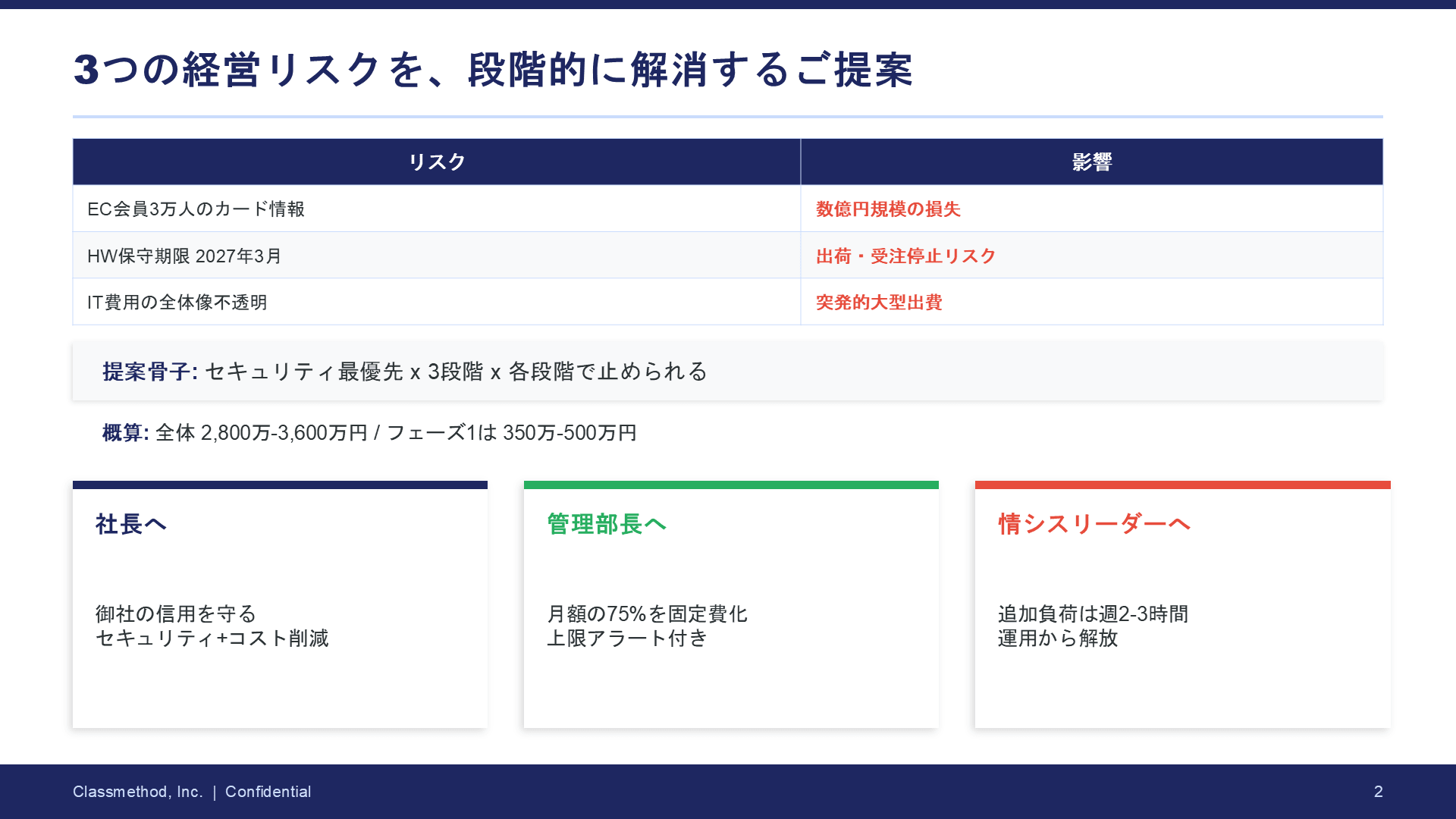

Ishikawa Company, Inc. (food manufacturer, 300 employees, annual revenue 12 billion yen)

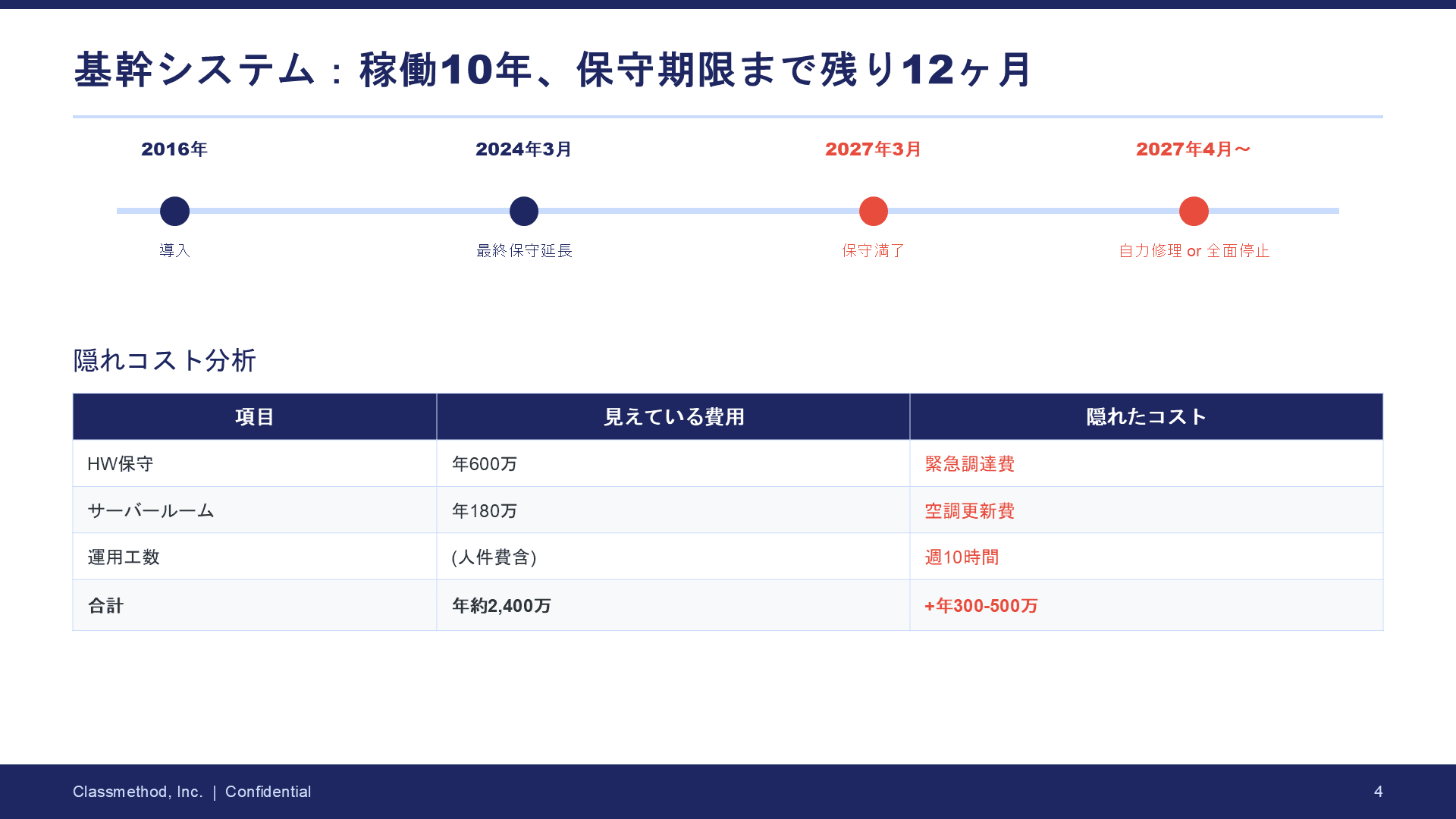

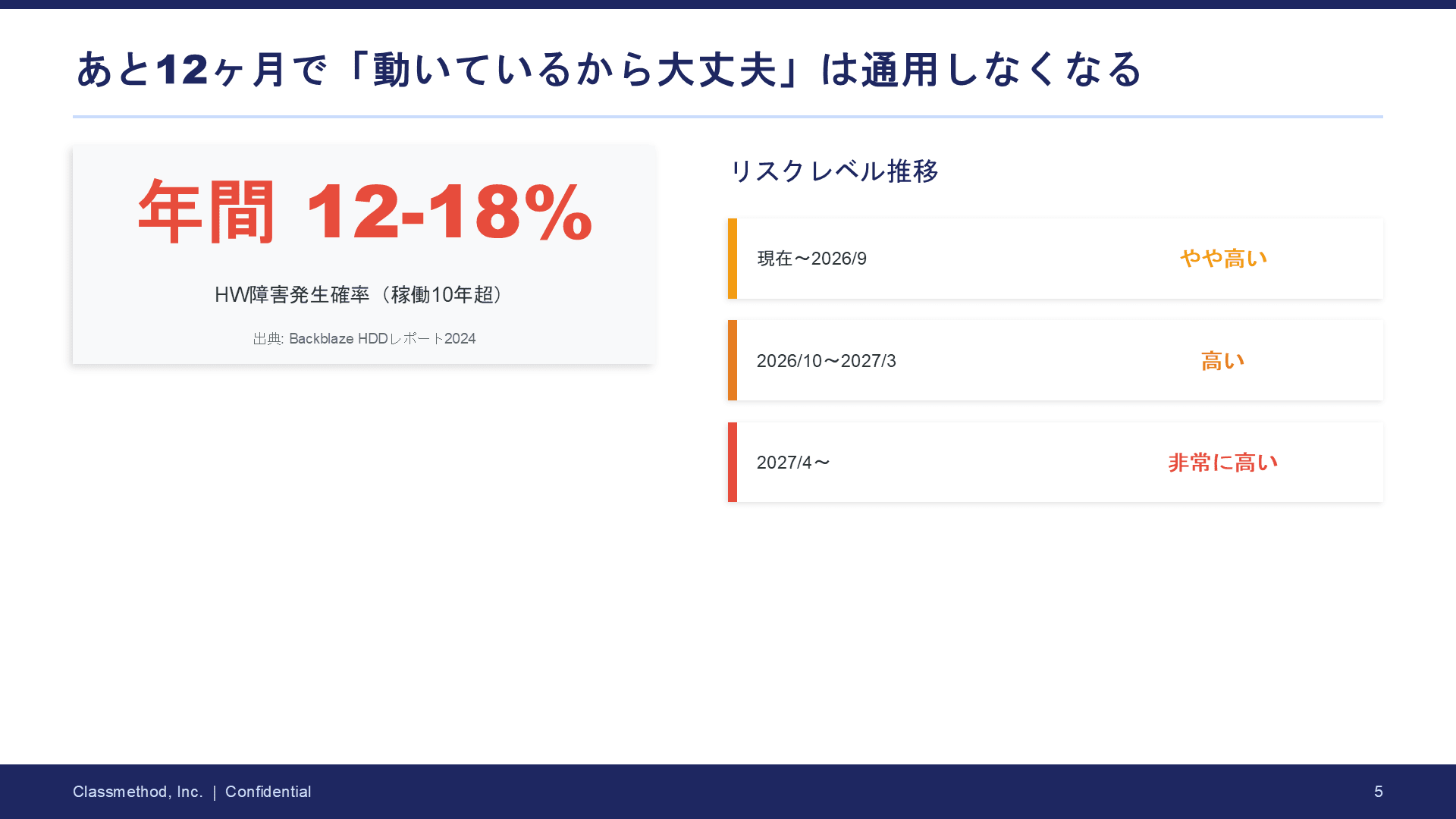

- Core system has been running on-premises for 10 years, hardware maintenance is expiring soon

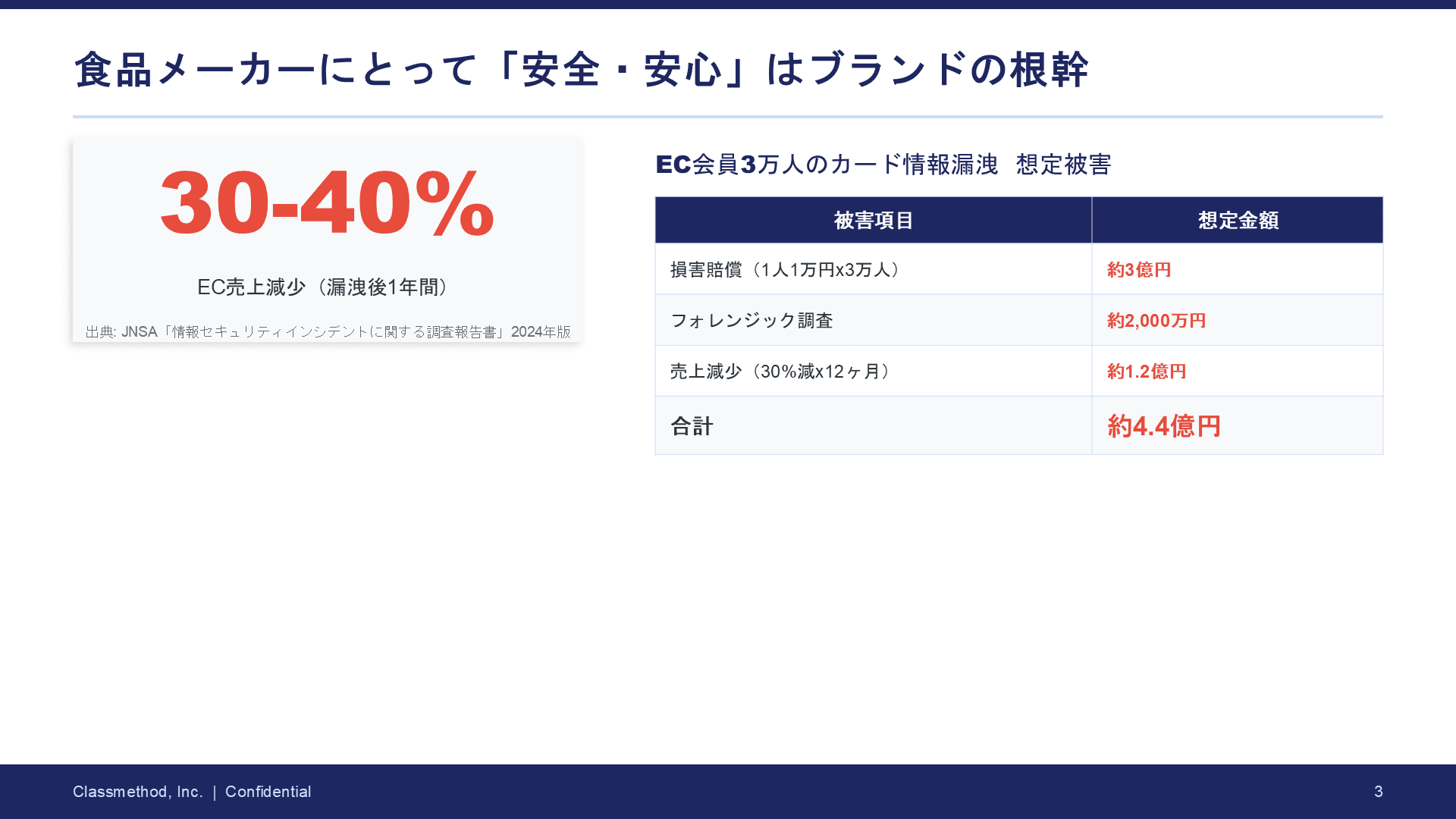

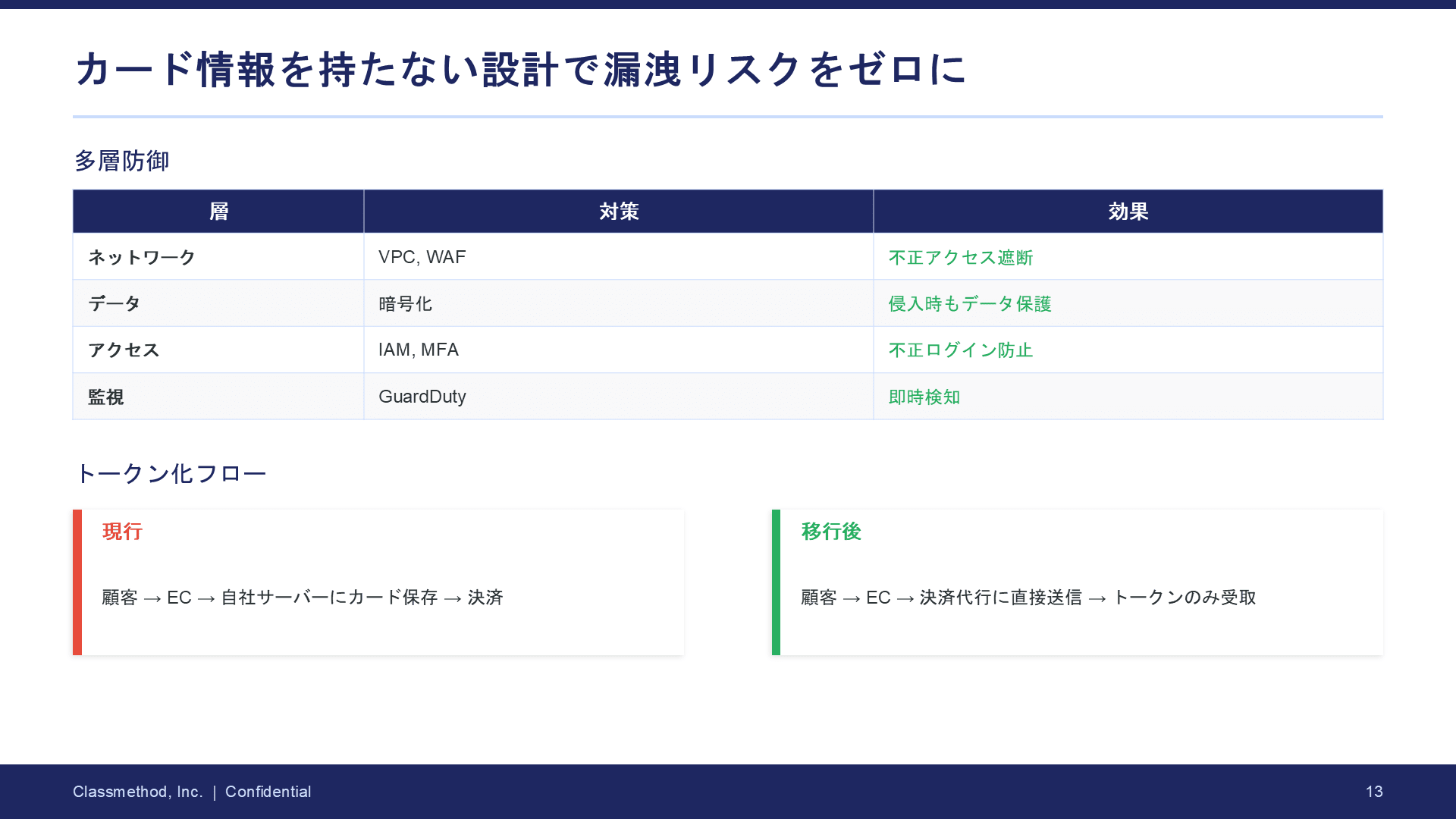

- Possesses credit card information for 30,000 EC members, with data breach risk left unaddressed

- After a ransomware attack on a competitor, management is feeling a sense of urgency

- IT department is overwhelmed with just 2 staff members

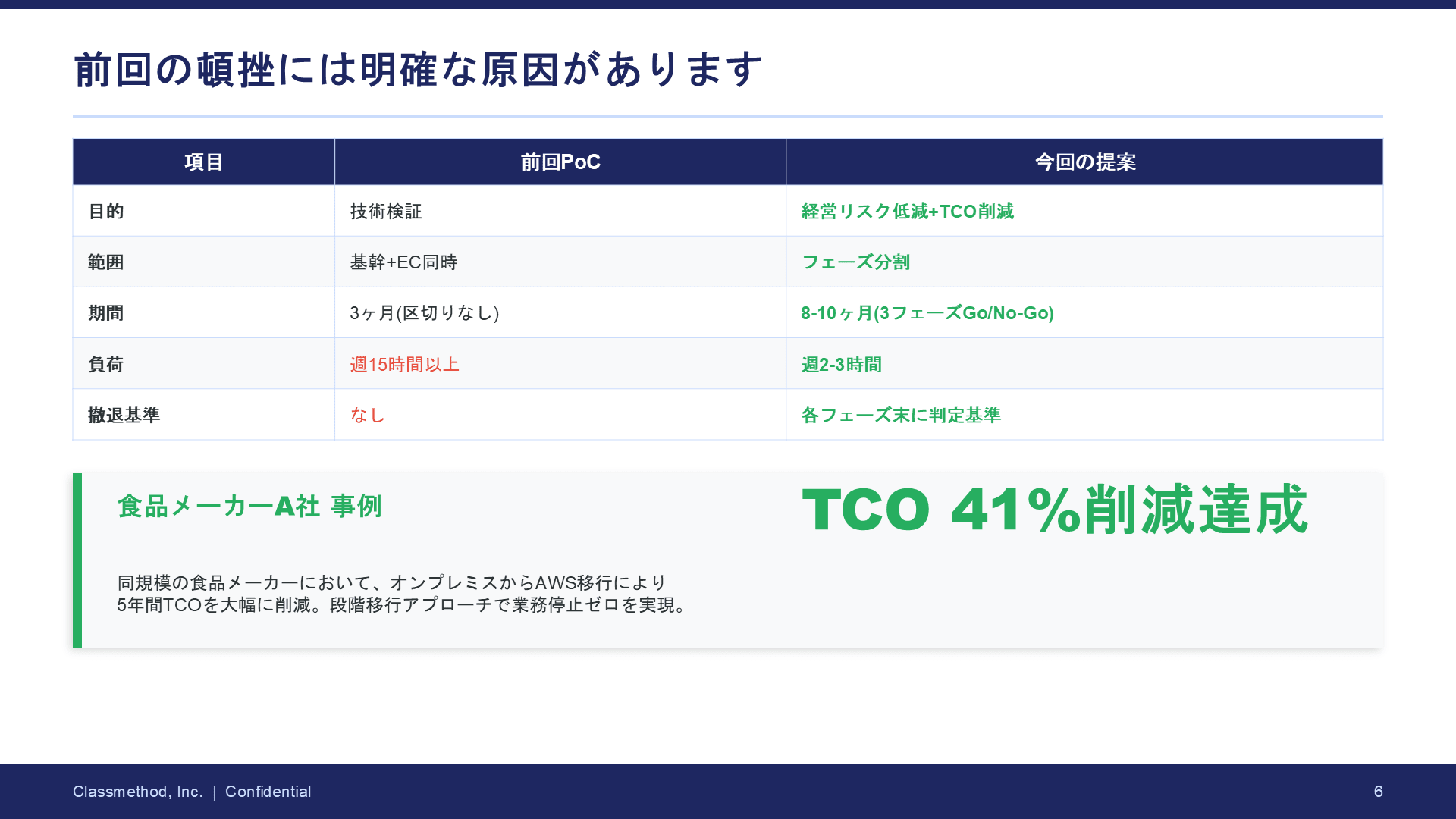

- A migration PoC by another SI vendor stalled 3 years ago

The proposal targets 3 people, each with the following positions and perspectives:

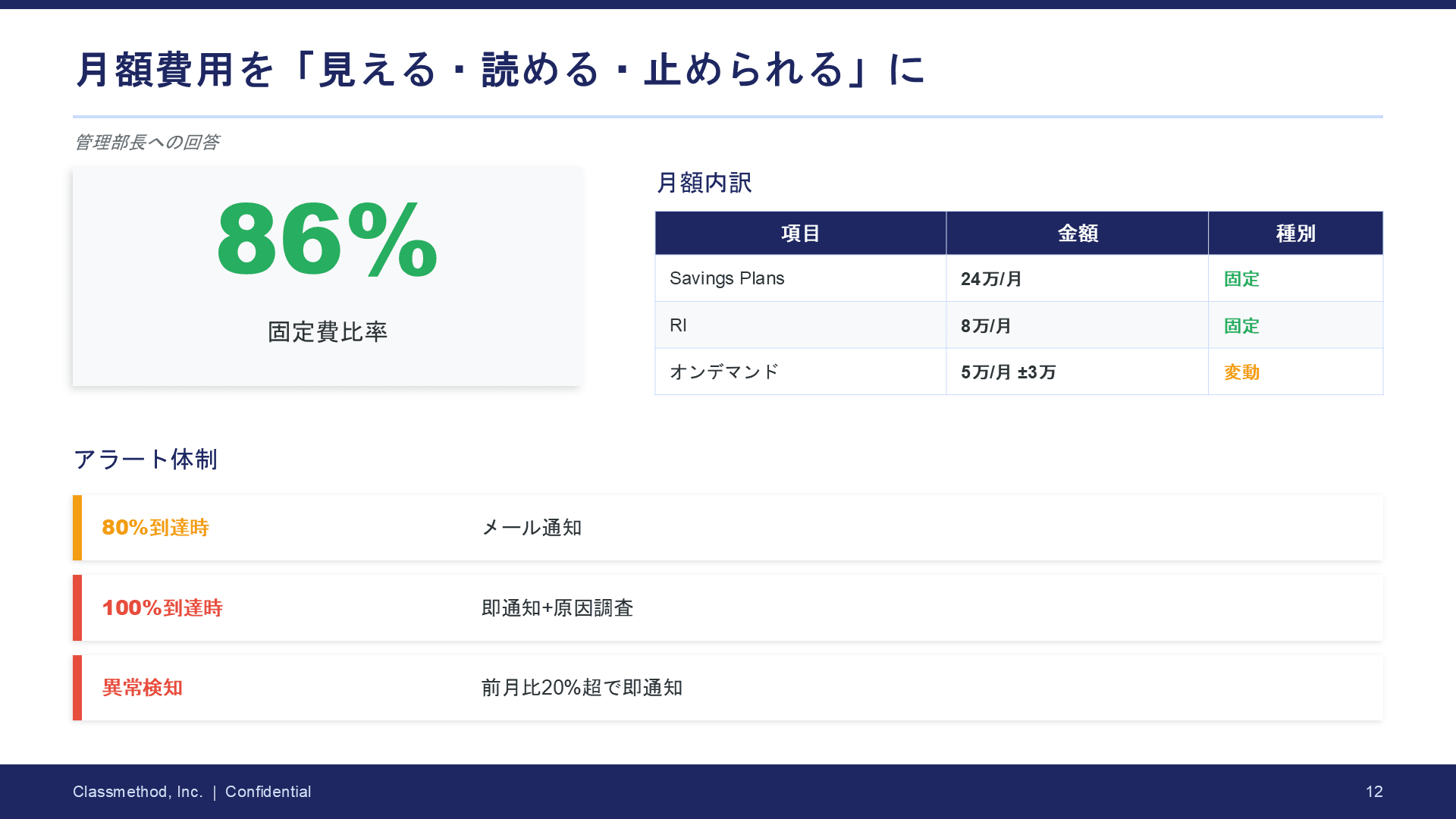

- President ("How much will it cost? Can you be trusted?")

- Administrative Manager (cost guardian, concerned about variable charges)

- IT System Leader (positive but overwhelmed)

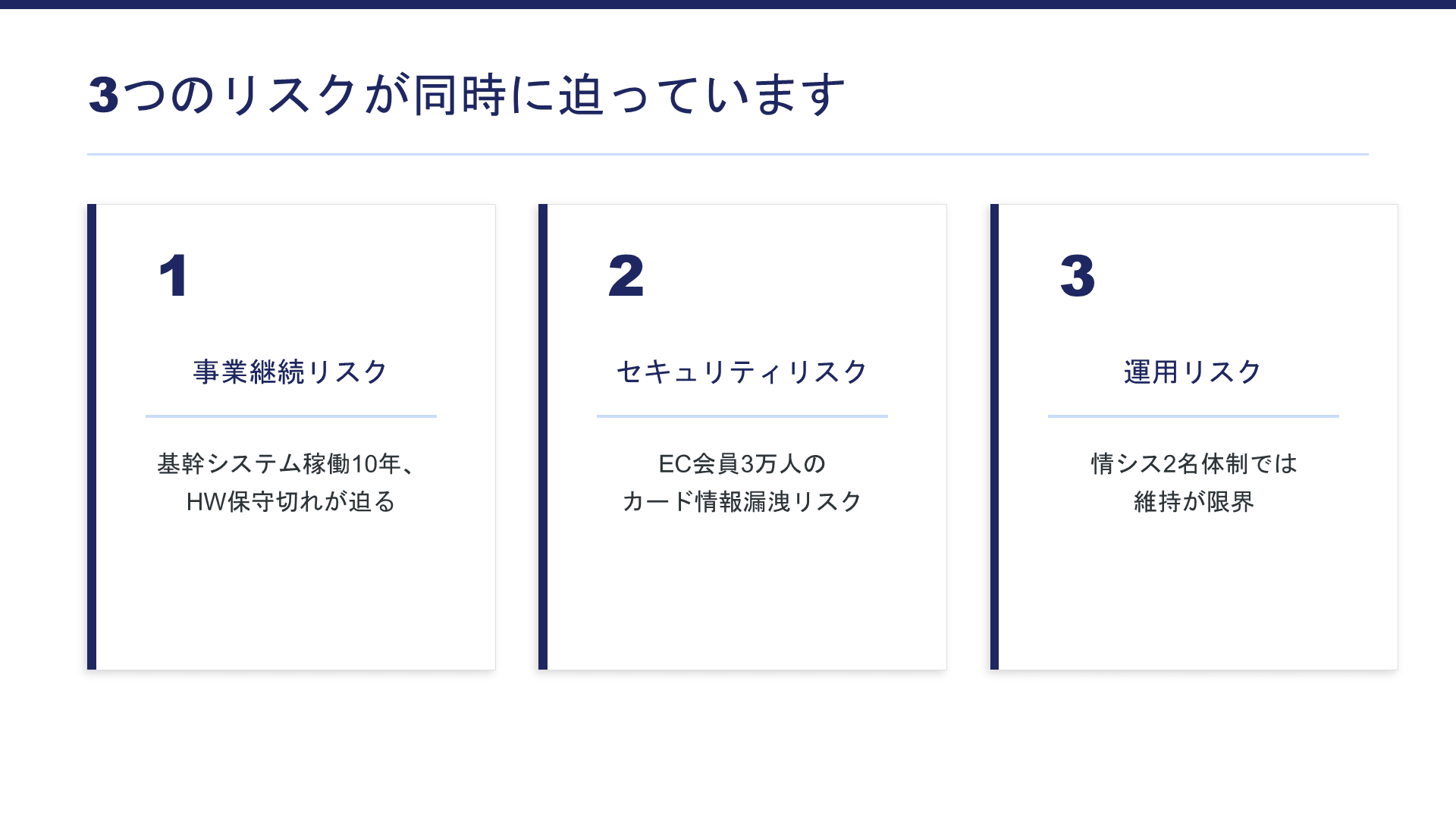

Is the proposal created by 3 agents lacking?

The resulting proposal is as follows.

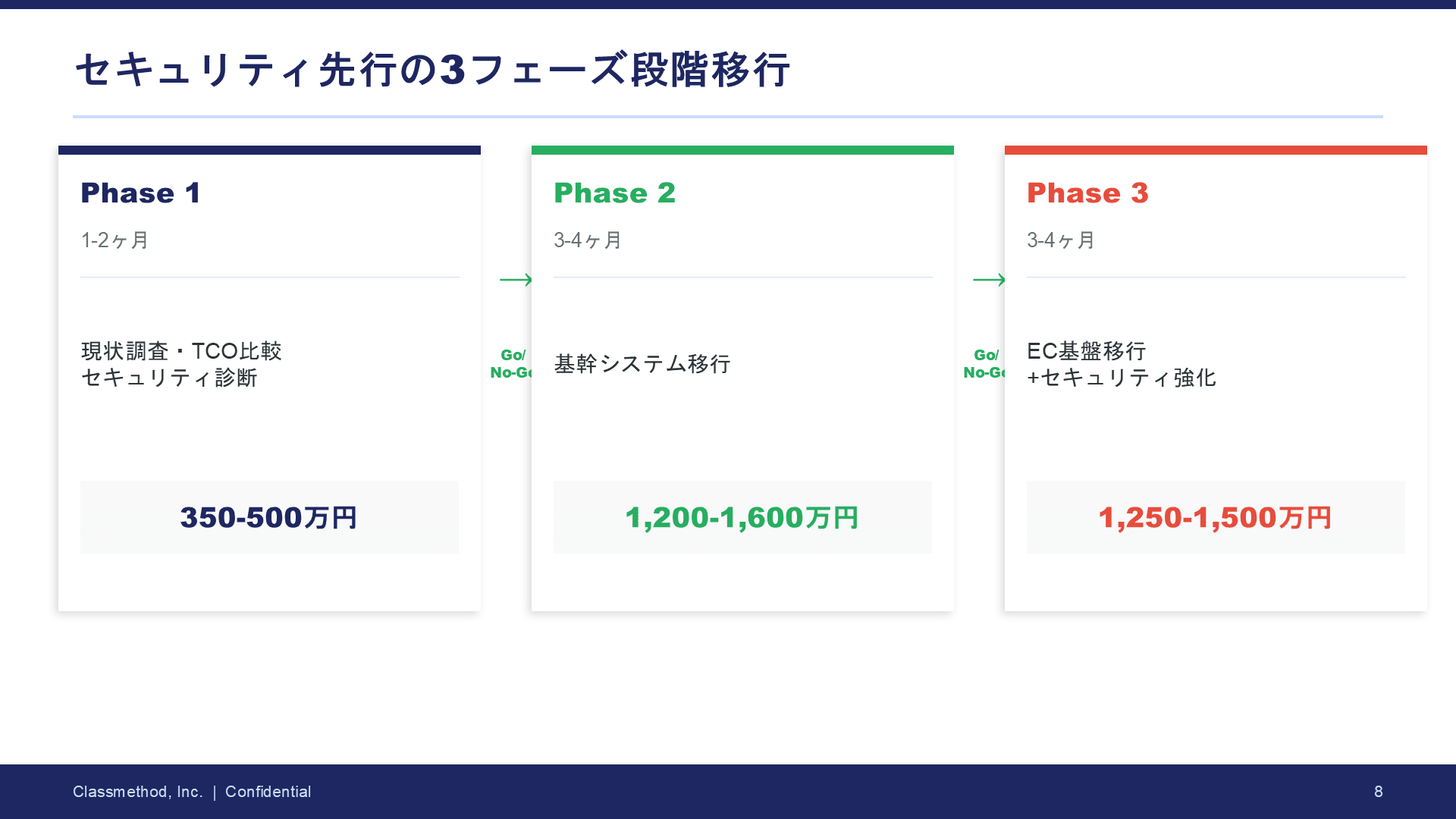

The flow from challenges → solutions → costs → next steps is there.

But if asked, "Will this proposal actually move the customer?"... it's hard to say yes.

Looking at the material, the main points that concerned me were:

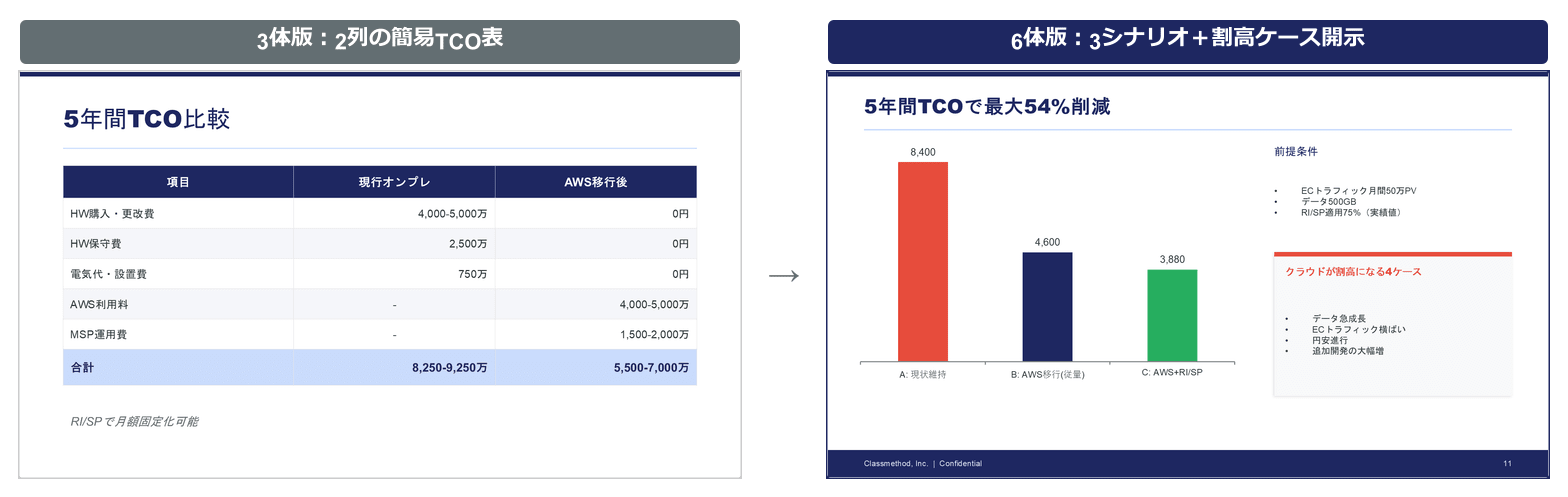

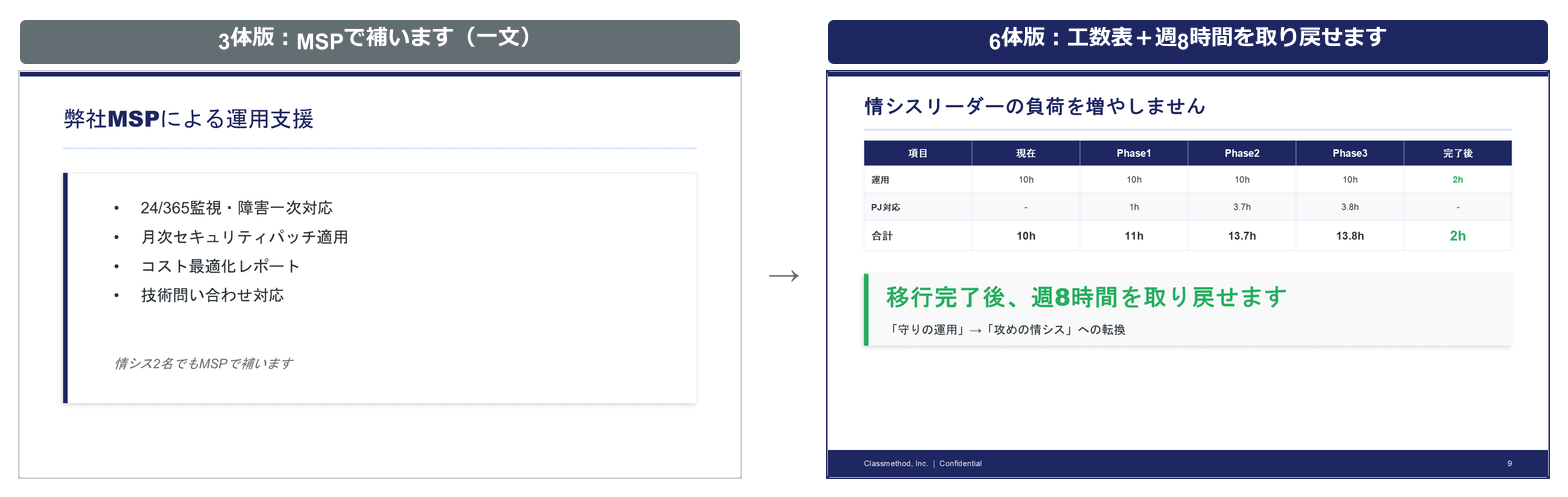

| Point of concern | What the 3-agent version did |
|---|---|
| Who the proposal is for | No executive summary. Jumps straight to challenges |
| The project that stalled 3 years ago | Only one line about "phased migration" |
| Cost comparison | Simple two-column table. No underlying assumptions |
| Consideration for the IT department | Just one line saying "We'll supplement with MSP" |

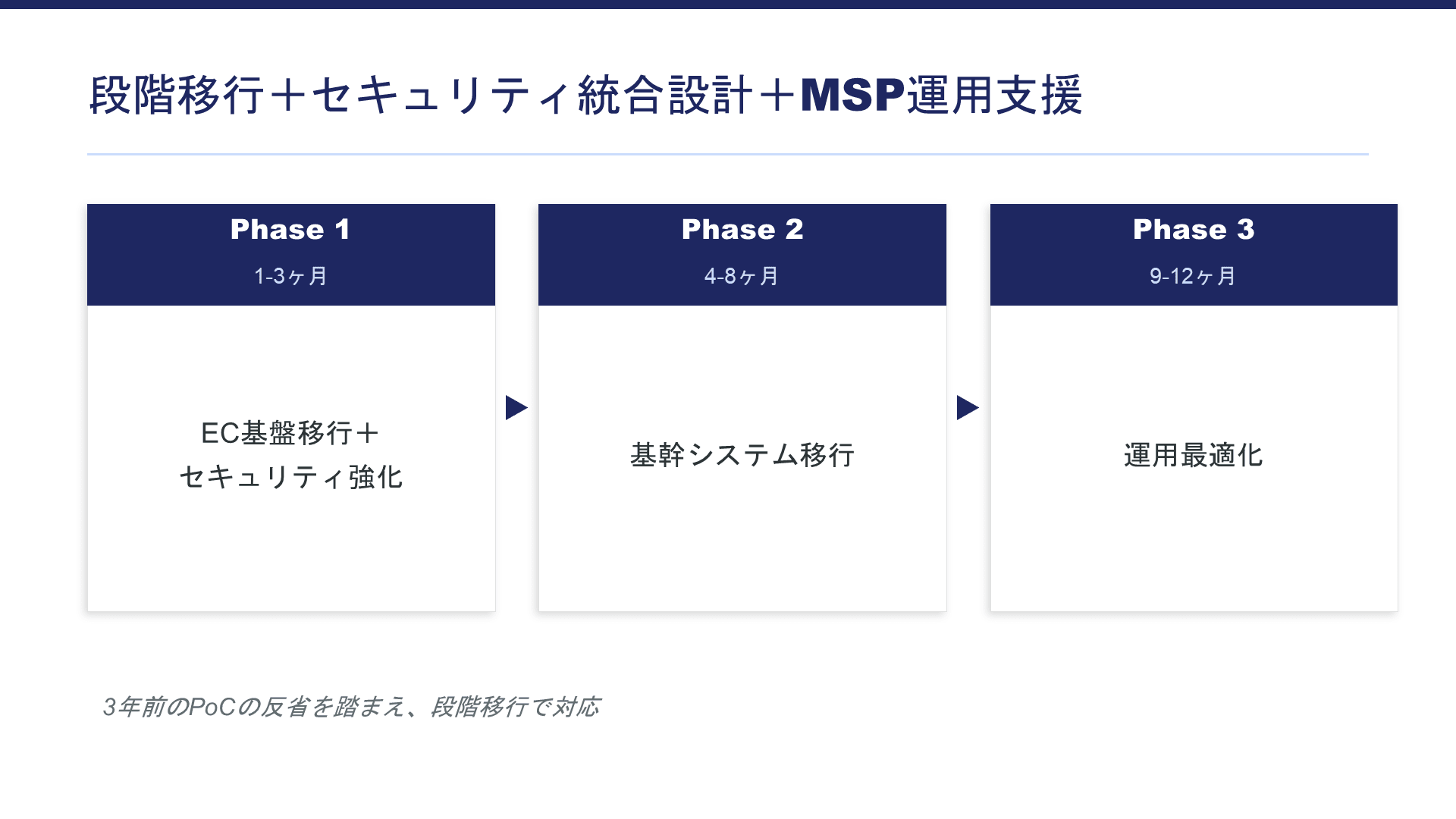

Three isn't enough! Adding more roles to improve quality!

After consulting with Claude, I added three more roles as follows:

┌─────┼─────┐

Researcher/Designer/Critic ← 3 roles working in parallel

└─────┬─────┘

Structure Coordinator ← Integrates output from the 3 roles

↓

Writer ⇄ Editor-in-Chief ← Write → Review → Revise → Approve
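The fan-out and integration step in this diagram can be sketched in Python as follows. This is a conceptual model under stated assumptions, not how Claude Code schedules agents internally: `make_stub`, `run_parallel`, and `structure_coordinator` are hypothetical names, and each role is replaced by a stub that returns a labelled string.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def make_stub(role: str) -> Callable[[str], str]:
    """Build a hypothetical stand-in for one role (no AI is called)."""
    def run(brief: str) -> str:
        return f"{role} notes on {brief}"
    return run


# The three roles the diagram shows working in parallel.
PARALLEL_ROLES: Dict[str, Callable[[str], str]] = {
    name: make_stub(name) for name in ("researcher", "designer", "critic")
}


def run_parallel(brief: str) -> Dict[str, str]:
    """Give the same brief to every parallel role and collect the outputs."""
    with ThreadPoolExecutor(max_workers=len(PARALLEL_ROLES)) as pool:
        futures = {
            name: pool.submit(role, brief)
            for name, role in PARALLEL_ROLES.items()
        }
        return {name: future.result() for name, future in futures.items()}


def structure_coordinator(outputs: Dict[str, str]) -> str:
    """Integrate the three outputs into one outline for the writer."""
    return "\n".join(f"{name}: {text}" for name, text in outputs.items())


outline = structure_coordinator(run_parallel("Ishikawa Company, Inc."))
print(outline)
```

The design choice worth noticing is that the three roles receive the same input independently, so the critic's objections are not softened by having read the other roles' work first.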

Among the three added roles, the most important is the critic, who plays devil's advocate.

Its role is to anticipate every point where the customer might push back: "What if the customer challenges us on this?"

The 3-person version lacked this "hole finder."

Additionally, the "Reviewer" evolved into an "Editor-in-Chief."

I instructed it to review each draft on four axes (customer perspective, logic, "So What?", and structure) and to send the draft back to the writer if it falls short of the standard.
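A review gate of this kind can be sketched in Python as below. Everything here is an assumption for illustration: the article does not say how the scores are combined or what the pass mark is, so the mean, `APPROVAL_THRESHOLD`, `MAX_ROUNDS`, and the stub scores are hypothetical, and `stub_review` and `stub_revise` merely stand in for the Editor-in-Chief and the Writer.

```python
from statistics import mean
from typing import Callable, Dict, Tuple

AXES = ("customer perspective", "logic", "so what", "structure")
APPROVAL_THRESHOLD = 4.5  # hypothetical; the article does not state a cut-off
MAX_ROUNDS = 3            # hypothetical safety limit on review rounds


def overall_score(scores: Dict[str, float]) -> float:
    """Average the four axis scores (assumed aggregation rule)."""
    missing = [axis for axis in AXES if axis not in scores]
    if missing:
        raise ValueError(f"missing axes: {missing}")
    return mean(scores[axis] for axis in AXES)


def review_loop(
    draft: str,
    review: Callable[[str], Dict[str, float]],
    revise: Callable[[str], str],
) -> Tuple[str, float, int]:
    """Review the draft and send it back for revision until it is approved."""
    for round_number in range(1, MAX_ROUNDS + 1):
        score = overall_score(review(draft))
        if score >= APPROVAL_THRESHOLD:
            return draft, score, round_number
        draft = revise(draft)
    raise RuntimeError("draft was not approved within MAX_ROUNDS")


def stub_review(draft: str) -> Dict[str, float]:
    """Illustrative scores only, not the actual per-axis results."""
    if "revised" in draft:
        return {"customer perspective": 5, "logic": 5, "so what": 5, "structure": 4}
    return {axis: 3 for axis in AXES}


def stub_revise(draft: str) -> str:
    return draft + " (revised)"


final, score, rounds = review_loop("first draft", stub_review, stub_revise)
print(final, score, rounds)
```

Compared with a one-shot quality check, the difference is the return path: a draft that fails the gate goes back to the writer instead of being delivered as is.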

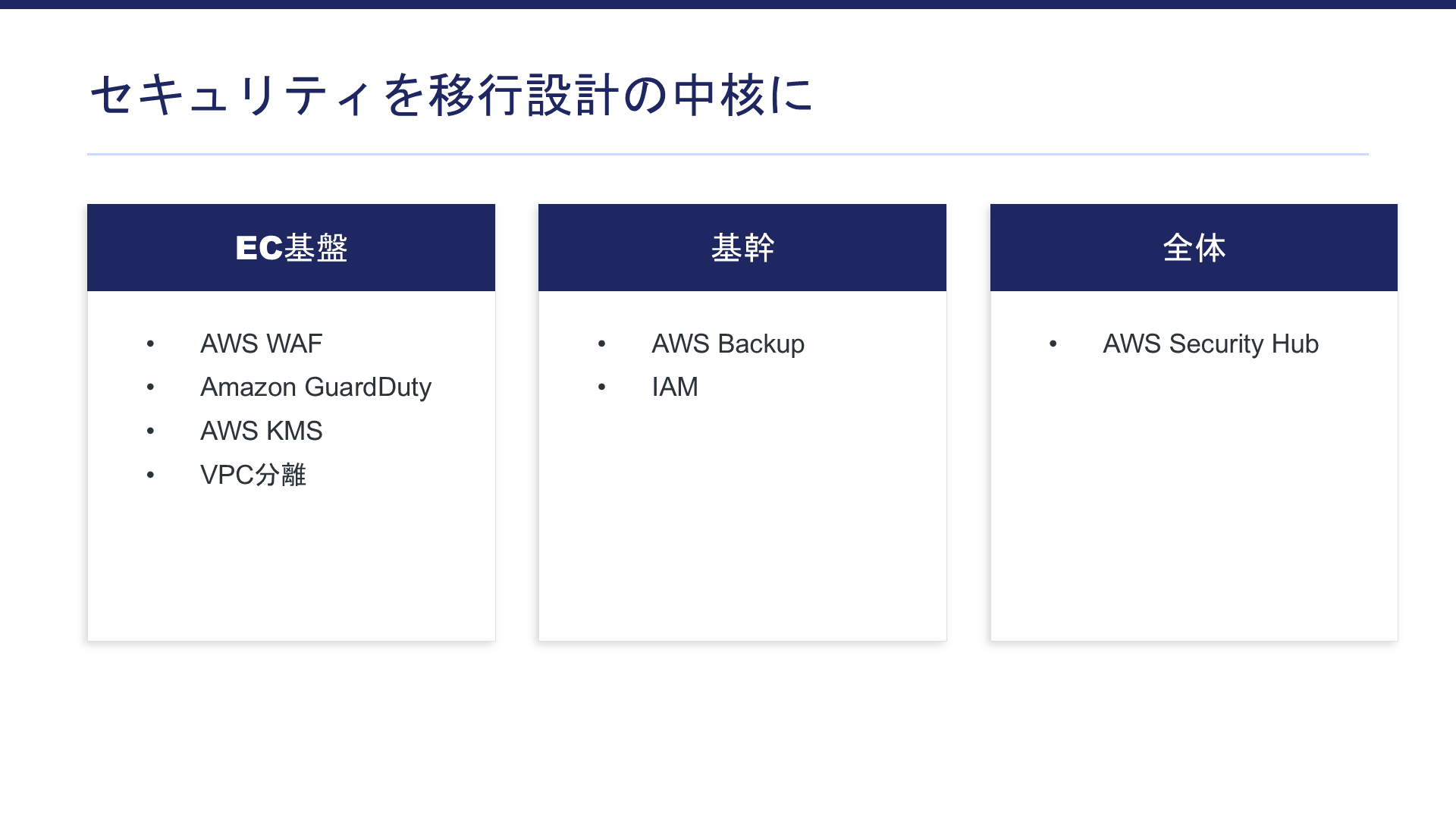

The 6-person team proposal became more multi-faceted and logical

I ran the same assignment with this team.

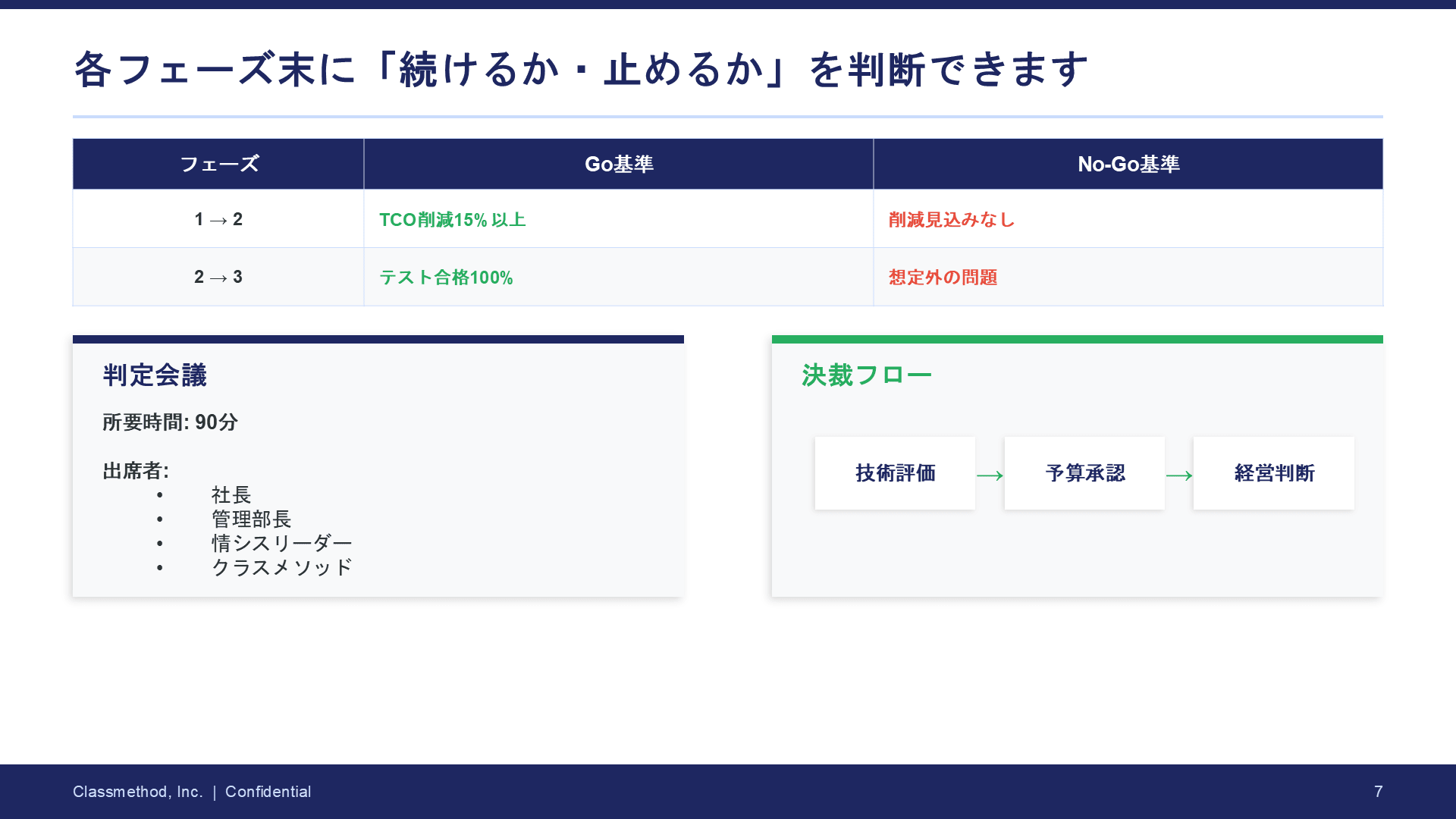

In Phase 4, the Editor-in-Chief reviewed and determined "revisions needed," identifying 4 mandatory corrections:

- TCO comparison appears to show "numbers that are favorable to us"

- No direct response to the Administrative Manager's concerns about variable charges

- Insufficient concrete consideration for the IT System Leader

- The urgency of security issues is buried in the structure

After that, the Writer created a revised version for re-review. It received a score of 4.75/5.0 and was approved.

The completed proposal is as follows.

3 people vs. 6 people: What changed

Comparing the two proposals from the same assignment, here are three points where the differences were most striking.

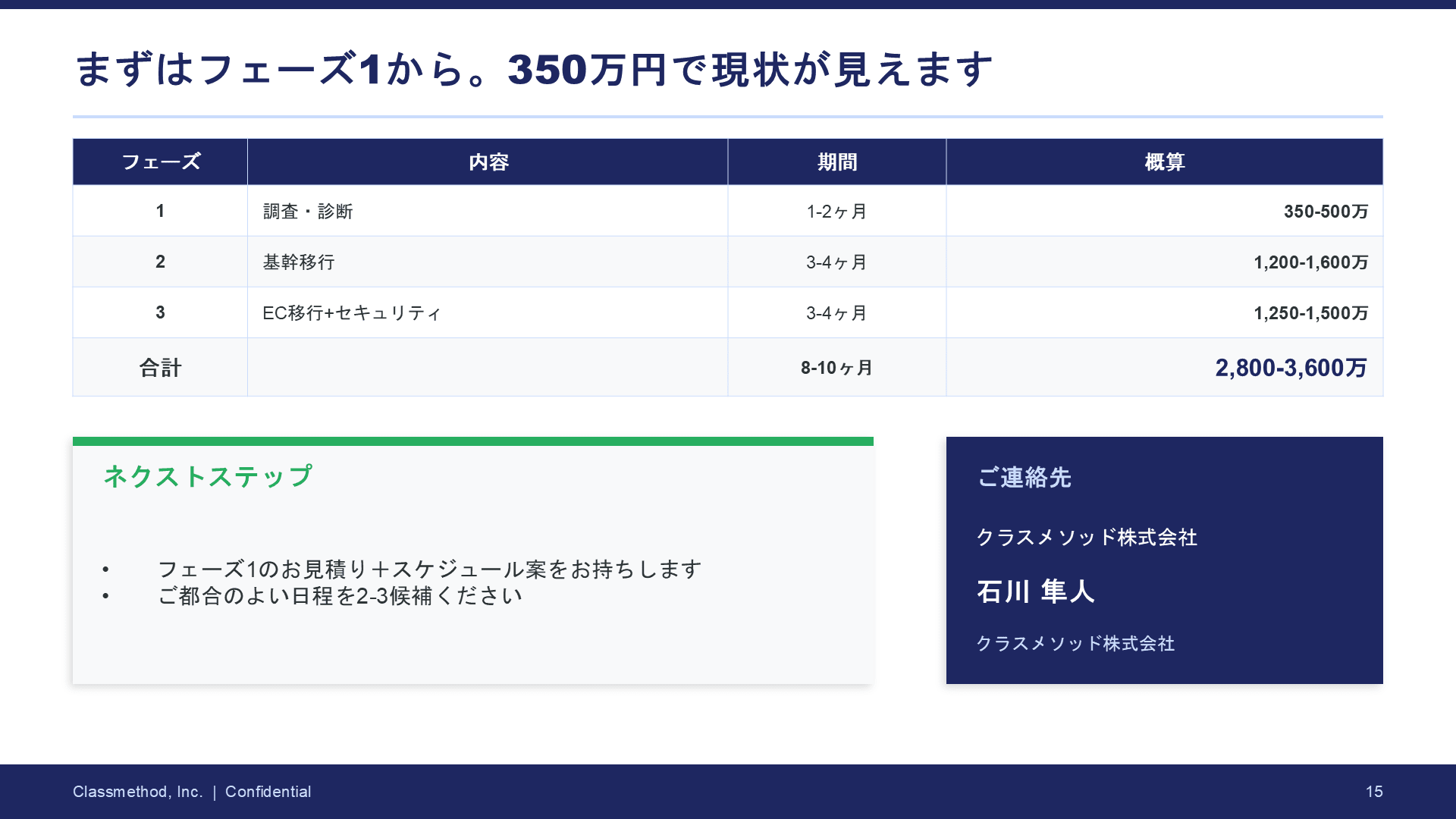

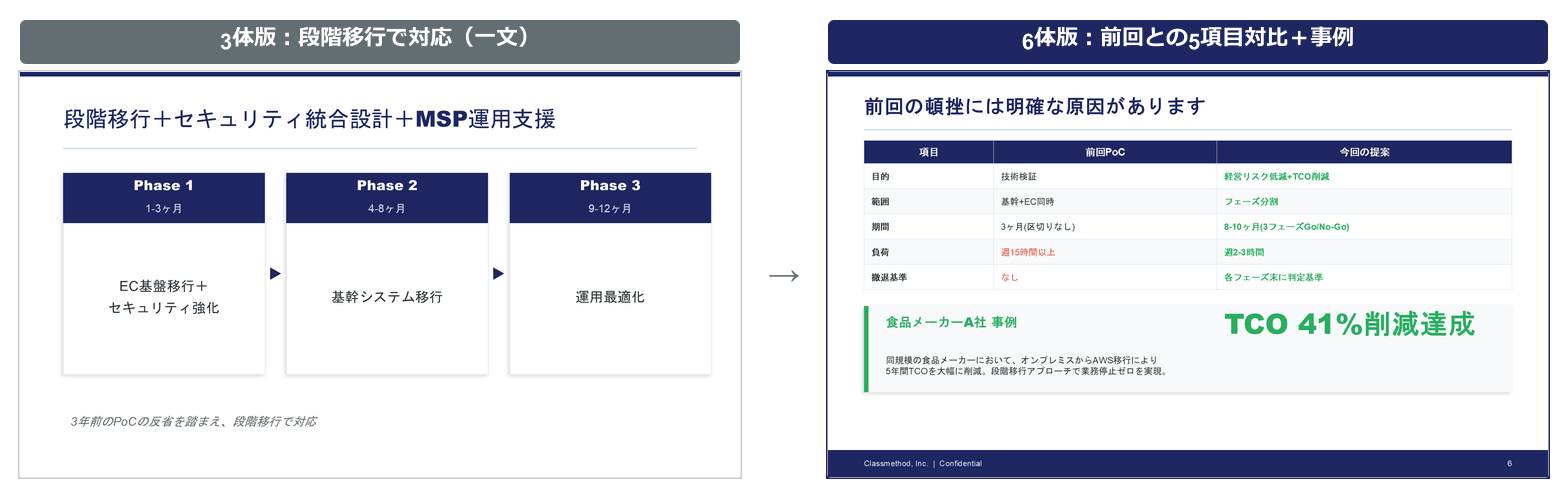

Comparison 1: Addressing the stalled PoC

| | 3-person version | 6-person version |
|---|---|---|
| Content | One line about "phased migration" | 5-item comparison table with previous attempt + Go/No-Go criteria + approval flow + food industry case studies |
| Depth | Logically correct but brief | Structurally proves "what's different from last time" |

Why the difference: The critic pointed out, "The biggest psychological barrier appears to be that the Administrative Manager experienced a budget approval failure 3 years ago. Saying 'it'll be fine with phased migration' is emotionally insufficient."

In response, the structure coordinator designed an independent section, the writer included a comparison table, and the editor-in-chief demanded more specific Go/No-Go criteria.

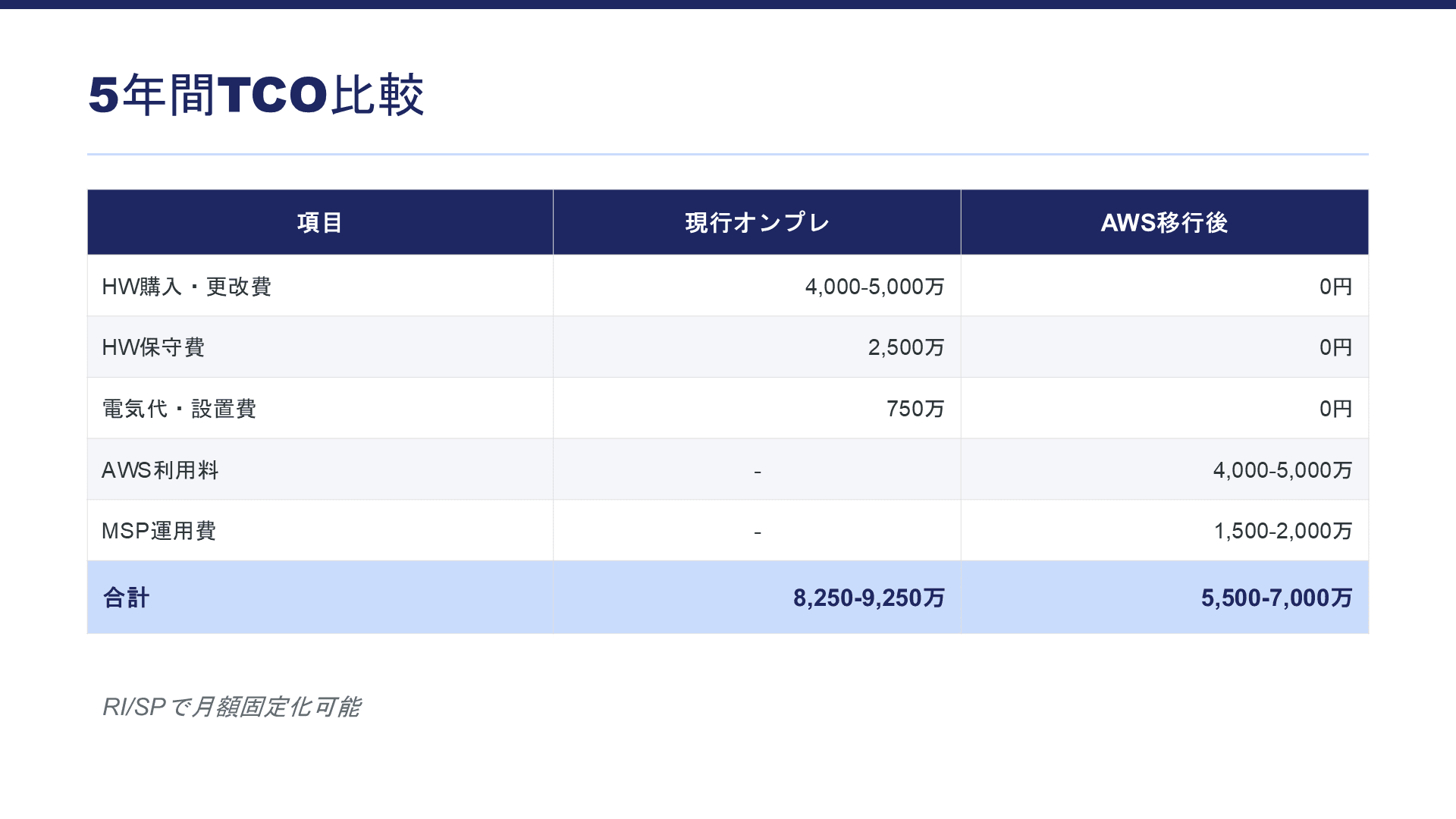

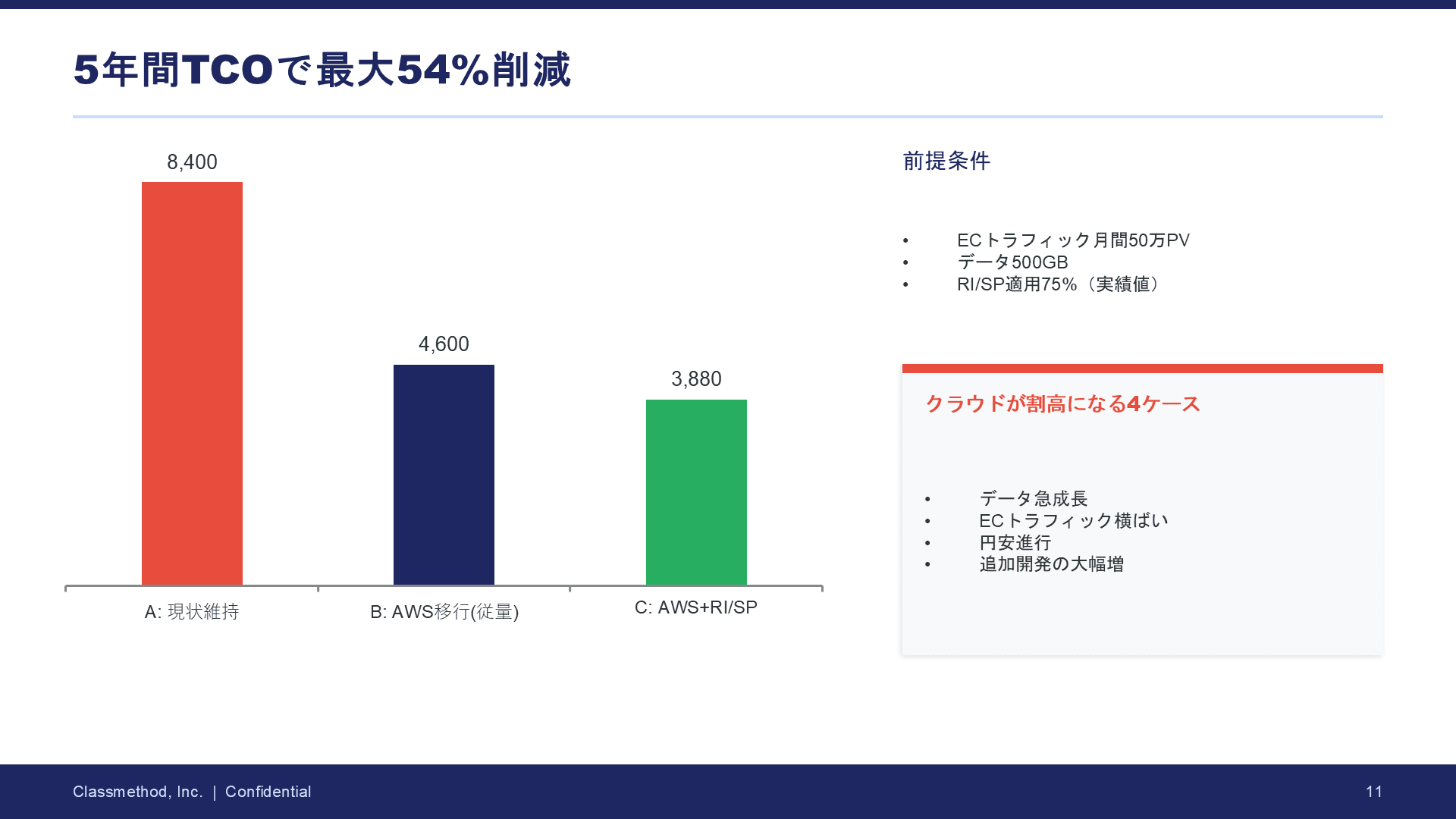

Comparison 2: Persuasiveness of TCO comparison

| | 3-person version | 6-person version |
|---|---|---|
| Number of scenarios | 2 columns (on-premises vs AWS) | 3 scenarios (maintain status quo/AWS variable/AWS+fixed) |
| Assumptions | None listed | Clearly states traffic volume, data volume, exchange rates, etc. |
| Honesty | One-sided "cloud is cheaper" | Honestly discloses 4 cases where costs could be higher |

Why the difference: The critic noted, "Listing only favorable numbers looks like a sales pitch," and the editor-in-chief commented, "The proposal is over once you lose trust in the numbers."

Deciding to "deliberately include unfavorable information" is a call that even human reviewers might struggle to make.

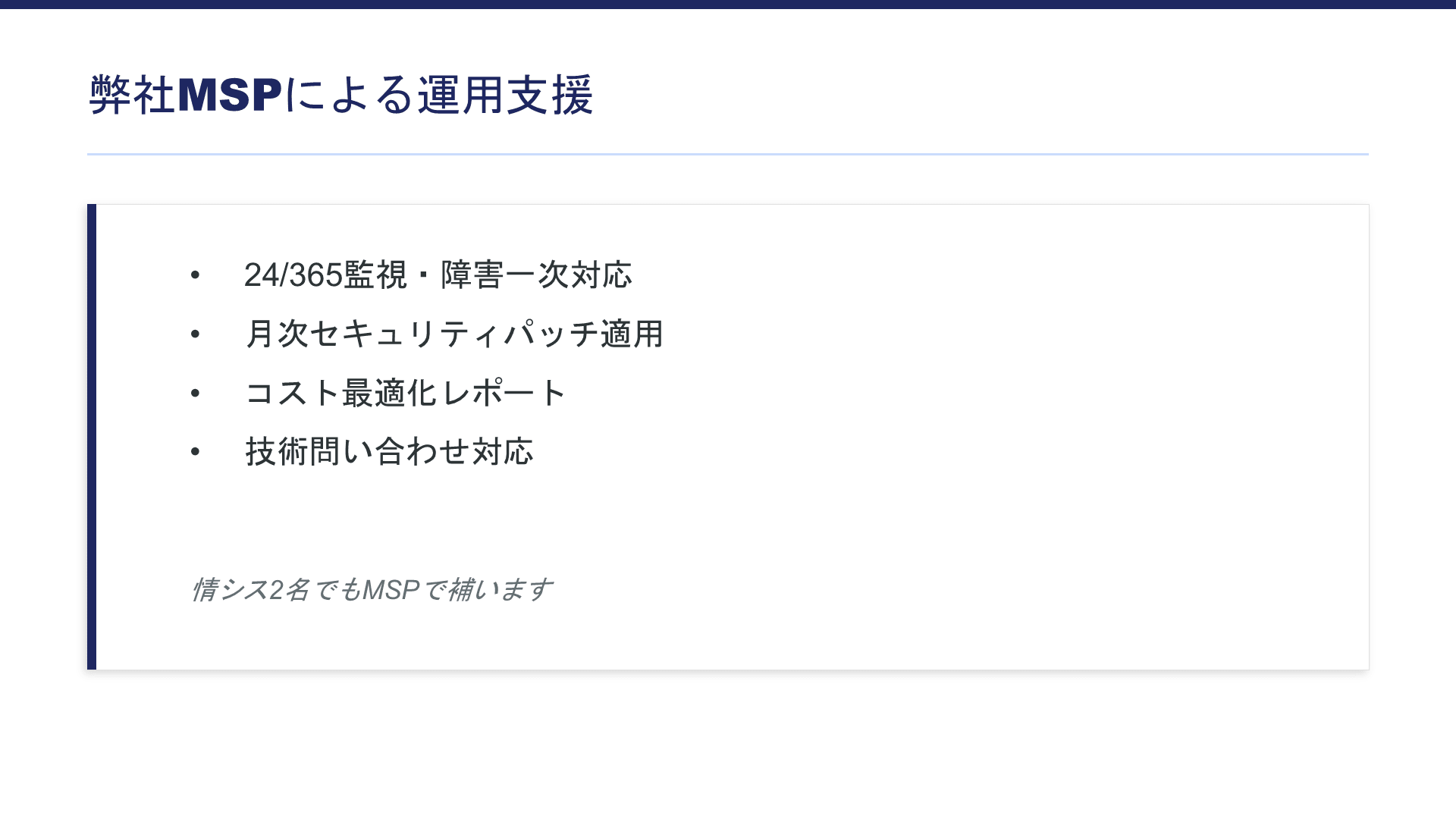

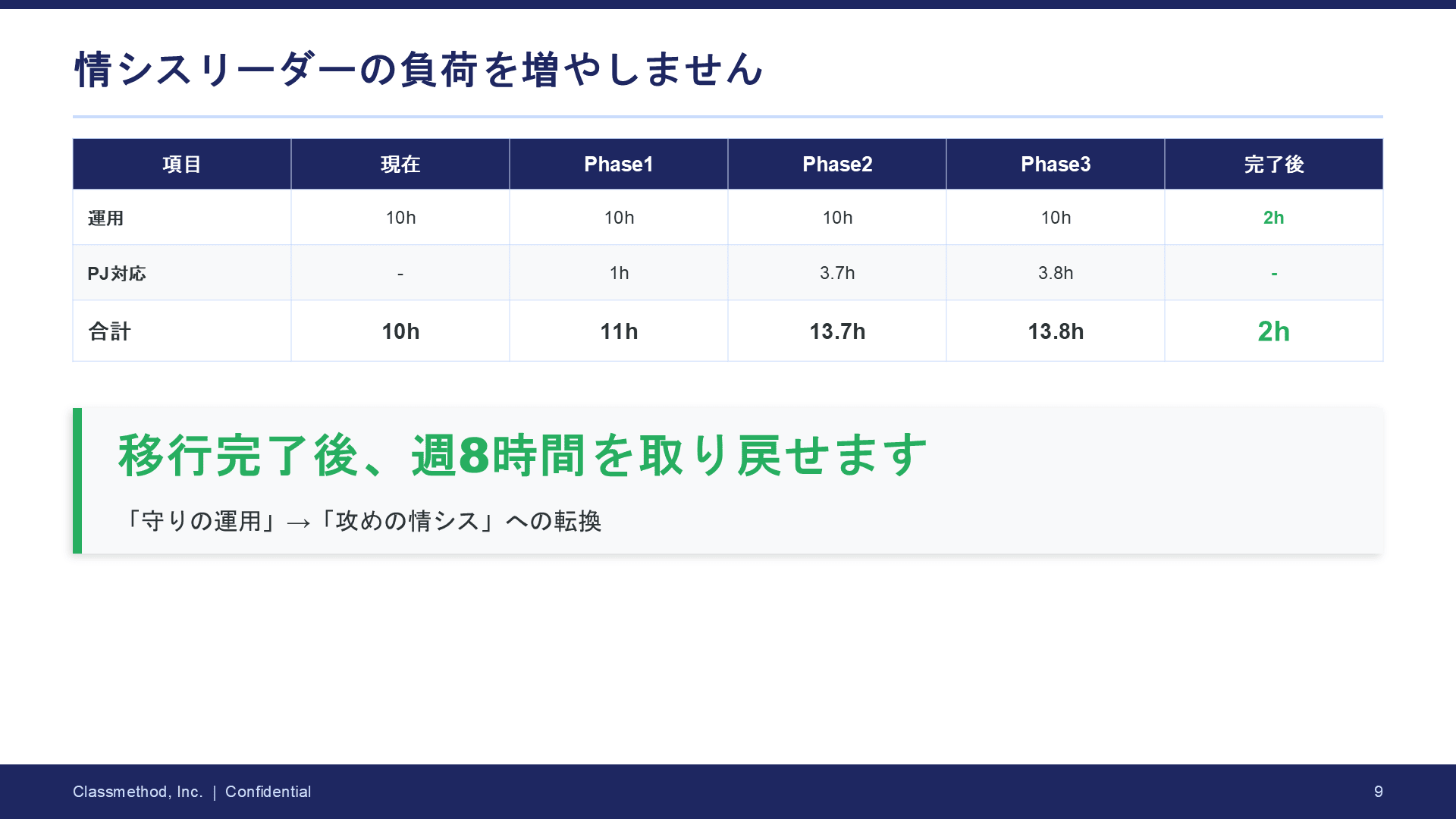

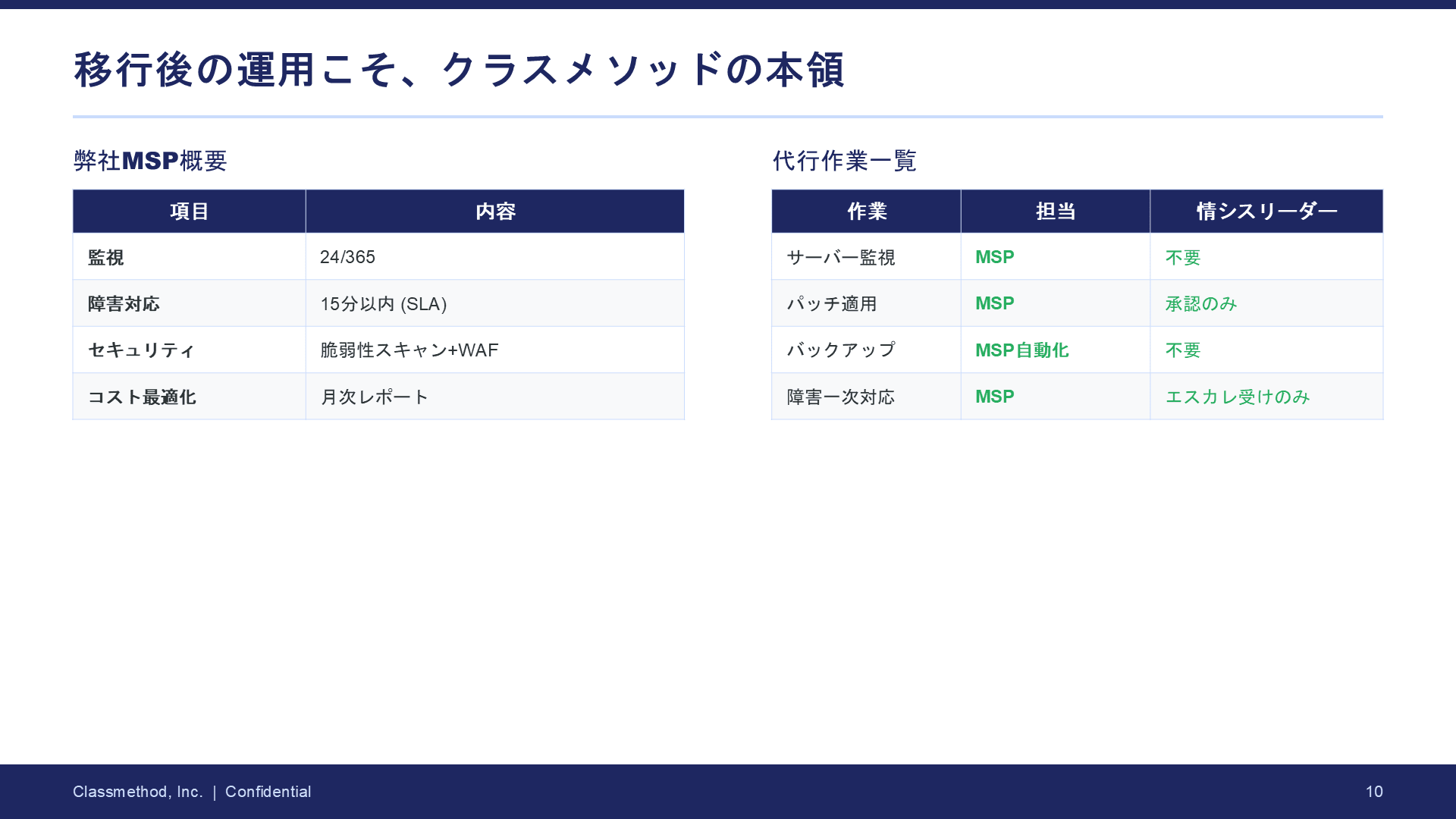

Comparison 3: Consideration for the IT System Leader

| | 3-person version | 6-person version |
|---|---|---|
| Depth of consideration | One line about "We'll supplement with MSP" | Phase-by-phase task list + breakdown of work hours + list of tasks handled for you |
| Message | None | Concrete statement: "After migration completion, you'll reclaim 8 hours per week" |

Why the difference: The editor-in-chief indicated, "Leader Sato's real concern is 'Will I have more time or be busier?' Just explaining MSP doesn't resolve the anxiety" and mandated revisions.

The final version shows workload in numbers and closes with a positive message.

The difference makers were the "critic" and the "feedback loop"

The power of the critical reviewer

The 3-person version lacked someone to "find holes in the proposal." In the 6-person version, the critic made these observations in advance:

- "They will ask 'We entrusted an SI vendor 3 years ago and it failed. Why will this time be different?'"

- "Risk that the TCO comparison may appear arbitrary"

- "Risk that the project could be handed off to a security specialist vendor"

These observations were passed to the structure coordinator, seemingly changing the very structure of the proposal.

The editor-in-chief's feedback loop

The 3-person version ended after the writer wrote it.

In contrast, the 6-person version had a "write → critique → fix → confirm" loop, which pushed up the quality. In particular, the 4 mandatory corrections that came from the "revisions needed" determination greatly changed the quality of the final draft.

What I realized from this experience

Instructions to AI are like "work manuals for junior staff." Agent definitions simply involve writing in Markdown things like "first organize the customer's challenges" and "check from this perspective." I didn't write any code.

Without business knowledge, even an AI team can't function. The most time-consuming part wasn't building the AI team but developing the fictional customer scenario. "A PoC stalled 3 years ago," "The Administrative Manager is concerned about variable charges." Only with realistic settings can the AI team produce deep proposals.

Put another way, good proposals require high-quality information. That demands strong interviewing skills from sales professionals, and an organizational structure that can store and use this information across the company will likely become increasingly important.

Adding just one "critic" makes a difference. If asked whether all 6 roles are necessary, I think adding just a critical reviewer would make a significant difference. Having someone who identifies "what are the weaknesses of this proposal" raises the quality to the next level.

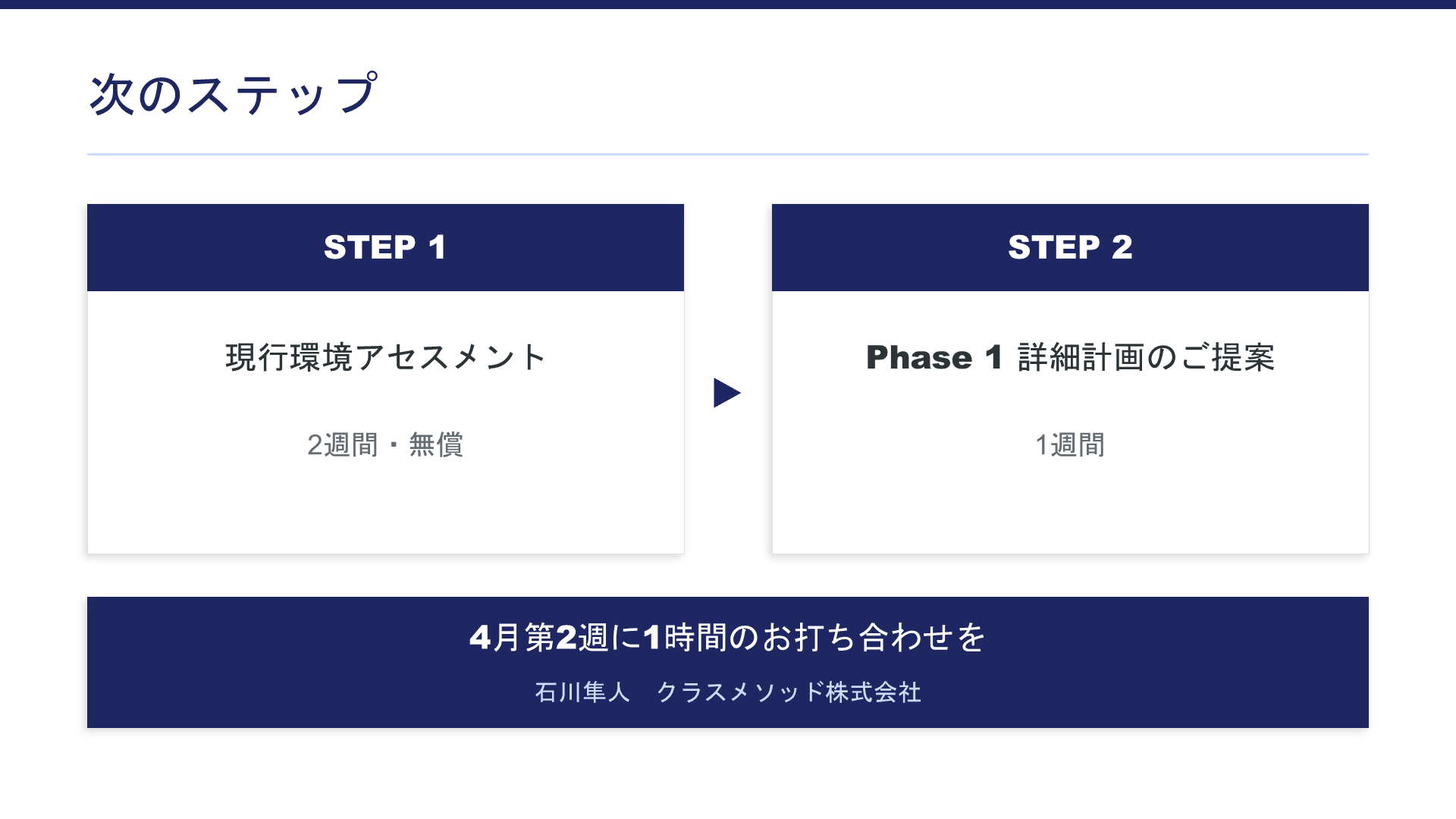

Conclusion: Start with just one role and try it, even if your idea is still vague

You don't need to create a large team right away.

Start by adding just one "reviewer." Even just asking Claude to "point out weaknesses from the customer's perspective" for a proposal you've written will broaden your viewpoint.

From there, you can gradually expand by adding a critic, a structure coordinator, and other roles as needed. If you tell Claude, "I want to create this kind of agent," it will help write the definition file too.

The more you interact with Claude, the more interested you become in trying new approaches, which is part of Claude's appeal.

I encourage you to build your own team.

Finally, to avoid trouble, always review everything that the AI outputs.

You're the one who will be using the proposal.

Be sure to check that the assumptions are correct and that the output is logical and consistent.