Building a Local AI Coding Environment with Ollama and OpenCode


AI companies are competing fiercely to improve model accuracy. As a result, the cost of operating AI systems is trending upward, and I expect pricing to shift from flat subscriptions toward usage-based billing.

I use Claude Pro for personal work, and signs of cost pressure are already visible. In March 2026, session limits began to be consumed faster during peak hours (weekdays 5:00-11:00 PT), and a weekly cap on compute usage now applies on top of the 5-hour session limit. Subscription limits may tighten further, or plans may move to usage-based billing. To avoid scrambling for alternatives when that happens, I decided to explore local LLMs.

In this article, I set up Ollama as a local LLM runtime and install OpenCode as an AI coding agent to build a completely local AI coding environment.

Test Environment

- MacBook Pro (M1 Pro / 32GB)

- macOS 26.4 (25E246)

- Ollama 0.20.5

- OpenCode 1.4.3

- gemma4:26b (executed via Ollama)

What is Ollama

Ollama is an open-source tool for running LLMs in a local environment. It allows you to download and run various open-source models like Meta Llama and Google Gemma with a single command.

What is OpenCode

OpenCode is an open-source terminal-based AI coding agent. Similar to Claude Code or GitHub Copilot CLI, it creates and edits code based on natural language instructions in the terminal.

The key feature of OpenCode is its compatibility with over 75 LLM providers. In addition to cloud models from OpenAI, Anthropic, and Google, it can also use local models running through Ollama. This means you can run an AI coding agent completely locally without depending on external APIs.

Step 1: Install Ollama

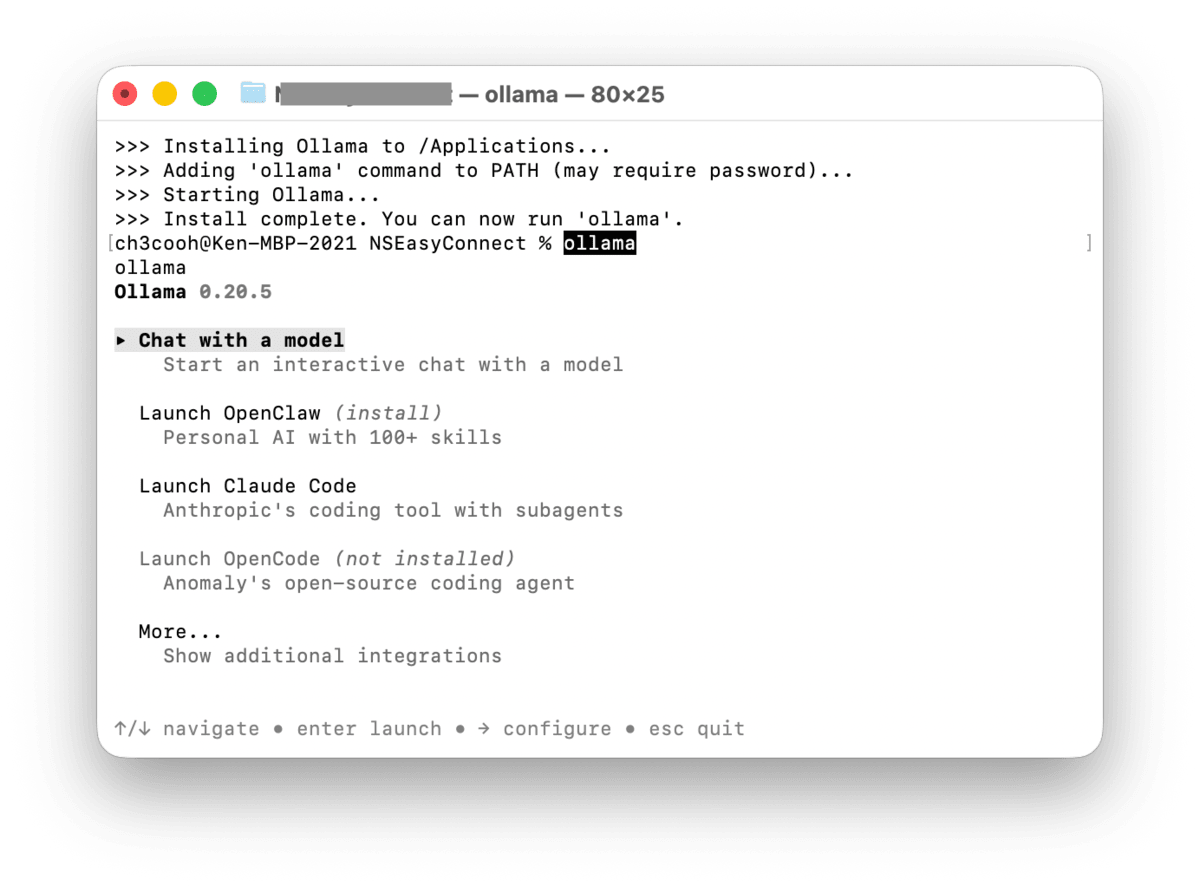

First, let's install Ollama. While it can be installed via Homebrew, the official GitHub README.md recommends using their installation script, so we'll use that method.

curl -fsSL https://ollama.com/install.sh | sh

After installation, launch Ollama.

ollama

The Ollama menu will appear in the terminal.

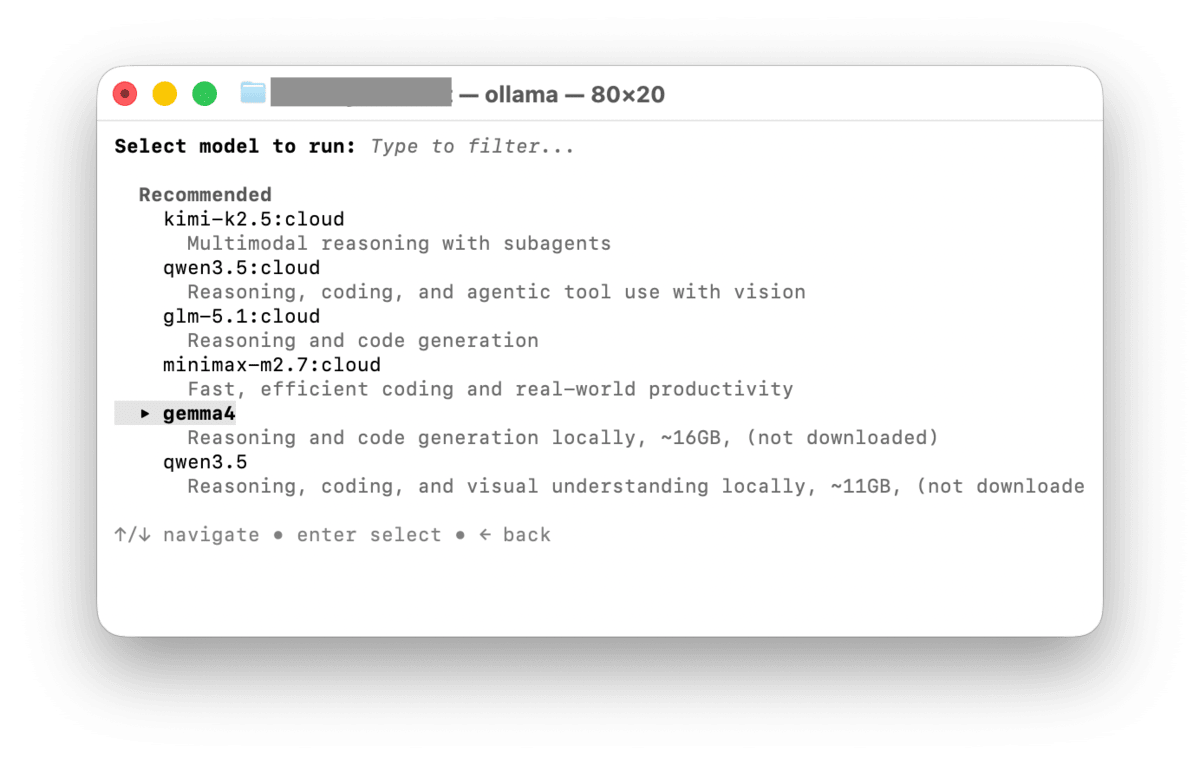

Selecting "Chat with a model" lets you choose from several models. Since we want to try a local LLM, let's select gemma4.

After the download completes, the chat will start automatically. If you receive a normal response, the Ollama installation was successful.

Enter /exit to exit Ollama for now.
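As an additional check outside the interactive menu, the following sketch confirms that the ollama command is on the PATH and that the local server answers over HTTP. It assumes Ollama's default port 11434 and its /api/version endpoint; OLLAMA_URL and STATUS are variable names introduced here for illustration.

```shell
# Sketch: confirm the Ollama CLI is installed and the local server responds.
# OLLAMA_URL and STATUS are illustrative names; 11434 is Ollama's default port.
OLLAMA_URL="${OLLAMA_URL:-http://localhost:11434}"

if command -v ollama >/dev/null 2>&1; then
  ollama --version
else
  echo "ollama is not on PATH"
fi

# GET /api/version returns a small JSON document when the server is running.
if curl -fsS --max-time 5 "$OLLAMA_URL/api/version"; then
  echo
  STATUS="reachable"
else
  STATUS="not reachable"
fi
echo "Ollama server is $STATUS at $OLLAMA_URL"
```

If the server is reported as not reachable, starting the Ollama app (or running ollama serve in another terminal) and retrying is a reasonable next step.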

Step 2: Download the Model

In Step 1, we downloaded a model through the interactive selection during first launch, but for OpenCode, we'll explicitly download a model with a specified parameter size.

We'll use gemma4:26b for this demonstration. gemma4 is an open model from Google, based on Gemini and released in April 2026. As described below, I chose it because it responds quickly and runs comfortably on local hardware.

ollama pull gemma4:26b

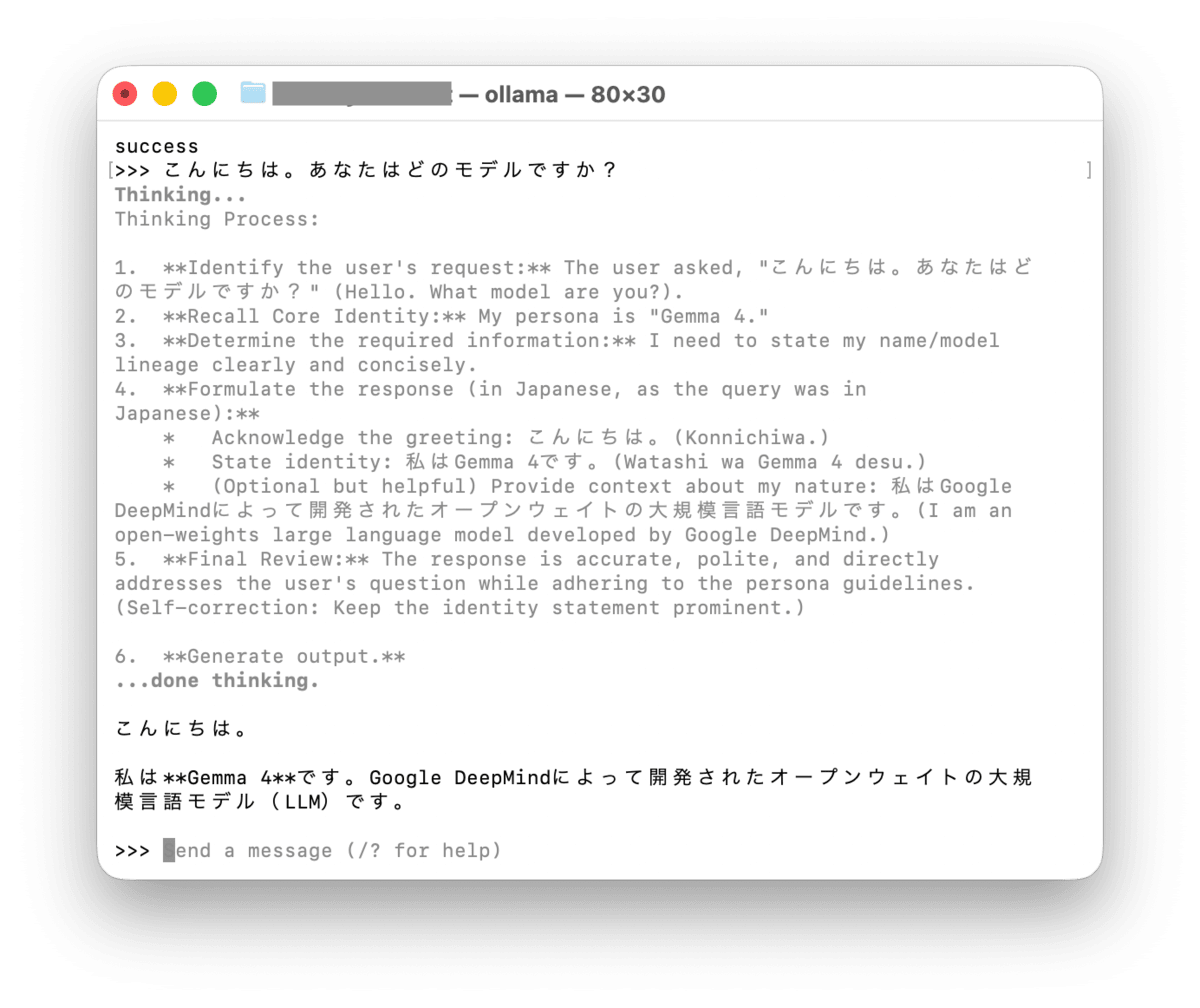

After the download is complete, let's test it.

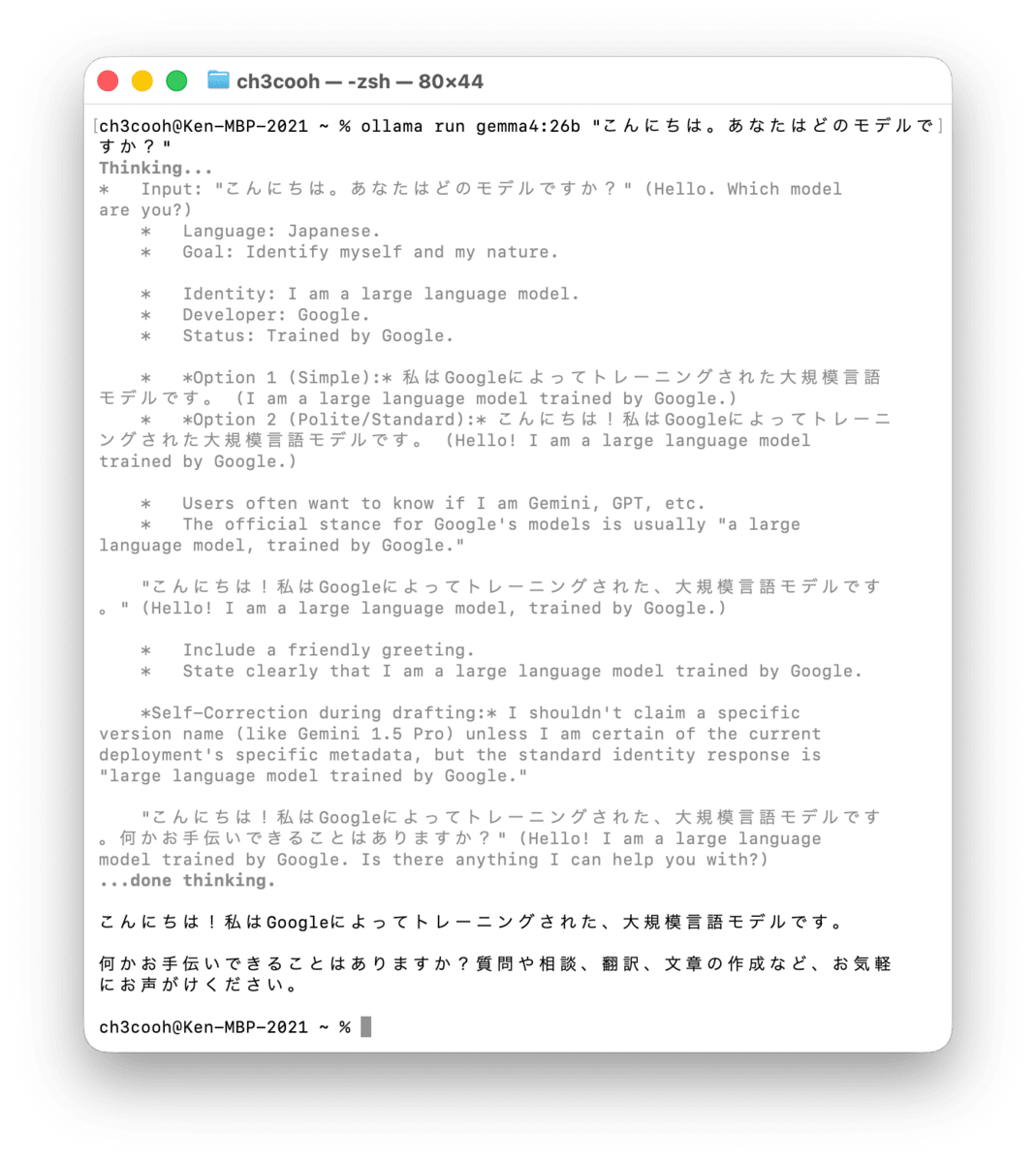

ollama run gemma4:26b "Hello. Which model are you?"

I also tried qwen3.5:27b, but in my environment its responses were too slow to be usable. In contrast, gemma4:26b responded quickly and gave reasonably accurate answers. Performance will vary depending on available memory.

I've heard that qwen3.5 is highly rated for coding performance, so I plan to try it again later.
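To put numbers on "fast" and "slow", both models can be given the same prompt with timing output turned on. This is a rough sketch: it assumes both models have already been pulled (ollama run downloads a missing model first), and MODELS and PROMPT are names introduced here.

```shell
# Sketch: compare the response speed of two local models on the same prompt.
MODELS="gemma4:26b qwen3.5:27b"
PROMPT="Write a Python function that reverses a string."

for model in $MODELS; do
  echo "== $model =="
  if command -v ollama >/dev/null 2>&1; then
    # --verbose prints timing statistics, including the eval rate in tokens/s.
    ollama run --verbose "$model" "$PROMPT"
    # Show loaded models, their memory footprint, and the CPU/GPU split.
    ollama ps
  else
    echo "ollama is not on PATH; skipping"
  fi
done
```

The eval rate line is the most direct point of comparison. If ollama ps shows part of a model running on the CPU, the model may not fit in GPU-accessible memory, which could explain slow responses.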

Step 3: Install OpenCode

Next, let's install OpenCode.

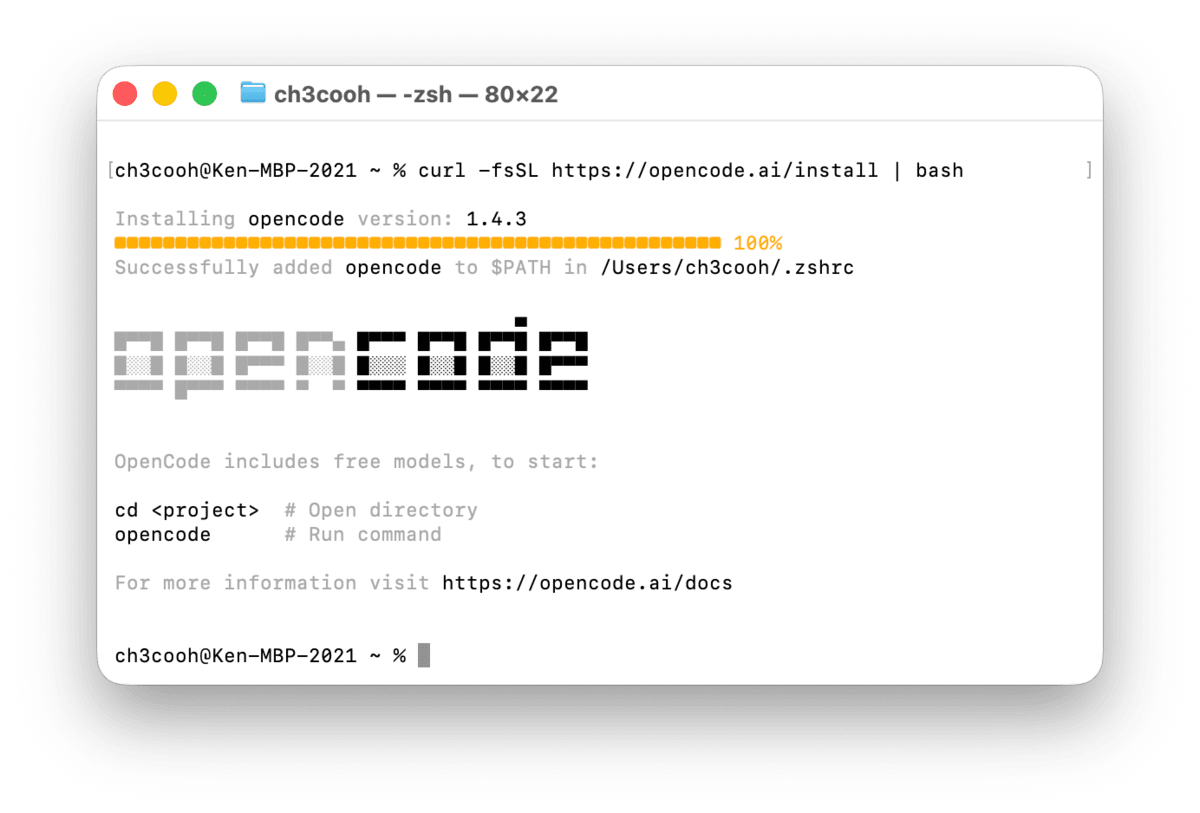

curl -fsSL https://opencode.ai/install | bash

After installation, check the version.

opencode --version

1.4.3

Step 4: Configure OpenCode

To use Ollama models with OpenCode, you need to create a configuration file. Add the following to an opencode.json file in your project's root directory, or to the global configuration at ~/.config/opencode/opencode.json.

{
  "$schema": "https://opencode.ai/config.json",
  "provider": {
    "ollama": {
      "npm": "@ai-sdk/openai-compatible",
      "options": {
        "baseURL": "http://localhost:11434/v1"
      },
      "models": {
        "gemma4:26b": {
          "name": "Gemma 4 26B"
        }
      }
    }
  }
}

Key configuration points:

- npm specifies the AI SDK provider package that OpenCode uses internally. For Ollama integration, use @ai-sdk/openai-compatible. OpenCode will automatically install this package, so manual installation is not required.

- baseURL specifies Ollama's OpenAI-compatible endpoint (http://localhost:11434/v1). Note that we use /v1, not Ollama's native /api endpoint.

- models specifies the model name downloaded with Ollama.
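Before starting OpenCode, it can help to call the same /v1 endpoint directly. The sketch below assumes Ollama is running on its default port and that gemma4:26b has been pulled; BASE_URL, MODEL, and PAYLOAD are names introduced here. /v1/models and /v1/chat/completions belong to Ollama's OpenAI-compatible API.

```shell
# Sketch: exercise the OpenAI-compatible endpoint that OpenCode is configured to use.
BASE_URL="http://localhost:11434/v1"
MODEL="gemma4:26b"

# List the model IDs Ollama exposes; the key under "models" in opencode.json
# should match one of these exactly.
curl -fsS --max-time 5 "$BASE_URL/models" || echo "could not reach $BASE_URL"
echo

# Minimal chat request in the OpenAI chat-completions format.
PAYLOAD=$(printf '{"model": "%s", "messages": [{"role": "user", "content": "Reply with the single word: ready"}]}' "$MODEL")

curl -fsS --max-time 120 "$BASE_URL/chat/completions" \
  -H "Content-Type: application/json" \
  -d "$PAYLOAD" || echo "chat request failed"
echo
```

If the first request lists the model and the second returns a JSON reply, the baseURL and model name in the configuration are likely correct.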

Ollama's default context window varies depending on available VRAM. In this test environment (32GB unified memory), the default is 32K tokens. If you want to change this, you can adjust Ollama's num_ctx parameter.
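One way to change num_ctx is to derive a new model from gemma4:26b with a Modelfile, since the OpenAI-compatible endpoint has no request field for the context size. This is a sketch: the model name gemma4-26b-64k and the 65536-token value are arbitrary choices for illustration, and a larger context window uses more memory.

```shell
# Sketch: create a variant of gemma4:26b with a larger context window.
# NEW_MODEL and the 65536 value are illustrative, not recommendations.
NEW_MODEL="gemma4-26b-64k"
WORKDIR="$(mktemp -d)"

cat > "$WORKDIR/Modelfile" <<'EOF'
FROM gemma4:26b
PARAMETER num_ctx 65536
EOF

if command -v ollama >/dev/null 2>&1; then
  # Register the variant; it reuses the already-downloaded weights.
  ollama create "$NEW_MODEL" -f "$WORKDIR/Modelfile"
else
  echo "ollama is not on PATH; Modelfile written to $WORKDIR/Modelfile"
fi
```

To use such a variant from OpenCode, the key under models in the configuration file would need to be the new model name instead of gemma4:26b.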

Step 5: Verification

After ensuring Ollama is running, start OpenCode.

opencode

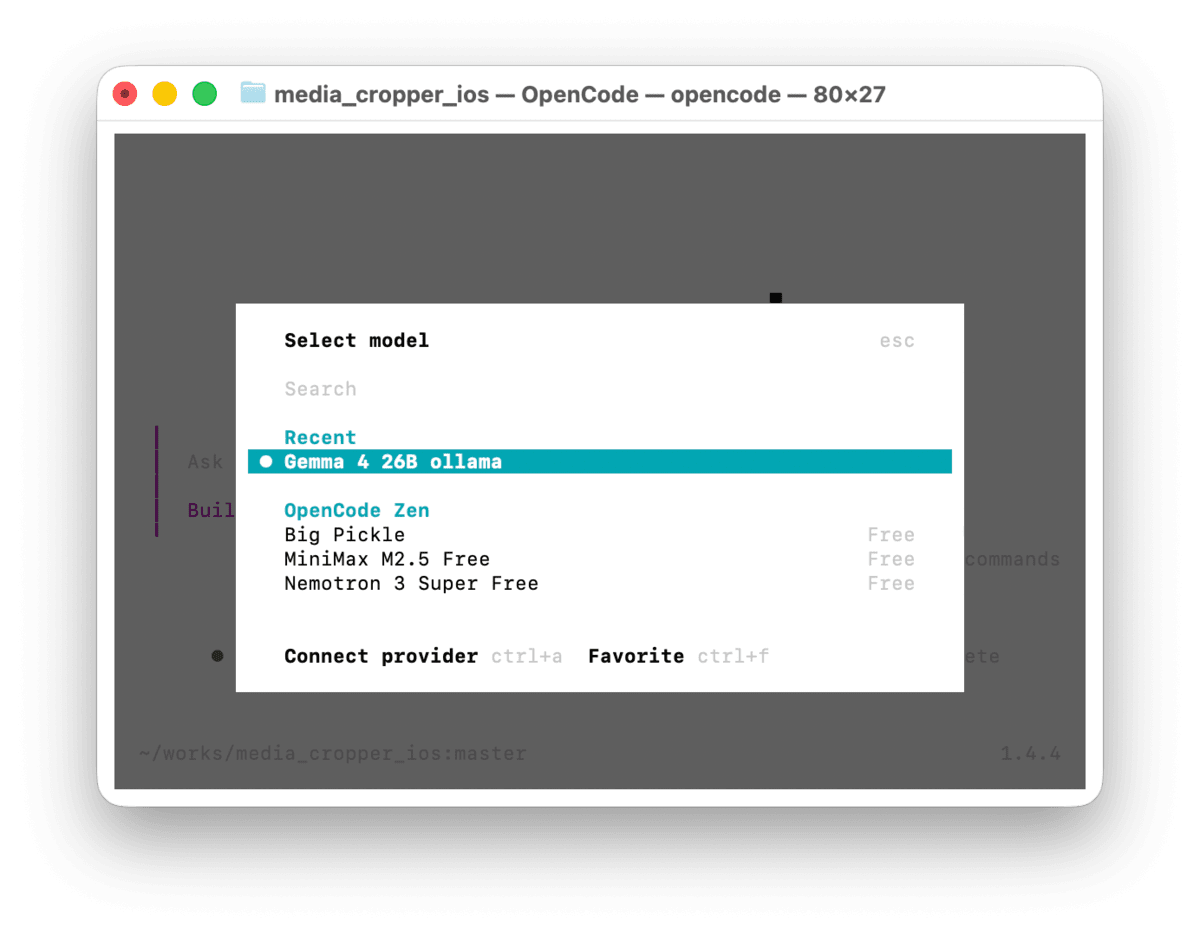

After startup, enter /models and verify that Ollama's gemma4:26b appears in the options. If it does, your configuration is correctly applied.

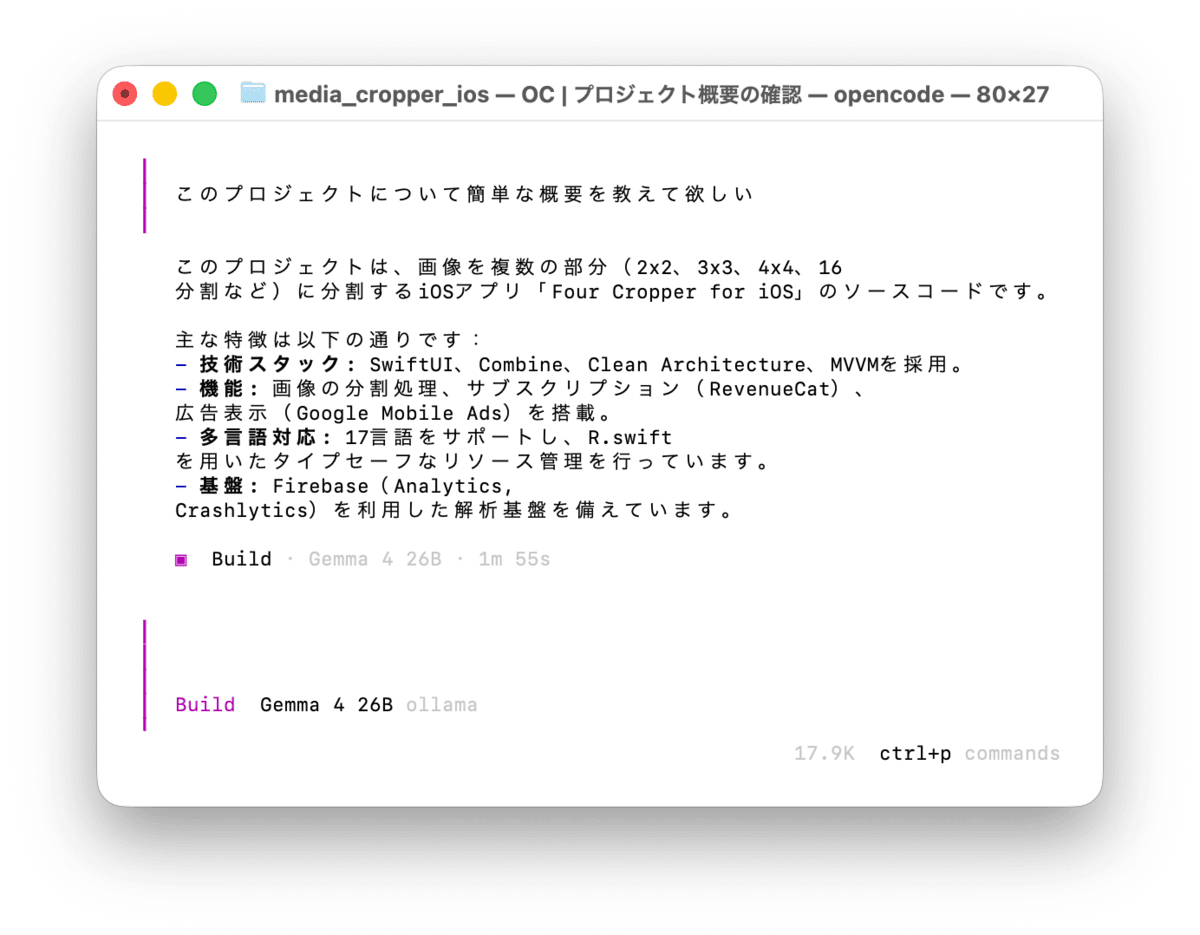

Enter a test prompt to confirm that the local model responds properly.
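The same check can also be run without the interactive UI. This sketch assumes OpenCode's run subcommand and its --model flag, which takes a provider/model pair; the provider ID ollama and the model key gemma4:26b come from the configuration file in Step 4, while PROVIDER, MODEL, and MODEL_ID are names introduced here.

```shell
# Sketch: send one prompt to the local model non-interactively.
PROVIDER="ollama"        # provider key from opencode.json
MODEL="gemma4:26b"       # model key from opencode.json
MODEL_ID="$PROVIDER/$MODEL"

if command -v opencode >/dev/null 2>&1; then
  opencode run --model "$MODEL_ID" "Write a shell one-liner that counts the lines in README.md"
else
  echo "opencode is not on PATH"
fi
```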

Conclusion

This article walked through building a locally running AI coding environment by combining Ollama and OpenCode.

Local LLMs are less accurate than cloud models, but they need no internet connection and incur no API usage fees. I believe they're a sufficient option for small code generation tasks in personal development or for projects where privacy is important.

The gemma4:26b model I used wasn't as capable for coding purposes as I had hoped, but I found it practical enough for normal Q&A and research tasks. I plan to use it for regular tasks going forward. I'm also interested in trying other models like qwen3.5:27b, which is highly rated for coding performance.

Setting up a local LLM environment to prepare for potential future price changes in cloud services is worthwhile. I hope this serves as a useful reference for those considering implementing local LLMs.