A record of tracking a non-reproducible connection issue encountered when using Supabase Realtime

Introduction

When a defect shows up during development, the usual approach is to find the cause and fix it. That works for most defects, but not for ones that only appear some of the time. These are commonly called intermittent issues.

In this article, I'll share my experience of encountering an intermittent issue while developing with Supabase Realtime, and how I pursued the cause without ultimately identifying it. This isn't a story with a clean resolution, but I'll document how I approached the intermittent issue, what I tried, what I discovered, and what remained unclear.

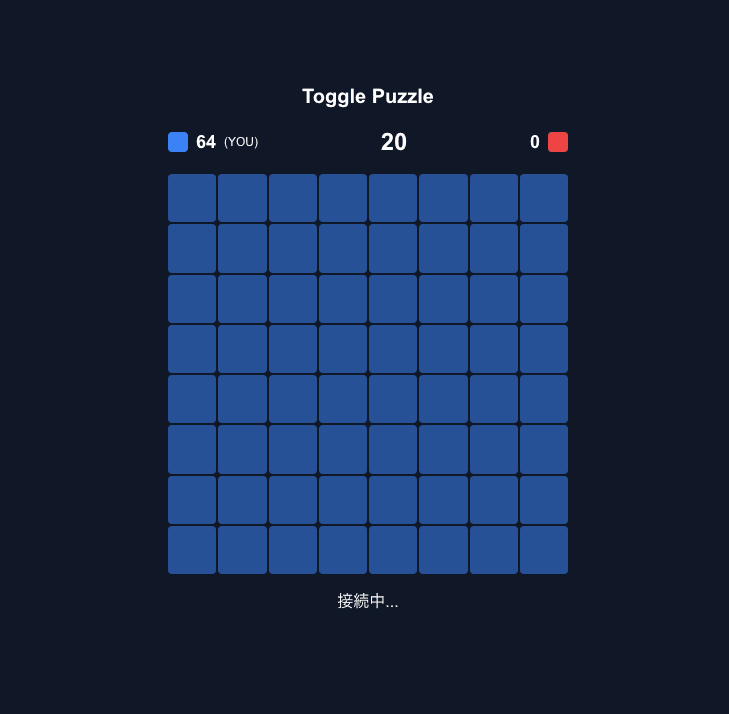

Stress test in progress

What are Intermittent Issues?

These are issues that only appear when multiple factors coincide, such as timing-dependent race conditions, temporary instability of external services, or environment-specific conditions. They often can't be detected through normal testing and suddenly appear in production environments, making them difficult to address proactively.

Target Audience

- Those struggling with connection issues using Supabase Realtime

- Those who have experienced intermittent issues with external service integrations

- Those looking for a reference on how to approach bugs that can't be reproduced

What Happened

In a previous article, I introduced an implementation example of a battle game using Supabase Realtime's Presence (article link). After deploying it to Vercel, channel subscriptions began stopping at CHANNEL_ERROR or TIMED_OUT instead of reaching SUBSCRIBED. This didn't happen every time, only intermittently. Running "restart project" from the Supabase dashboard would temporarily resolve the issue, but it would recur after a while. It happened often enough to disrupt the demo, so I needed to address it quickly.

What made this particularly troublesome was that my implementation at that time didn't properly handle subscription errors.

```typescript
.subscribe(async (status, err) => {
  if (status === "SUBSCRIBED") {
    await channel.track({});
    setIsConnected(true);
  } else if (status === "CHANNEL_ERROR" || status === "TIMED_OUT") {
    console.error("[Realtime] connection failed:", status, err);
    // ← No retry or UI notification
  }
});
```

While errors were logged to the console, nothing was displayed on the screen. From the user's perspective, the app just silently froze. This design made it even more difficult to isolate the problem.
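One way to close that gap is to report every subscribe outcome to the UI and retry failed attempts with backoff. The following is a minimal sketch, not the code from the original app: `subscribeWithRetry`, `ChannelLike`, `ConnectionState`, and the option names are all hypothetical, and the channel is described by a small structural type so the sketch compiles without supabase-js. It only covers the initial subscribe, not a drop after SUBSCRIBED.

```typescript
// Status strings passed to the subscribe callback by supabase-js.
type SubscribeStatus = "SUBSCRIBED" | "CHANNEL_ERROR" | "TIMED_OUT" | "CLOSED";

// Hypothetical minimal view of a Realtime channel (assumption for this sketch).
interface ChannelLike {
  subscribe(callback: (status: SubscribeStatus, err?: Error) => void): unknown;
}

// What the UI is told, so a failure is never silent.
type ConnectionState =
  | { kind: "connecting"; attempt: number }
  | { kind: "connected" }
  | { kind: "failed"; lastStatus: SubscribeStatus };

interface RetryOptions {
  maxAttempts: number;
  baseDelayMs: number;
  maxDelayMs: number;
  // Injectable timer so the logic can be tested; defaults to setTimeout.
  schedule?: (fn: () => void, delayMs: number) => void;
}

// Exponential backoff: base, 2*base, 4*base, ... capped at maxDelayMs.
function backoffDelay(attempt: number, baseDelayMs: number, maxDelayMs: number): number {
  return Math.min(maxDelayMs, baseDelayMs * 2 ** (attempt - 1));
}

function subscribeWithRetry(
  createChannel: () => ChannelLike,
  onState: (state: ConnectionState) => void,
  options: RetryOptions,
): void {
  const schedule = options.schedule ?? ((fn, ms) => { setTimeout(fn, ms); });

  const attemptOnce = (attempt: number): void => {
    onState({ kind: "connecting", attempt });
    let settled = false; // ignore further callbacks from this attempt's channel
    createChannel().subscribe((status) => {
      if (settled) return;
      if (status === "SUBSCRIBED") {
        settled = true;
        onState({ kind: "connected" });
      } else if (status === "CHANNEL_ERROR" || status === "TIMED_OUT") {
        settled = true;
        if (attempt >= options.maxAttempts) {
          onState({ kind: "failed", lastStatus: status });
        } else {
          const delay = backoffDelay(attempt, options.baseDelayMs, options.maxDelayMs);
          schedule(() => attemptOnce(attempt + 1), delay);
        }
      }
    });
  };

  attemptOnce(1);
}
```

In a React component, `onState` could feed a state setter so that `failed` renders a visible error and `connecting` shows the attempt number. `createChannel` would wrap the existing `supabase.channel(...)` setup and should remove the previously failed channel before creating a new one.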

At the time, I assumed that insufficient disconnect handling was leaving stale state to accumulate on the server, so I quickly applied several fixes: adding disconnect handling on beforeunload / pagehide, switching to removeAllChannels, and shortening the heartbeat interval. (Note that beforeunload / pagehide do not always fire, depending on the browser and on mobile environments, so cleanup that relies solely on them isn't robust.) At one point I thought the issue was fixed and committed the changes, but it recurred and I added further countermeasures. Eventually the symptoms subsided, but I couldn't determine which fix worked, or whether any of them worked at all.
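The disconnect handling described above can be sketched roughly as follows. This is an illustration rather than the code that was committed: `registerDisconnectCleanup` and `EventTargetLike` are hypothetical names, and the event target is passed in so the logic does not depend on a browser. Because both events can fire during the same unload, the cleanup is guarded to run only once.

```typescript
// Hypothetical minimal view of `window` (assumption for this sketch).
interface EventTargetLike {
  addEventListener(type: string, listener: () => void): void;
  removeEventListener(type: string, listener: () => void): void;
}

// Runs `cleanup` at most once when the page is hidden or unloaded.
// Returns a function that unregisters the listeners (e.g. for a React effect cleanup).
function registerDisconnectCleanup(target: EventTargetLike, cleanup: () => void): () => void {
  let done = false;
  const runOnce = (): void => {
    if (done) return;
    done = true;
    cleanup();
  };

  const events = ["pagehide", "beforeunload"];
  for (const type of events) target.addEventListener(type, runOnce);

  return () => {
    for (const type of events) target.removeEventListener(type, runOnce);
  };
}

// Browser usage (assumes an existing supabase-js client named `supabase`):
// const unregister = registerDisconnectCleanup(window, () => {
//   void supabase.removeAllChannels();
// });
```

Since neither event is guaranteed to fire, this is best treated as an optimization on top of server-side timeouts, not as the only cleanup path. A page restored from the back/forward cache would also need to subscribe again after this cleanup has run.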

Hypotheses and Verification

Later, I decided to investigate the cause again. I tested three hypotheses in sequence.

Hypothesis 1: Presence Session Residue

This was the scenario I suspected most at the time. I thought deficiencies in disconnect handling might be leaving Presence sessions on the server, corrupting the channel state. This was why I strengthened cleanup procedures.

I created a minimal reproduction app that used only Presence, and repeatedly opened and closed tabs with the pre-fix implementation (cleanup on beforeunload only, using removeChannel).

As a result, I couldn't reproduce the issue. When closing tabs, the number of Presence participants promptly decreased, and session residue didn't occur.
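For this kind of check it helps to log the participant count on every Presence sync rather than eyeballing the UI. A small sketch, assuming supabase-js v2, where `channel.presenceState()` returns an object that maps each presence key to a list of tracked payloads; `countPresence` and `PresenceStateLike` are hypothetical names.

```typescript
// Assumed shape of channel.presenceState(): presence key -> tracked payloads.
type PresenceStateLike = Record<string, unknown[]>;

// Counts distinct presence keys and the total number of tracked entries.
// A key whose list keeps growing would hint at leftover sessions.
function countPresence(state: PresenceStateLike): { keys: number; entries: number } {
  const lists = Object.values(state);
  const entries = lists.reduce((sum, list) => sum + list.length, 0);
  return { keys: lists.length, entries };
}

// Usage inside the app (assumes an existing Presence channel named `channel`):
// channel.on("presence", { event: "sync" }, () => {
//   console.log("[Presence]", countPresence(channel.presenceState()));
// });
```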

Hypothesis 2: Reaching WebSocket Connection Limit

Supabase documentation states that the Free plan has a Realtime concurrent connection limit of 200. I hypothesized that connections might be accumulating from development reloads and tab operations, reaching this limit.

So I conducted a stress test that continuously generated WebSocket connections without cleanup.

Under these test conditions, even after increasing the number of connections to 250, I didn't observe too_many_connections. The Network tab showed that all 250 connections succeeded with HTTP status 101 (Switching Protocols), meaning each WebSocket upgrade completed. The connection limit alone therefore seemed unlikely to explain the original issue.
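The harness used for this test isn't shown here. A minimal sketch of the idea, assuming raw WebSocket connections that are opened and deliberately never closed: `openWithoutCleanup`, `SocketLike`, and `REALTIME_WS_URL` are hypothetical, and the socket factory is injected so the counting logic can run outside a browser. Whether a bare socket that never joins a channel counts toward the plan limit is an open assumption.

```typescript
// Hypothetical minimal view of a browser WebSocket (assumption for this sketch).
interface SocketLike {
  addEventListener(type: "open" | "error", listener: () => void): void;
}

interface Tally {
  opened: number;
  failed: number;
}

// Opens `count` sockets and keeps every reference so none is closed or collected.
function openWithoutCleanup(
  count: number,
  createSocket: (index: number) => SocketLike,
): { sockets: SocketLike[]; tally: Tally } {
  const tally: Tally = { opened: 0, failed: 0 };
  const sockets: SocketLike[] = [];

  for (let i = 0; i < count; i++) {
    const socket = createSocket(i);
    socket.addEventListener("open", () => { tally.opened += 1; });
    socket.addEventListener("error", () => { tally.failed += 1; });
    sockets.push(socket);
  }
  return { sockets, tally };
}

// Browser usage (REALTIME_WS_URL is a placeholder for the project's Realtime endpoint):
// const { tally } = openWithoutCleanup(250, () => new WebSocket(REALTIME_WS_URL));
// setTimeout(() => console.log(tally), 10_000);
```

The tally only reflects socket-level events, so it complements the Network tab rather than replacing it.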

Hypothesis 3: A Reproducible Bug in the Original Code

As a last resort, I cloned the original project, reverted to the pre-fix commit, added logging, and ran it both locally and on Vercel against the same Supabase project.

However, it worked normally in both environments. The subscribe callback always reached SUBSCRIBED, and the issue couldn't be reproduced.
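The logging added for this run isn't reproduced here. As a sketch of what is useful to capture for an intermittent issue, the following records each subscribe status with the elapsed time since the attempt started, so a slow TIMED_OUT can be told apart from an immediate CHANNEL_ERROR. `createStatusLog` and `StatusLogEntry` are hypothetical names, and the clock is injectable for testing.

```typescript
interface StatusLogEntry {
  elapsedMs: number; // time since the log was created
  status: string;
  error: string | null;
}

function createStatusLog(now: () => number = Date.now) {
  const startedAt = now();
  const entries: StatusLogEntry[] = [];

  return {
    entries,
    // Call this from the subscribe callback for every status change.
    record(status: string, err?: Error): void {
      const entry: StatusLogEntry = {
        elapsedMs: now() - startedAt,
        status,
        error: err ? err.message : null,
      };
      entries.push(entry);
      console.log("[Realtime]", JSON.stringify(entry));
    },
    // True if any recorded status was one of the two failure states.
    sawFailure(): boolean {
      return entries.some((e) => e.status === "CHANNEL_ERROR" || e.status === "TIMED_OUT");
    },
  };
}

// Usage (assumes an existing channel):
// const log = createStatusLog();
// channel.subscribe((status, err) => log.record(status, err));
```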

What Remains by Process of Elimination

None of the three hypotheses I tested could reproduce the original symptoms.

Two clues remained: First, the Supabase Realtime logs contained MigrationsFailedToRun and ErrorOnRpcCall: timeout. Second, executing restart project temporarily resolved the issue.

From these observations, I suspect the problem wasn't solely in the application code but might have involved the state of the Supabase project, the Realtime server, PostgreSQL integration, or temporary external environment instability.

However, this is only speculation based on the logs and behavior observed at the time, and it does not establish that there was a bug on Supabase's side. Detailed server logs from when the problem occurred were retained for only 24 hours on the plan I was using, and are now lost.

The fixes I implemented might not have addressed the root cause. During the troubleshooting process, I executed restart project multiple times, which might have reset the project state, leading to recovery.

Reflection

I couldn't identify the cause. But this investigation taught me two things.

First, it is dangerous to apply fixes to an intermittent issue based on assumptions. At the time, I was convinced that "revising disconnect handling would solve it" and was satisfied when the symptoms subsided. Accepting "it's fixed" without understanding why means repeating the same trial and error when it happens again.

Second, inadequate error handling can turn an intermittent issue into a fatal one. Because the subscribe callback swallowed CHANNEL_ERROR / TIMED_OUT, the app simply stopped silently when the issue occurred. Without checking the logs, you wouldn't even know what had happened. Proper error handling and retry mechanisms are essential for any connection to an external service, not just as a defense against intermittent issues.

Conclusion

After testing and rejecting three hypotheses, I'm left with the process-of-elimination conclusion that it was "a temporary issue on the execution environment side." While it's frustrating not to identify the cause, going through the process of eliminating hypotheses provided valuable insights. I hope this approach to intermittent issues serves as a reference for others facing similar situations.